Before Google reads a single word of your content, it reads your URL. That might seem like a small detail, but it has significant consequences for how your pages get crawled, indexed, and ranked. A well-structured URL tells Google exactly what a page is about, where it fits in your site hierarchy, and whether it's worth prioritizing. A poorly structured one can confuse crawlers, waste crawl budget, and quietly bury your content before it ever has a chance to rank.

This matters more than most marketers realize. You can write exceptional content, earn quality backlinks, and nail your on-page SEO, and still struggle to rank if your URLs are a mess of parameters, redirect chains, or duplicate variations. The URL is the foundation everything else builds on.

In this guide, you'll learn how Google actually interprets URLs, the best practices for structuring them to maximize crawl efficiency, how to submit URLs to Google using multiple methods, the most common URL mistakes that block visibility, and how to audit your existing URL structure for quick wins. You'll also explore why URL optimization now extends beyond traditional search into AI-powered platforms like ChatGPT, Claude, and Perplexity. If you want your content discovered everywhere it matters, the URL is where that work begins.

How Google Reads and Interprets Your URLs

Think of a URL as a postal address for a web page. Just as a physical address tells a courier the country, city, street, and building number, a URL tells Google the protocol, domain, directory structure, and specific page it's navigating to. Each component carries meaning, and Googlebot pays attention to all of them.

A complete URL has several distinct parts. The protocol (http:// or https://) signals security status. The domain (yourbrand.com) establishes site identity. The path (/blog/seo-tips) communicates hierarchy and topic. Query parameters (?id=123&ref=email) are typically used for dynamic content or tracking. Fragments (#section-title) point to specific on-page locations but are generally ignored by crawlers. Google uses all of these signals together to understand what it's looking at.
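To make those parts concrete, here is a minimal Python sketch that splits a URL into the components described above using the standard library. The function name `describe_url` and the sample URL are illustrative assumptions, and this shows how a generic parser sees a URL, not how Googlebot processes one internally.

```python
from urllib.parse import urlsplit, parse_qs


def describe_url(url: str) -> dict:
    """Split a URL into the parts discussed above (hypothetical helper)."""
    parts = urlsplit(url)
    return {
        "protocol": parts.scheme,            # http or https
        "domain": parts.netloc,              # site identity
        "path": parts.path,                  # hierarchy and topic
        "parameters": parse_qs(parts.query), # dynamic content or tracking
        "fragment": parts.fragment,          # on-page location
    }


info = describe_url(
    "https://yourbrand.com/blog/seo-tips?id=123&ref=email#section-title"
)
for name, value in info.items():
    print(f"{name}: {value}")
```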

Googlebot doesn't just visit URLs in isolation. It uses them to map your site's structure, understand how pages relate to one another, and infer content relevance. A URL like yoursite.com/services/seo-consulting immediately tells the crawler that "seo-consulting" is a subcategory under "services." That hierarchy helps Google understand the relationship between your pages and assign topical relevance accordingly.

The distinction between static and dynamic URLs is particularly important here. A static URL like yoursite.com/blog/keyword-research-guide is clean, descriptive, and easy for crawlers to process. A dynamic URL like yoursite.com/page?cat=3&id=47&sort=asc is harder to parse and can generate dozens of near-identical URL variations for the same content. This creates crawl inefficiency and can fragment your link equity across multiple URL strings that all point to the same page.

Google's crawlers are sophisticated, but they operate within constraints. Googlebot allocates a certain amount of crawl budget to each site, which is essentially a limit on how many pages it will process in a given timeframe. When your URL structure is clean and logical, crawlers can navigate your site efficiently and index more of your important content. Understanding how often Google crawls a site can help you appreciate why clean URLs matter for maximizing that budget.

Descriptive paths also help Google's natural language processing systems connect your URL to relevant search queries. A slug like /how-to-build-backlinks reinforces the page's topical relevance in a way that /post?id=8821 simply cannot. This is why Google's own documentation consistently recommends using words in URLs rather than arbitrary ID numbers. The URL itself is a ranking signal, albeit a relatively lightweight one. But combined with everything else, it contributes to how confidently Google can classify and surface your content.

Building URLs That Search Engines Actually Love

Good URL structure isn't complicated, but it does require consistency. The goal is to create URLs that are short, descriptive, predictable, and free of unnecessary complexity. When you apply these principles across your entire site, you make it significantly easier for Google to crawl and understand your content at scale.

Start with the slug, the part of the URL after the domain and directory. Your slug should reflect the primary topic of the page using relevant keywords, but it shouldn't be stuffed or overly long. A slug like /seo-url-best-practices is ideal. A slug like /the-ultimate-complete-guide-to-seo-url-best-practices-for-google-2026 is not. Keep it concise, meaningful, and readable by a human at a glance.

Use lowercase letters consistently: URLs are technically case-sensitive on many servers, which means /Blog/SEO-Tips and /blog/seo-tips can be treated as two different pages. Sticking to lowercase everywhere eliminates this risk entirely.

Use hyphens, not underscores: Google treats hyphens as word separators, meaning seo-tips is read as "seo tips." Underscores are not treated the same way, so seo_tips may be read as a single string. This is a small but meaningful distinction for keyword recognition.

Keep your folder structure shallow and logical: Avoid burying pages deep in your hierarchy. A URL like yoursite.com/resources/guides/seo/beginners/url-structure has too many levels. Aim for two to three directory levels at most. Deeper structures can dilute page authority and make it harder for crawlers to prioritize your content.

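Avoid unnecessary parameters: Parameters like session IDs, tracking codes, and sorting filters can create thousands of unique URL variations for the same content. Use canonical tags to consolidate these, and link internally only to the clean version of each URL. Note that Google retired the URL Parameters tool in Search Console in 2022, so parameter handling can no longer be configured there; canonical tags, consistent internal linking, and robots.txt rules for genuine crawl traps now do that job.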
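The slug and folder-depth conventions above can be sketched in a few lines of Python. This is a simplified illustration, not a production slug generator: the function names are hypothetical, and the six-word cap in `max_words` is an arbitrary assumption standing in for "keep it concise."

```python
import re
import unicodedata
from urllib.parse import urlsplit


def slugify(title: str, max_words: int = 6) -> str:
    """Build a lowercase, hyphen-separated slug (max_words is an assumed cap)."""
    # Drop accents so the slug stays plain ASCII.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Keep only runs of letters and digits; underscores and punctuation are dropped.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words])


def directory_depth(url: str) -> int:
    """Count the directory levels that sit above the final slug."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return max(len(segments) - 1, 0)


print(slugify("SEO URL Best_Practices!"))
print(directory_depth(
    "https://yoursite.com/resources/guides/seo/beginners/url-structure"
))
```

A depth check like this is handy in an audit script for flagging URLs that sit more than two or three levels deep.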

Canonicalization deserves special attention. Duplicate URLs are one of the most common and damaging URL issues sites face. The same page can appear at http:// and https://, at www. and non-www, with and without a trailing slash, and with multiple parameter combinations. From Google's perspective, these can look like separate pages with identical content. The fix is to implement a rel=canonical tag on every page pointing to your preferred URL version, and to use 301 redirects to consolidate traffic from non-preferred versions to the canonical one.
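As a rough illustration of what "preferred URL version" means, the sketch below maps common duplicate variations onto a single form. It assumes the preferred version is HTTPS, non-www, without a trailing slash, and that the parameters listed in `TRACKING_PARAMS` never change page content; all of those are assumptions you would adapt to your own site.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Hypothetical list of parameters that never change what the page shows.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "sessionid"}


def preferred_url(url: str) -> str:
    """Map a URL variation to one assumed canonical form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]                      # assumed preference: non-www
    path = parts.path.rstrip("/") or "/"     # assumed preference: no trailing slash
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    # Always HTTPS, and never keep the fragment.
    return urlunsplit(("https", host, path, urlencode(kept), ""))


variants = [
    "http://www.yoursite.com/blog/seo-tips/",
    "https://yoursite.com/blog/seo-tips?ref=email",
    "https://www.yoursite.com/blog/seo-tips",
]
print({preferred_url(v) for v in variants})
```

A function like this only decides which URL is preferred. The 301 redirects and the rel=canonical tag still have to be configured on your server or in your CMS.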

Consistency is the underlying principle across all of these practices. When your URL structure follows predictable patterns, Google can crawl your site more efficiently, understand your content hierarchy more accurately, and index your pages with greater confidence.

Submitting Your URL to Google: Step-by-Step Methods

Structuring your URLs correctly is half the battle. The other half is making sure Google actually knows they exist. While Googlebot will eventually discover most URLs through links and crawling, proactive submission accelerates the process considerably, especially for new pages or recently updated content.

Using Google Search Console's URL Inspection Tool

The most direct method for submitting individual URLs is through Google Search Console. Once you've verified your site, navigate to the URL Inspection tool and enter the specific URL you want Google to index. The tool will show you whether the URL is currently indexed, when it was last crawled, and whether any issues were detected.

If the page isn't indexed yet, you'll see an option to "Request Indexing." This sends a signal to Google to prioritize crawling that URL. It's not a guarantee of immediate indexing, but it significantly speeds up the process compared to waiting for Googlebot to discover the page organically. For a deeper walkthrough, our guide on how to submit a URL to Google covers the full process. This method is best used for high-priority individual pages, such as a new product page, a key landing page, or an updated piece of cornerstone content.

Submitting an XML Sitemap

For larger sites with many pages, submitting an XML sitemap is the most scalable approach. A sitemap is a structured file that lists all the important URLs on your site, along with optional metadata like last modified dates. The format also supports update frequency and priority hints, but Google has said it ignores both, so an accurate last modified date is the field worth maintaining. A sitemap gives Googlebot a complete map of your site's content in a single, easy-to-parse document.

You can submit your sitemap directly in Google Search Console under the "Sitemaps" section. You can also reference your sitemap in your robots.txt file so that any crawler visiting your site can find it automatically. Keep your sitemap updated whenever you publish new content or make structural changes. Many CMS platforms, including WordPress with plugins like Yoast or Rank Math, generate and update sitemaps automatically.

One important note: your sitemap should only include canonical, indexable URLs. Don't include pages with noindex tags, redirect URLs, or parameter-based duplicates. A clean sitemap focused on your best content is more valuable than a bloated one that includes everything.
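If your CMS doesn't generate a sitemap for you, the format is simple enough to produce yourself. The following is a minimal sketch using Python's standard library; `build_sitemap` is a hypothetical helper, and the URLs and dates are placeholder values. It assumes you pass in only canonical, indexable URLs, as recommended above.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Return sitemap XML for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # special characters are escaped
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Placeholder data for illustration only.
print(build_sitemap([
    ("https://yoursite.com/blog/seo-tips", "2025-01-15"),
    ("https://yoursite.com/services/seo-consulting", "2025-02-01"),
]))
```

To make the file discoverable through robots.txt, add a line of the form `Sitemap: https://yoursite.com/sitemap.xml`, with the location adjusted to wherever you host it.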

Using the IndexNow Protocol

IndexNow is a newer protocol that lets you notify search engines the moment a URL is created, updated, or deleted. Rather than waiting for a crawler to revisit your site, you push a signal directly to participating search engines. Bing and Yandex have adopted the protocol, and various CMS platforms and SEO tools have integrated IndexNow support. Google is not among the participating engines at the time of writing, so treat IndexNow as a complement to Search Console and sitemaps rather than a replacement for them.

The practical benefit is speed. With traditional crawling, there can be a lag of days or even weeks between publishing a page and having it indexed. On the engines that support it, IndexNow can shorten that window considerably. For sites that publish frequently or update time-sensitive content, pairing it with the methods for faster Google indexing for new content can have a meaningful impact on how quickly new URLs become visible in search results.
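For a sense of what an IndexNow submission looks like, here is a minimal sketch that builds the JSON request described in the public IndexNow documentation. The host, key, and URL are placeholders, `build_indexnow_request` is a hypothetical helper, and the sketch assumes you have already hosted your key file at the root of your site. The request is only constructed here, not sent.

```python
import json
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> Request:
    """Build (but do not send) an IndexNow bulk submission."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file lives at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    host="yoursite.com",
    key="0123456789abcdef0123456789abcdef",  # placeholder, not a real key
    urls=["https://yoursite.com/blog/seo-tips"],
)
print(request.get_method(), request.full_url)
```

Sending it is a matter of passing the request to `urllib.request.urlopen`. Check the response status in real use, since an invalid key or a URL that doesn't belong to the host will be rejected.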

Sight AI's website indexing tools include IndexNow integration alongside automated sitemap updates, making it straightforward to ensure every new URL you publish gets flagged for discovery as quickly as possible. Combining proactive submission methods with a clean URL structure gives your content the best possible foundation for fast, accurate indexing.

Common URL Mistakes That Block Google From Finding Your Pages

Even well-intentioned site structures can develop URL problems over time. Some of these mistakes are easy to spot; others quietly drain crawl budget and suppress rankings without any obvious warning signs. Knowing what to look for is the first step to fixing it.

Accidental noindex tags: A noindex directive in a page's meta robots tag tells Google explicitly not to index that URL. This is useful for pages you genuinely don't want in search results, like internal admin pages or thank-you pages. But when it's applied accidentally to important pages during a site migration or CMS update, it can remove those pages from Google's index entirely. Always verify that your high-value pages don't have noindex tags applied unintentionally.
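A quick way to catch this after a migration is to scan the HTML of your high-value pages for a robots meta tag. The sketch below is one simplified way to do that with Python's built-in HTML parser; the class and function names are hypothetical. It only inspects meta tags, so a noindex sent through an X-Robots-Tag HTTP header would not be detected.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            content = attr.get("content", "")
            self.directives.update(d.strip().lower() for d in content.split(","))


def has_noindex(html: str) -> bool:
    """Return True if the page's meta robots tag contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```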

Robots.txt blocks: Your robots.txt file controls which URLs Googlebot is allowed to crawl. Overly broad disallow rules can inadvertently block entire sections of your site. A common mistake is blocking the /wp-admin/ directory in WordPress but accidentally also blocking CSS or JavaScript files that Google needs to render your pages correctly. If you're struggling with crawl access, learning how to get Google to crawl your site can help you diagnose and resolve these issues.
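You can test rules like these before they go live. The sketch below uses Python's standard `urllib.robotparser` to check which URLs a set of rules would block. The robots.txt content and URLs are made-up examples, and `blocked_urls` is a hypothetical helper. The standard-library parser is an approximation and may not match Googlebot's rule matching in every case (wildcard patterns, for example), so confirm anything important in Search Console.

```python
from urllib.robotparser import RobotFileParser

# Made-up rules: the second line is the kind of overly broad block described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-content/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs the given rules would stop the user agent from crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


to_check = [
    "https://yoursite.com/blog/seo-tips",
    "https://yoursite.com/wp-content/themes/site/style.css",
    "https://yoursite.com/wp-admin/options.php",
]
for url in blocked_urls(ROBOTS_TXT, to_check):
    print("blocked:", url)
```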

Orphan pages: An orphan page is a URL that exists on your site but has no internal links pointing to it. Since Googlebot primarily discovers pages by following links, orphan pages are often never crawled or indexed. This is a surprisingly common issue, especially on large sites where content gets published without being properly integrated into the site's navigation or internal linking structure.
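Finding orphans comes down to a set comparison: the URLs you know exist (from your sitemap or CMS) minus the URLs that at least one internal link points to. Here is a minimal sketch, assuming you already have both lists from a crawl or export. The function name and sample paths are hypothetical.

```python
def find_orphans(known_urls: list[str], internal_links: dict[str, list[str]]) -> list[str]:
    """Return known URLs that no crawled page links to.

    internal_links maps each crawled page to the internal URLs it links to.
    """
    linked = {target for targets in internal_links.values() for target in targets}
    return sorted(set(known_urls) - linked)


# Sample data for illustration only.
known = ["/", "/blog/seo-tips", "/blog/old-post"]
links = {
    "/": ["/blog/seo-tips"],
    "/blog/seo-tips": ["/"],
}
print(find_orphans(known, links))
```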

Redirect chains and loops: A redirect chain occurs when URL A redirects to URL B, which redirects to URL C, and so on. Each hop in the chain slows down crawling, dilutes link equity, and increases the risk of errors. A redirect loop is even worse: URL A redirects to URL B, which redirects back to URL A. Crawlers will simply abandon the URL. Keep redirects as direct as possible, always pointing to the final destination URL in a single hop.
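If you can export your redirect rules as a simple source-to-target mapping, flattening chains and catching loops is straightforward. The sketch below works on such a mapping in memory and makes no network requests. The function names and paths are hypothetical.

```python
def resolve_redirect(url: str, redirects: dict[str, str]) -> tuple[str, int]:
    """Follow a redirect map to the final URL and count the hops taken."""
    seen = [url]
    while url in redirects:
        url = redirects[url]
        if url in seen:
            raise ValueError("redirect loop: " + " -> ".join(seen + [url]))
        seen.append(url)
    return url, len(seen) - 1


def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Rewrite every rule so it points at its final destination in one hop."""
    return {source: resolve_redirect(source, redirects)[0] for source in redirects}


# Sample chain: /old redirects to /interim, which redirects to /new.
rules = {"/old": "/interim", "/interim": "/new"}
print(resolve_redirect("/old", rules))
print(flatten_redirects(rules))
```

Running the flattened mapping back through `resolve_redirect` should report exactly one hop for every source, which makes a handy regression check after a migration.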

URL parameter bloat: Faceted navigation, session tracking, and A/B testing tools can all generate enormous numbers of unique URL variations. If these aren't properly managed with canonical tags, consistent internal linking, or robots.txt rules for genuine crawl traps, Google can end up crawling thousands of low-value URLs instead of focusing on your core content. This wastes crawl budget and can dilute the authority of your important pages.

URL changes without 301 redirects: Changing a URL without implementing a proper 301 redirect is one of the most damaging mistakes you can make. The original URL loses all its accumulated link equity, and any backlinks pointing to it become dead ends. Google treats the new URL as a brand-new page with no history. If your content is not indexed by Google fast enough, broken redirects may be a contributing factor. Always implement 301 redirects when changing URLs, and update your internal links to point directly to the new URL as well.

Auditing and Fixing Your Existing URLs for Better Visibility

If your site has been live for any length of time, there's a good chance some URL issues have accumulated. A structured audit helps you identify and prioritize the fixes that will have the most impact on crawlability and visibility.

Start with Google Search Console. The Page indexing report (formerly called Index Coverage) shows you which URLs Google has indexed, which it has excluded, and why. Common reasons include "Crawled - currently not indexed," "Excluded by 'noindex' tag," "Redirect error," and "Not found (404)." Each category points to a specific type of problem that needs attention. You can also check your position in Google search to understand how your indexed URLs are actually performing in rankings.

The URL Inspection tool lets you examine individual URLs in detail. Enter any URL on your site and you'll see its indexing status, last crawl date, canonical URL as Google sees it, and any detected issues. This is particularly useful for investigating why a specific high-value page isn't ranking or isn't appearing in search results at all.

For a broader view of your site's URL health, run a site crawl using a dedicated tool. A crawl will surface duplicate URLs, redirect chains, broken internal links, pages with missing canonical tags, and URLs that are linked internally but blocked by robots.txt. Knowing what to look for in SEO tools helps you choose the right crawler for the job. The output gives you a site-wide picture of your URL structure that Search Console alone can't provide.

When prioritizing fixes, think in terms of page value. Start with your highest-traffic pages, your most important conversion pages, and your cornerstone content. These are the URLs where clean structure and proper indexing have the most direct impact on business outcomes. From there, work outward to supporting pages and less critical content.

Consolidate duplicate URL variations: If you find multiple versions of the same page (www vs. non-www, HTTP vs. HTTPS, trailing slash vs. no trailing slash), implement 301 redirects to the canonical version and add a rel=canonical tag to eliminate any ambiguity.

Fix or remove broken URLs: Any URL returning a 404 error that still has internal or external links pointing to it should either be restored or redirected to the most relevant live page. Broken URLs waste crawl budget and create a poor user experience.

Flatten unnecessary redirect chains: Update any redirects that pass through multiple hops to point directly to the final destination URL. This preserves link equity and speeds up crawling.

Regular URL audits, ideally conducted quarterly or after any significant site change, keep these issues from compounding over time.

Beyond Google: Why URL Structure Matters for AI Search Visibility

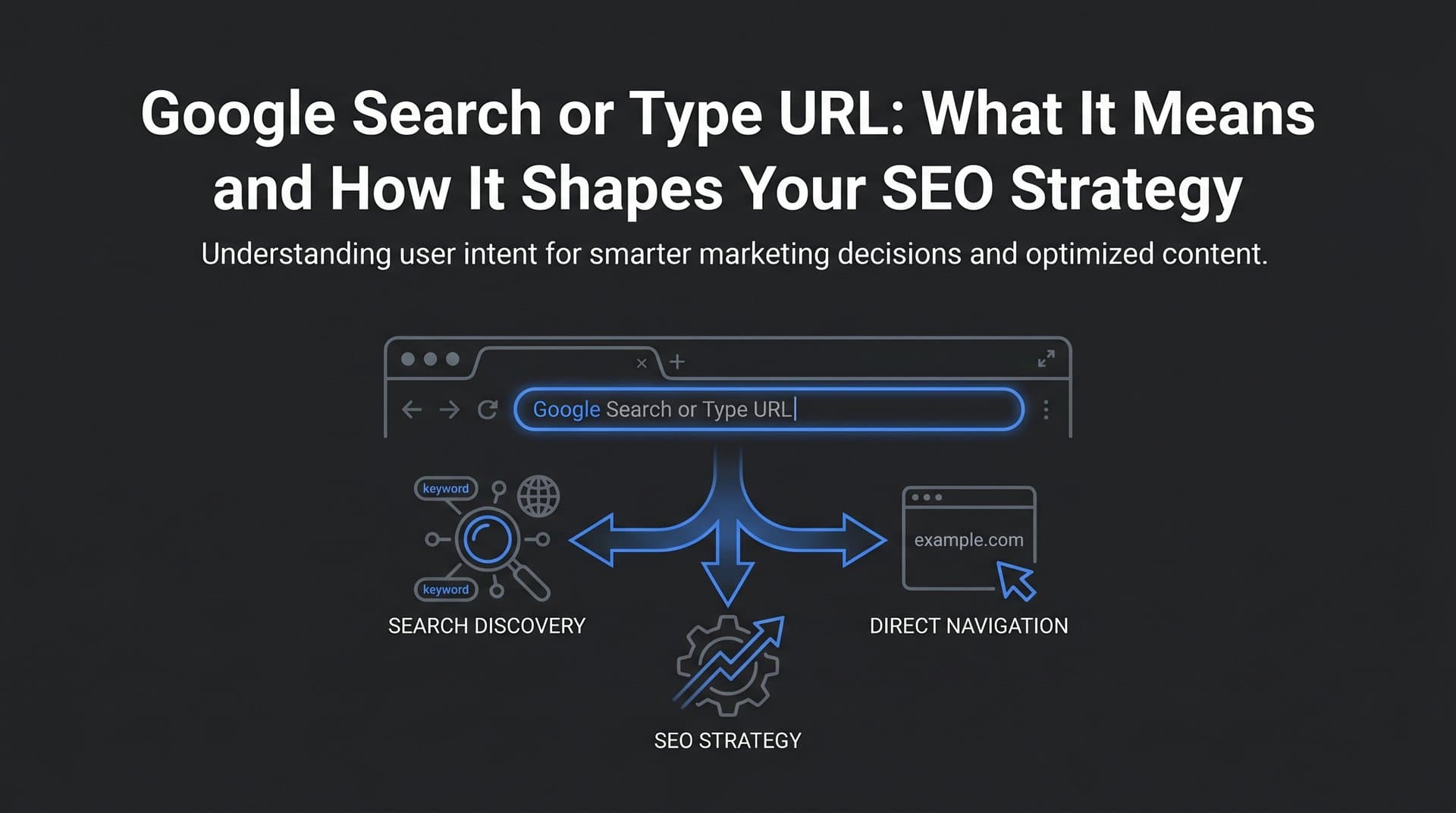

SEO has always been about making your content easy for machines to find and understand. That principle hasn't changed, but the machines have multiplied. AI-powered platforms like ChatGPT, Claude, and Perplexity are increasingly becoming discovery surfaces in their own right, pulling from indexed web content to answer user questions and make recommendations. And the same URL hygiene that helps Google find your content also influences whether AI models can reference and cite it.

When an AI model surfaces information from the web, it's drawing on content that has been crawled, indexed, and deemed credible by search infrastructure. Pages with clean, descriptive URLs are easier to categorize, easier to attribute, and easier to cite accurately. A URL like yoursite.com/guides/generative-engine-optimization is far more citable than yoursite.com/p?id=4421. The former communicates context; the latter communicates nothing.

This is where URL optimization intersects with Generative Engine Optimization (GEO), the practice of structuring your content and technical foundation so that AI models are more likely to surface and recommend your brand. Understanding how to optimize for AI search builds on traditional SEO principles, including URL structure, and extends them to account for how generative AI systems process and reference web content. A well-indexed site with clean URLs and authoritative content is better positioned to appear in AI-generated responses.

Monitoring your AI visibility is increasingly important as these platforms grow in influence. Understanding where your brand URLs are being mentioned across AI platforms, what context they appear in, and how your content is being described gives you actionable intelligence for both your content strategy and your technical SEO work. Tools like Sight AI's AI visibility tracking let you monitor brand mentions across platforms like ChatGPT, Claude, and Perplexity, giving you a clear picture of your presence in AI search alongside traditional search engines.

The bottom line is that URL structure is no longer just a Google concern. It's a foundational element of your entire content discoverability strategy, across every surface where your audience might find you.

Putting It All Together

URLs are the silent infrastructure of your search visibility. They're the first signal Google encounters when it finds a page, the structure that guides crawlers through your site, and the address that AI models use when they reference your content. Getting them right isn't glamorous work, but it pays dividends across every other SEO effort you make.

The core actions are straightforward: structure your URLs to be short, descriptive, and consistent; submit them proactively using Search Console, XML sitemaps, and IndexNow; fix errors like redirect chains, noindex tags, and duplicate variations before they compound; and audit regularly to catch new issues before they become ranking problems.

And as AI search continues to grow in importance, remember that the same clean URL structure that helps Google index your content also makes it easier for generative AI platforms to cite and surface it. Your URL strategy is no longer just about ranking in blue links. It's about being discoverable everywhere your audience is looking.

If you're ready to take that visibility seriously, start tracking your AI visibility today with Sight AI. Monitor exactly where your brand appears across ChatGPT, Claude, Perplexity, and more, uncover content gaps, and use automated indexing tools to ensure every URL you publish gets discovered as fast as possible. Your content deserves to be found. Make sure it is.