You hit publish on your latest article. It's well-researched, perfectly optimized, and addresses a gap in your industry's content landscape. Then you wait. And wait. Days turn into weeks, and your content sits in digital limbo—invisible to search engines, inaccessible to your audience, and generating exactly zero traffic. The frustration isn't just about wasted effort. It's about missed opportunities while your competitors' content gets discovered first.

This is the discovery gap, and it's costing you more than you realize. Every hour your content remains unindexed is an hour your competitors own the conversation. Every day of delayed discovery is a day of lost organic traffic, missed conversions, and diminished competitive advantage.

The solution isn't publishing more content or optimizing harder. It's eliminating the bottleneck between publication and discovery through automated indexing. Modern indexing protocols can shrink your time-to-discovery from weeks to hours, from days to minutes. This guide will show you exactly how to implement automated indexing workflows that ensure your content gets discovered the moment it goes live, giving you the competitive edge that comes from being first, being fast, and being consistently visible across traditional search and emerging AI platforms.

The Discovery Gap: Why Fresh Content Gets Lost

Search engines don't magically know when you publish new content. They rely on crawlers—automated bots that systematically visit websites, following links and discovering pages. But here's the problem: crawlers work on schedules, not on your publishing timeline. Google's crawler might visit your homepage daily, but that new blog post buried three clicks deep? It could wait weeks for discovery.

This passive discovery model creates a queue system where your content competes for crawler attention. High-authority sites with frequent updates get crawled more often. Newer sites or those with infrequent publishing schedules get crawled less. Your brilliant article about an emerging industry trend might finally get indexed three weeks later—long after the conversation has moved on and your competitors have claimed the top rankings.

The business impact hits hardest in time-sensitive scenarios. Product launches need immediate visibility. News content becomes worthless if it's indexed days after publication. Seasonal campaigns miss their window. Competitive keyword opportunities slip away because your content wasn't discoverable when it mattered most. Understanding why you're experiencing slow Google indexing for new content is the first step toward solving this problem.

Traditional submission methods offered partial solutions. You could manually submit URLs through Google Search Console or ping your sitemap after updates. These approaches work, but they're reactive, manual, and inconsistent. They require someone to remember to submit each new page, check that the submission worked, and monitor indexing status. At scale, this becomes impossible to maintain.

The gap between publication and discovery isn't just a technical inconvenience. It's a strategic vulnerability. While your content waits to be found, competitors are already ranking, AI search tools are already citing other sources, and your potential audience is already getting their questions answered elsewhere. Modern automated indexing protocols were built specifically to close this gap, transforming the passive wait-and-hope approach into an active, immediate notification system.

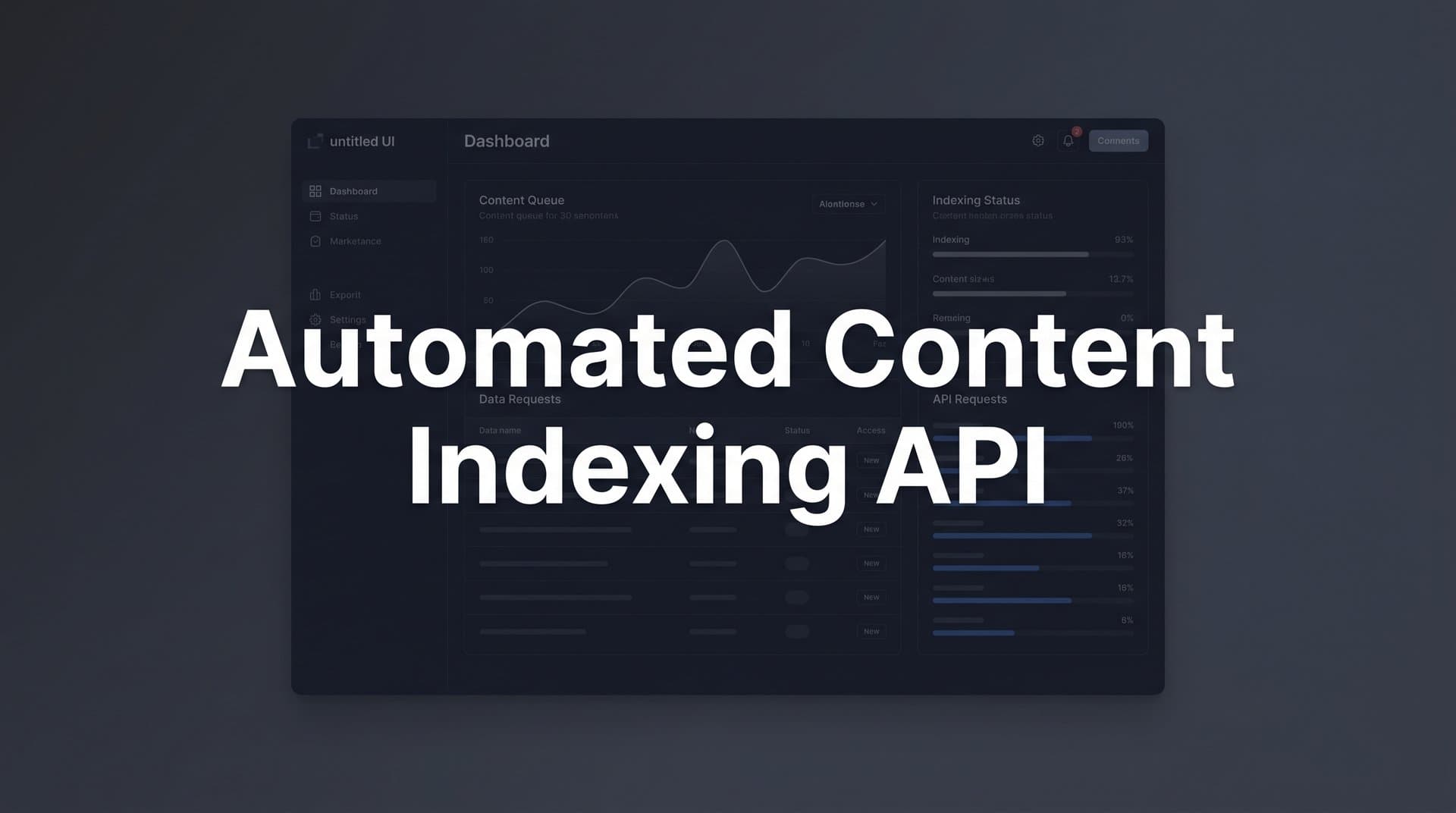

IndexNow and Modern Indexing Protocols Explained

IndexNow represents a fundamental shift in how search engines discover content. Instead of waiting for crawlers to find your pages, IndexNow lets you notify search engines the moment content goes live. Think of it as the difference between leaving a voicemail and calling someone directly—one requires them to check their messages eventually, the other gets immediate attention.

The protocol works through a simple HTTP call. When you publish or update a page, your system sends a notification to participating search engines with the URL. These engines receive the notification immediately and can prioritize crawling that specific page, which can substantially reduce time-to-index. The technical implementation is straightforward: a single GET request carrying the URL and your site's key, or one POST request with a JSON body when you want to submit several URLs at once.
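As a rough sketch of what that notification can look like, the snippet below builds (but does not send) the JSON POST form of an IndexNow request using only the Python standard library. The shared endpoint and the `host`, `key`, `keyLocation`, and `urlList` fields follow the public IndexNow documentation and should be checked against the current version; `build_indexnow_request`, the example domain, and the key are hypothetical placeholders.

```python
import json
from typing import List
from urllib.parse import urlsplit
from urllib.request import Request

# Shared endpoint from the IndexNow documentation (assumption: verify before use).
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(urls: List[str], key: str) -> Request:
    """Build a POST request announcing new or updated URLs on one host."""
    host = urlsplit(urls[0]).netloc
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


req = build_indexnow_request(["https://example.com/blog/new-post"], "0123456789abcdef")
# urllib.request.urlopen(req) would send it; left out so the sketch runs offline.
```

Building the request separately from sending it keeps the payload easy to inspect and test before anything touches the network.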

IndexNow launched in 2021, introduced by Microsoft Bing together with Yandex, and other search platforms have joined since. The protocol gained traction because it benefits both publishers and search engines. Publishers get faster indexing, while search engines reduce unnecessary crawling of unchanged pages, saving computational resources. It's a win-win that's reshaping the indexing landscape.

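Google takes a different approach. It hasn't adopted IndexNow. Its Indexing API does accept programmatic URL notifications, but Google documents it only for pages carrying job posting or livestream (BroadcastEvent) structured data. The URL Inspection API in Search Console reports a URL's index status rather than submitting it, and the "Request Indexing" button is manual and quota-limited. Google also retired its sitemap ping endpoint in 2023, so the main channel for everything else is a sitemap with accurate lastmod values, submitted through Search Console or referenced in robots.txt. These mechanisms serve similar purposes to IndexNow, though they operate within Google's existing infrastructure rather than adopting a new protocol. Learning techniques for faster Google indexing for new content can complement your IndexNow implementation.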

The technical requirements for implementing IndexNow are minimal. You need to generate a key and host it as a simple text file in your site's root directory (this is how engines verify that you control the domain), implement code that triggers API calls when content publishes, and ensure your server can make HTTPS requests to IndexNow endpoints. Most modern CMS platforms can handle these requirements with plugins or custom integrations.
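The key setup can be sketched in a few lines. This example assumes the convention described in the IndexNow documentation, where the key is a short string of hexadecimal characters and the hosted file is named after the key and contains it; `create_indexnow_key_file` and the web root path are hypothetical.

```python
import secrets
from pathlib import Path


def create_indexnow_key_file(web_root: Path) -> str:
    """Generate a key, write <key>.txt into the web root, and return the key."""
    key = secrets.token_hex(16)  # 32 hexadecimal characters
    key_file = web_root / f"{key}.txt"
    key_file.write_text(key, encoding="utf-8")  # the file body is the key itself
    return key


# Example (hypothetical path): key = create_indexnow_key_file(Path("/var/www/html"))
```

Once deployed, the file should be reachable at your domain's root, for example `https://example.com/<key>.txt`.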

What makes IndexNow particularly powerful is its network effect. When you submit a URL to one participating search engine, they share that notification with other protocol members. Submit to Bing, and Yandex learns about your new content too. This amplification effect means a single API call can notify multiple search engines simultaneously, maximizing your content's discoverability across platforms.

The protocol also includes built-in protections against abuse. Rate limits prevent excessive submissions, and search engines validate that you control the domain before accepting notifications. These safeguards ensure the system remains effective while preventing spam or manipulation attempts.
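These protections surface as HTTP status codes on the submission response. The mapping below is a hedged summary based on the response codes listed in the public IndexNow documentation; treat the wording as a paraphrase and confirm the list against the current documentation. `explain_indexnow_status` is a hypothetical helper.

```python
# Paraphrased from the IndexNow documentation (assumption: verify before relying on it).
INDEXNOW_STATUS_MEANINGS = {
    200: "ok: URL submitted successfully",
    202: "accepted: URL received, key validation still pending",
    400: "bad request: the request format is invalid",
    403: "forbidden: key not valid (key file missing or key mismatch)",
    422: "unprocessable: URLs do not belong to the host, or key does not match",
    429: "too many requests: submissions are being rate limited",
}


def explain_indexnow_status(status: int) -> str:
    """Translate a response status into a short, log-friendly explanation."""
    return INDEXNOW_STATUS_MEANINGS.get(
        status, f"unexpected status {status}: check the engine's documentation"
    )


print(explain_indexnow_status(403))
```

Logging these explanations alongside the submitted URL makes it easier to tell a setup mistake (403, 422) from throttling (429).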

Building Your Automated Indexing Workflow

Automation starts with the trigger—the event that initiates your indexing request. In most content workflows, this trigger is the publish action in your CMS. When an editor clicks "Publish" or when scheduled content goes live, that event should automatically fire an API call to your indexing endpoints. This ensures zero delay between publication and notification.

For WordPress sites, plugins can handle this integration seamlessly. They hook into the publish event, capture the new post URL, and send IndexNow notifications without any manual intervention. For custom CMS platforms or headless setups, you'll need to build this trigger into your publishing pipeline—typically a few lines of code in your content creation API that fires whenever a new page or post is created.
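For a custom or headless CMS, the trigger can be as small as the sketch below. Everything here is hypothetical: the `Post` shape, the `on_post_saved` hook name, and the `notify` callback, which stands in for whatever function actually sends your indexing request.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Post:
    slug: str
    status: str  # e.g. "draft" or "published"


def on_post_saved(post: Post, base_url: str, notify: Callable[[str], None]) -> bool:
    """Call notify(url) when a saved post is live; return True if it fired."""
    if post.status != "published":
        return False  # drafts are not publicly reachable, so skip them
    notify(f"{base_url.rstrip('/')}/{post.slug.lstrip('/')}")
    return True


# Usage: collect URLs in a list instead of calling a real indexing API.
submitted: List[str] = []
on_post_saved(Post("blog/new-post", "published"), "https://example.com/", submitted.append)
on_post_saved(Post("blog/unfinished", "draft"), "https://example.com/", submitted.append)
print(submitted)  # ['https://example.com/blog/new-post']
```

Because the hook runs on every save of a published post, the same code path covers both first publication and later revisions.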

Content updates deserve the same treatment as new publications. When you revise an article, update product information, or refresh outdated content, those changes should trigger fresh indexing requests. Search engines need to know the page has changed so they can recrawl and update their index with your latest content. Building update triggers into your workflow ensures your indexed content always reflects your current site state.

Bulk operations require a different approach. If you're launching a new section with dozens of pages or performing site-wide content updates, sending individual API calls for every URL could trigger rate limits. Instead, group the URLs into batched submissions (IndexNow accepts a list of URLs in a single POST) with appropriate delays between batches, or lean on sitemap updates that signal multiple changes at once. Many platforms automatically regenerate sitemaps when content changes; keep each entry's lastmod value accurate so search engines can spot what changed on their next sitemap fetch.
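One way to batch submissions is sketched below. The 10,000-URL cap reflects the per-request limit stated in the IndexNow documentation, but check the current figure; `send` is a hypothetical callback that submits one batch, and the pause length is an arbitrary example value.

```python
import time
from typing import Callable, Iterator, List

MAX_URLS_PER_REQUEST = 10_000  # assumed per-request cap; confirm in the IndexNow docs


def chunk_urls(urls: List[str], size: int = MAX_URLS_PER_REQUEST) -> Iterator[List[str]]:
    """Yield consecutive slices of at most `size` URLs."""
    for start in range(0, len(urls), size):
        yield urls[start:start + size]


def submit_in_batches(
    urls: List[str],
    send: Callable[[List[str]], None],
    size: int = MAX_URLS_PER_REQUEST,
    pause_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Send each batch, pausing between batches; return the number of batches."""
    batches = list(chunk_urls(urls, size))
    for index, batch in enumerate(batches):
        send(batch)
        if index < len(batches) - 1:  # no pause needed after the last batch
            sleep(pause_seconds)
    return len(batches)
```

Passing `sleep` as a parameter keeps the function easy to test without real delays.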

Sitemap synchronization forms the backbone of comprehensive indexing automation. Your sitemap should update automatically whenever content publishes, and search engines should be notified of these updates immediately. This creates a reliable secondary notification channel—if an individual API call fails, the sitemap update ensures search engines eventually discover the new content during their next sitemap check.
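A minimal sketch of regenerating a sitemap on publish, using only the standard library. The `pages` mapping (URL to last-modified time) is an assumed input that your CMS would supply; the namespace is the standard sitemaps.org one.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from typing import Dict

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: Dict[str, datetime]) -> str:
    """Render a sitemap with one <url> entry (loc + lastmod) per page."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, modified in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # Timezone-aware datetimes are expected; lastmod is written in UTC.
        stamp = modified.astimezone(timezone.utc).isoformat(timespec="seconds")
        ET.SubElement(url, "lastmod").text = stamp
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


xml_text = build_sitemap(
    {"https://example.com/a": datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)}
)
print(xml_text)
```

Calling this from the same publish hook that fires the API notification keeps the two channels in step.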

Error handling matters more than most implementations consider. API calls can fail due to network issues, rate limits, or service outages. Your automation should include retry logic that attempts failed submissions again after a delay, logging for troubleshooting, and fallback mechanisms that ensure critical content gets submitted even if primary methods fail.
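The retry-and-log behavior described above could look something like this. It is a sketch under assumptions: `send` is a hypothetical callable that performs one submission and returns the HTTP status code, and the set of retryable statuses and the backoff schedule are example choices, not protocol requirements.

```python
import logging
import time
from typing import Callable

log = logging.getLogger("indexing")
RETRYABLE_STATUSES = {429, 500, 502, 503, 504}  # example choice


def submit_with_retry(
    send: Callable[[], int],
    attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Retry a submission with exponential backoff; return True on success."""
    for attempt in range(attempts):
        try:
            status = send()
        except OSError as exc:  # network failures; urllib's URLError is an OSError
            log.warning("attempt %d failed: %s", attempt + 1, exc)
            status = None
        if status in (200, 202):
            return True
        if status is not None and status not in RETRYABLE_STATUSES:
            log.error("giving up, non-retryable status %s", status)
            return False
        if attempt < attempts - 1:
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    log.error("giving up after %d attempts", attempts)
    return False
```

When this returns False, a fallback such as queuing the URL for the next scheduled run keeps critical content from being dropped silently.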

Integration with your broader content pipeline creates powerful synergies. Connect your indexing automation to your content calendar so seasonal content gets indexed exactly when it goes live. Link it to your A/B testing tools so new variants get discovered immediately. Integrate with your analytics platform to track the relationship between indexing speed and traffic acquisition. An automated content publishing platform can streamline these integrations significantly.

Measuring Indexing Performance and Troubleshooting

Time-to-index is your north star metric—the duration between publication and confirmed indexing. Track this for every piece of content to understand your baseline performance and identify improvements as you optimize your automation. Before implementing automated indexing, you might see average times of 5-7 days. After automation, this should drop to hours or even minutes for most content.
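To make the metric concrete, here is a small sketch that computes a median time-to-index from publication and first-seen-indexed timestamps. The timestamps are made-up sample values, and how you detect "indexed" (for example, a status check per URL) is left as an assumption. The median is used instead of the mean so one slow outlier doesn't dominate.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Iterable, Tuple


def median_time_to_index(records: Iterable[Tuple[datetime, datetime]]) -> timedelta:
    """records holds (published_at, first_seen_indexed_at) pairs; must be non-empty."""
    delays = [(indexed - published).total_seconds() for published, indexed in records]
    return timedelta(seconds=median(delays))


sample = [
    (datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 11, 0)),  # 2 hours
    (datetime(2024, 5, 2, 9, 0), datetime(2024, 5, 2, 15, 0)),  # 6 hours
    (datetime(2024, 5, 3, 9, 0), datetime(2024, 5, 6, 9, 0)),   # 3 days
]
print(median_time_to_index(sample))  # 6:00:00
```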

Crawl frequency indicates how often search engines visit your site. Higher crawl rates generally correlate with faster indexing, but they're also a sign of site authority and content freshness in search engines' eyes. Monitor crawl frequency through Search Console to understand whether your automation is improving your site's overall crawl priority beyond just the pages you submit.

Indexation rate measures the percentage of your submitted URLs that successfully make it into search indexes. A healthy site should see indexation rates above 90% for quality content. Lower rates signal problems—either with your content quality, technical accessibility, or submission process. Track this metric separately for different content types to identify which sections of your site face indexing challenges.
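Tracking the rate per content type is a short calculation. In this sketch the input is an assumed list of `(content_type, is_indexed)` pairs gathered from your own monitoring; the content type names are examples.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def indexation_rate_by_type(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Return the percentage of submitted URLs that are indexed, per content type."""
    submitted: Dict[str, int] = defaultdict(int)
    indexed: Dict[str, int] = defaultdict(int)
    for content_type, is_indexed in results:
        submitted[content_type] += 1
        indexed[content_type] += int(is_indexed)
    return {t: round(100 * indexed[t] / submitted[t], 1) for t in submitted}


rates = indexation_rate_by_type([
    ("blog", True), ("blog", True), ("blog", True), ("blog", False),
    ("product", True),
])
print(rates)  # {'blog': 75.0, 'product': 100.0}
```

A content type that sits well below the others is usually the place to start looking for quality or accessibility problems.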

Common indexing failures usually stem from crawlability issues. Search engines can't index what they can't access. Pages blocked by robots.txt, protected by authentication requirements, or buried behind JavaScript rendering problems will fail indexing regardless of how quickly you submit them. Use crawling tools to verify pages are accessible before celebrating fast submission times.
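A quick pre-submission check for the robots.txt case can be sketched with Python's standard `urllib.robotparser`. The function takes the robots.txt text directly so it runs offline; the `bingbot` user agent and the sample rules are placeholders. This only covers robots.txt rules, not authentication or rendering problems.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "bingbot") -> bool:
    """Return True if the given robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


rules = "User-agent: *\nDisallow: /private/\n"
print(is_crawlable(rules, "https://example.com/blog/new-post"))   # True
print(is_crawlable(rules, "https://example.com/private/report"))  # False
```

Skipping submission for URLs that fail this check avoids spending your submission quota on pages crawlers will refuse to fetch.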

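Duplicate content creates another frequent failure point. If search engines determine your new page duplicates existing content, whether on your site or elsewhere, they may choose not to index it. In Search Console this typically shows up as submitted URLs stuck under a duplicate-related status such as "Duplicate without user-selected canonical," or under "Crawled - currently not indexed." ("Discovered - currently not indexed" means something different: Google knows the URL exists but hasn't crawled it yet.) Canonical tags and unique content solve most duplication issues.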

Server errors during crawler visits sabotage even perfect automation. If your server returns 500 errors or times out when search engines attempt to crawl submitted URLs, indexing fails despite successful API notifications. Monitor server logs around submission times to catch these issues, and ensure your hosting infrastructure can handle increased crawler attention that comes with automated submissions.

Google Search Console provides the most comprehensive indexing data for Google's index. The Page indexing report (formerly called Coverage) shows which URLs are indexed and why the others are not. The URL Inspection tool lets you check individual pages and request indexing manually when troubleshooting. Keep Search Console's email notifications for indexing issues switched on so you catch problems immediately rather than discovering them weeks later.

Third-party indexing monitoring tools offer cross-platform visibility. Services that check indexing status across multiple search engines help you understand whether issues are platform-specific or site-wide. Exploring automated content indexing tools can provide the monitoring capabilities you need. They can also track indexing speed more granularly than native search engine tools, providing detailed timelines from submission to confirmed indexing.

Automated Indexing in an AI-Driven Search Landscape

AI search tools like Perplexity, ChatGPT's web browsing mode, and Claude's search capabilities lean heavily on indexed web content. Rather than crawling the whole web from scratch for each question, they typically retrieve pages through a search index, whether a partner search engine's or their own. Content that isn't indexed is therefore far less likely to surface in AI search tools, no matter how valuable or well-optimized it might be.

This creates a direct connection between your indexing speed and AI visibility. When you publish content about emerging topics or breaking industry news, the first indexed sources often become the primary references AI tools cite. Faster indexing means your content enters the AI-accessible knowledge base before competitors, increasing the likelihood that AI systems will reference your insights when users ask related questions.

The relationship between indexed content and AI model training adds another layer of importance. While major AI models aren't retrained daily, the web content they access through search integration updates constantly. Fresh, quickly-indexed content influences AI responses in real-time through retrieval-augmented generation, where models pull current information from search results to supplement their training data.

AI search tools increasingly prioritize recency in their citations. When multiple sources cover similar topics, these systems often favor more recently published and indexed content, assuming it contains the latest information. Automated indexing ensures your content competes on recency, not just quality, giving you an edge in time-sensitive topic areas where being current matters as much as being comprehensive.

The convergence of traditional SEO and AI visibility makes indexing automation doubly valuable. You're not just optimizing for Google rankings anymore—you're ensuring discoverability across an entire ecosystem of AI-powered search and research tools. Content that gets indexed quickly appears in traditional search results faster and becomes available to AI systems sooner, multiplying your visibility across both channels.

Future-proofing your strategy means recognizing that search is evolving beyond traditional engines. As users increasingly turn to AI assistants for information, the distinction between "search engine optimization" and "AI visibility optimization" blurs. Automated indexing serves both goals simultaneously, ensuring your content is discoverable regardless of how users choose to find information.

The technical foundation you build for automated indexing today will adapt as new platforms emerge. Whether the next major discovery channel is another search engine, a new AI system, or something entirely different, the core principle remains: immediate notification beats passive waiting. Systems built around proactive content discovery will outperform reactive approaches regardless of how the search landscape evolves.

Putting Your Indexing Strategy Into Action

Start with a technical audit of your current setup. Can your CMS trigger events when content publishes? Do you have API access to submit URLs programmatically? Is your sitemap automatically generated and up-to-date? These foundational elements must work correctly before automation can succeed. Fix any gaps in your technical infrastructure first.

Prioritize content types based on time-sensitivity and competitive value. News articles, product launches, and trend-focused content benefit most from instant indexing. Publishers with time-sensitive content should explore indexing strategies built specifically for news sites. Evergreen reference content matters less: if a comprehensive guide gets indexed in three days instead of three hours, the impact is minimal. Focus your automation efforts where speed creates the greatest advantage.

Implement IndexNow for Microsoft Bing and partner engines first. The protocol is straightforward to set up, well-documented, and immediately functional. Generate your API key, add the verification file to your site, and integrate the API call into your publishing workflow. This gives you quick wins and validates your automation approach before tackling more complex integrations.

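Configure your Google-side signals next. Google hasn't adopted IndexNow, so rely on the mechanisms it does support: an automatically updated sitemap with accurate lastmod values, submitted in Search Console and referenced in robots.txt, plus the Indexing API if your pages fall within the content types Google documents for it. Use the URL Inspection API to verify index status programmatically rather than to submit URLs. Automating these gives you coverage across the major search engines, maximizing your content's discoverability regardless of which platforms your audience uses.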

Set up monitoring dashboards that track your key metrics. Time-to-index, submission success rates, and indexation percentages should all be visible at a glance. These dashboards help you identify problems quickly, measure the impact of your automation, and demonstrate ROI to stakeholders who need to understand why indexing speed matters. Leveraging automated indexing features for marketers can simplify this process considerably.

Connect your indexing automation to broader content workflows. If you use content calendars, ensure scheduled content triggers indexing requests when it goes live. If you run A/B tests, make sure new variants get submitted immediately. If you update content regularly, build update triggers that notify search engines of changes. These integrations ensure consistent, comprehensive automation without manual intervention.

Document your processes and train your team. Content creators should understand that publishing triggers automatic indexing, so they can time releases strategically. Technical teams need to know how the system works for troubleshooting. Marketing leaders should grasp the competitive advantage to justify continued investment in automation infrastructure. Clear documentation ensures your indexing strategy survives team changes and organizational shifts.

Taking Control of Your Content Discovery

Automated indexing removes the most frustrating bottleneck in content marketing—the gap between creation and discovery. You've invested time, expertise, and resources into producing valuable content. Leaving its discovery to chance wastes that investment and hands competitive advantages to faster-moving rivals.

The technology exists today to eliminate this bottleneck completely. IndexNow, URL submission APIs, and automated sitemap updates work reliably across major search engines. Implementation requires some technical setup, but the payoff—measured in hours saved, traffic gained, and opportunities captured—justifies the effort many times over. Evaluating the best automated content indexing software can help you choose the right solution for your needs.

In a landscape where AI search tools increasingly shape how users discover information, being indexed isn't just about traditional SEO anymore. It's about existing in the knowledge bases that power conversational AI, appearing in the sources that AI models cite, and ensuring your expertise reaches audiences regardless of how they choose to search. Fast indexing serves both traditional search visibility and emerging AI discovery channels simultaneously.

The competitive advantage goes to publishers who move fastest. When breaking news hits your industry, when product launches create new keyword opportunities, when seasonal trends emerge, the first indexed content often captures disproportionate visibility. Automation helps you be first consistently, turning speed from an occasional advantage into a systematic competitive edge.

Start by auditing your current indexing performance. How long does new content typically wait before appearing in search results? Which content types face the longest delays? Where are your biggest opportunities for improvement? These insights reveal where automated indexing will create the most value for your specific content strategy.

Then implement the technical foundations: API integrations, CMS triggers, sitemap automation, and monitoring dashboards. These components work together to create a system that operates without manual intervention, ensuring every piece of content gets discovered as quickly as possible without ongoing effort from your team.

But indexing is just one piece of a larger visibility puzzle. Understanding how your content performs after indexing matters just as much as getting it discovered quickly. Start tracking your AI visibility today to see exactly how AI platforms like ChatGPT, Claude, and Perplexity reference your brand, identify content gaps where competitors are getting cited instead of you, and optimize your entire content strategy around both traditional search rankings and emerging AI visibility metrics. The future of content discovery spans multiple channels—make sure you're visible across all of them.