When a potential customer asks ChatGPT to recommend the best project management tools for remote teams, does your brand make the list? When someone queries Claude about email marketing platforms with the best deliverability, does your product get mentioned? Right now, millions of buying decisions are happening through conversations with AI models—and most brands have absolutely no idea what these systems are saying about them.

This isn't a future scenario. It's happening today. Users have shifted from typing queries into Google to having conversations with ChatGPT, Perplexity, and Claude. They're asking for recommendations, comparisons, and advice—and these AI models are responding with specific brand names and product suggestions. The catch? Unlike traditional search where you can see your rankings, track your competitors, and measure your visibility, AI responses are a black box.

AI model monitoring for marketing solves this critical blind spot. It's the systematic practice of tracking, analyzing, and optimizing how AI systems represent your brand across conversational platforms. Think of it as the next evolution of SEO—except instead of monitoring where you rank on a search results page, you're tracking whether you're being recommended in the conversations that matter. The brands that master this now will own the next decade of organic discovery. The ones that ignore it will watch opportunities vanish into competitors' pipelines without ever knowing why.

The New Discovery Layer: Why AI Responses Shape Buying Decisions

Search behavior has fundamentally changed, and the shift happened faster than most marketers realized. Users no longer want to click through ten blue links and piece together information themselves. They want direct answers, personalized recommendations, and expert guidance—all delivered conversationally. AI-powered platforms like ChatGPT, Perplexity, and Claude provide exactly that.

Here's what this looks like in practice: A marketing director researching analytics platforms opens ChatGPT and asks, "What's the best analytics tool for tracking user behavior across mobile and web?" The AI responds with three specific recommendations, complete with feature comparisons and use case scenarios. The director never opens Google. Never visits a comparison site. Never sees your carefully optimized landing pages. The buying journey begins and often ends within that AI conversation.

This represents a fundamental departure from traditional SEO dynamics. In the old model, success meant ranking on page one for relevant keywords. Users would see your listing, click through, and evaluate your offering. You had multiple chances to capture attention—through meta descriptions, title tags, featured snippets, and organic rankings. Even if you ranked fifth, you still had visibility.

AI visibility works differently. When an AI model responds to a query, it synthesizes information from multiple sources and presents a curated answer. If your brand makes that response, you're in the consideration set. If you don't, you're invisible. There's no page two. There's no "scroll down to see more options." You're either part of the conversation or you're not.

The business implications are stark. Every time an AI model recommends a competitor but not you, a potential customer forms their consideration set without ever knowing you exist. They're not choosing your competitor over you—they're simply unaware you're an option. This is opportunity loss at scale, happening in thousands of conversations daily across multiple AI platforms.

What makes this particularly challenging is that AI recommendations feel authoritative. When ChatGPT suggests three email marketing platforms, users perceive that as expert guidance, not paid placement. The trust factor is higher than traditional search results, where users understand that ads and SEO tactics influence rankings. AI responses feel like unbiased recommendations, which makes inclusion even more valuable—and exclusion even more costly.

Breaking Down AI Model Monitoring: Core Components and Metrics

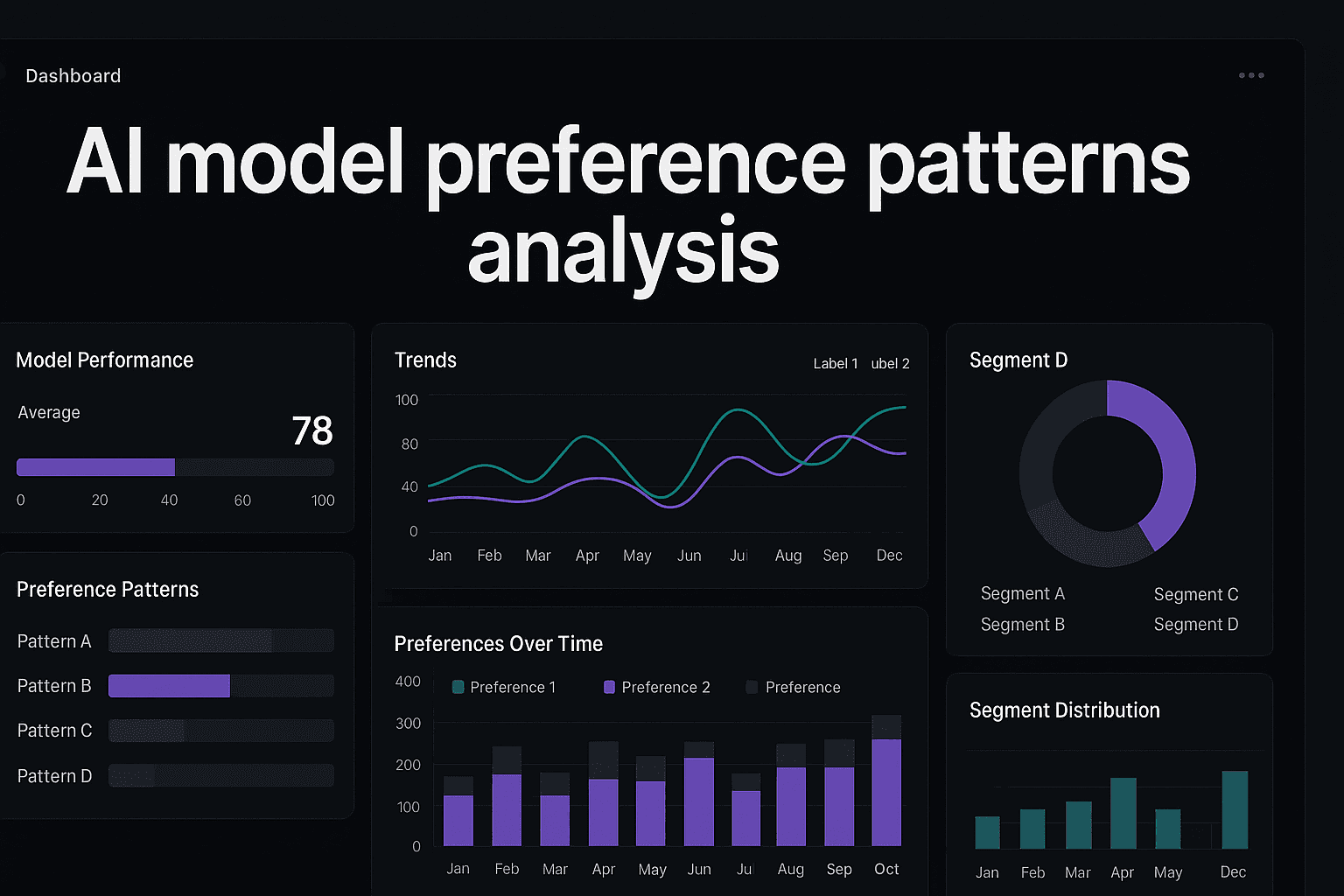

Understanding what AI model monitoring actually measures is essential before you can optimize for it. The discipline rests on three foundational pillars, each providing different insights into your AI visibility.

Brand Mention Tracking: This is the baseline metric—are you being mentioned at all? Monitoring systems track specific prompts across multiple AI platforms and record whether your brand appears in responses. This isn't just about vanity metrics. Mention frequency across relevant queries indicates whether you're part of the consideration set for your category. If competitors appear in 70% of product recommendation queries while you appear in 15%, you have a visibility gap that's costing you opportunities. A dedicated AI model brand monitoring tool can automate this tracking across platforms.
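As a rough illustration of the mention-frequency idea above, here is a minimal sketch that computes the share of collected responses naming each brand. The brand names, the sample responses, and the `mention_rates` helper are all hypothetical. How the responses are collected from each AI platform is out of scope here.

```python
import re
from collections import Counter

def mention_rates(responses, brands):
    """Return the share of responses that mention each brand at least once."""
    counts = Counter()
    for text in responses:
        for brand in brands:
            # Whole-word, case-insensitive match, so "Acme" does not match "Acmeology".
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}

# Hypothetical responses gathered for one category prompt.
responses = [
    "For remote teams, consider Acme, Globex, or Initech.",
    "Globex is a strong choice for distributed teams.",
    "Many teams use Globex or Initech.",
    "Acme and Globex both offer free tiers.",
]
rates = mention_rates(responses, ["Acme", "Globex", "Initech"])
print(rates)
```

Comparing these rates between your brand and competitors across the same prompts is what surfaces a visibility gap.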

Sentiment Analysis: Being mentioned matters, but how you're being mentioned matters more. AI models don't just name-drop brands—they characterize them. "Company X offers robust features but has a steep learning curve" carries very different implications than "Company X provides intuitive tools perfect for beginners." AI sentiment analysis for brand monitoring categorizes mentions as positive, neutral, or negative, and tracks the specific attributes AI models associate with your brand. This reveals not just whether you're visible, but whether that visibility is helping or hurting.

Prompt-Response Mapping: This component tracks which user queries trigger brand mentions. You might discover that your brand appears frequently in queries about "enterprise solutions" but never in queries about "affordable options for startups." This mapping reveals your positioning in AI model knowledge—where you're strong, where you're absent, and where competitors own territory you should contest. It's the foundation for strategic content decisions.
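One way to picture prompt-response mapping is to group responses by prompt category and compute a mention rate per group. The sketch below assumes each record is a hypothetical `(category, response)` pair, and the category labels are invented for illustration.

```python
from collections import defaultdict

def mentions_by_category(records, brand):
    """Map each prompt category to the fraction of its responses naming the brand."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for category, response in records:
        totals[category] += 1
        # Simple case-insensitive substring check; a real system would need
        # stricter matching to avoid false positives.
        if brand.lower() in response.lower():
            hits[category] += 1
    return {category: hits[category] / totals[category] for category in totals}

# Hypothetical data: strong in enterprise prompts, absent in budget prompts.
records = [
    ("enterprise", "Acme and Globex lead for large organizations."),
    ("enterprise", "Acme is a common enterprise pick."),
    ("startup-budget", "Initech is the most affordable option."),
    ("startup-budget", "Try Initech or Globex on a tight budget."),
]
coverage = mentions_by_category(records, "Acme")
print(coverage)
```

Categories with a rate near zero are the territory a competitor currently owns.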

These three pillars combine into what's known as an AI Visibility Score—a quantifiable metric that benchmarks your overall presence across AI platforms. While specific calculation methods vary, the score typically factors in mention frequency, sentiment quality, and breadth of relevant prompts where you appear. A score of 45 might indicate you're mentioned in 45% of relevant category queries with predominantly positive sentiment.
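Because calculation methods vary, the following is only one possible way to combine the three inputs into a single number. The weights (0.5, 0.3, 0.2) and the `visibility_score` function are assumptions made for illustration, not a standard formula or any vendor's method.

```python
def visibility_score(mention_rate, positive_share, prompt_breadth,
                     weights=(0.5, 0.3, 0.2)):
    """Combine three 0-1 inputs into a 0-100 score using hypothetical weights."""
    inputs = (mention_rate, positive_share, prompt_breadth)
    if any(not 0.0 <= value <= 1.0 for value in inputs):
        raise ValueError("all inputs must be between 0 and 1")
    # Weighted average of the inputs, normalized by the total weight.
    weighted = sum(w * v for w, v in zip(weights, inputs))
    return round(100 * weighted / sum(weights), 1)

# Hypothetical inputs: mentioned in 45% of prompts, 80% of mentions positive,
# and present in half of the prompt categories tracked.
score = visibility_score(mention_rate=0.45, positive_share=0.80, prompt_breadth=0.50)
print(score)
```

Whatever weighting you choose, keep it fixed over time so that score changes reflect changes in visibility rather than changes in the formula.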

Benchmarking matters because raw scores lack context. Knowing you have a visibility score of 60 is meaningless without understanding that your top competitor scores 85 and the category average is 52. Effective monitoring systems provide competitive context, showing not just your absolute performance but your relative position in the AI visibility landscape.
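The example figures above (a score of 60, a top competitor at 85, a category average of 52) can be put into context with a small helper. The competitor names and the other scores are made up so that the average works out to 52, and `benchmark` is a hypothetical function.

```python
def benchmark(own_score, competitor_scores):
    """Place one score in context: gap to the leader, gap to the average, and rank."""
    all_scores = [own_score, *competitor_scores.values()]
    category_average = sum(all_scores) / len(all_scores)
    return {
        "gap_to_leader": own_score - max(competitor_scores.values()),
        "vs_category_average": own_score - category_average,
        # Rank 1 is the highest score in the category.
        "rank": 1 + sum(1 for s in competitor_scores.values() if s > own_score),
    }

# Hypothetical category: the average across all five brands is 52.
competitors = {"Globex": 85, "Initech": 40, "Umbrella": 35, "Hooli": 40}
position = benchmark(60, competitors)
print(position)
```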

Here's where cross-platform monitoring becomes non-negotiable: Different AI models have different training data, different update cycles, and different user bases. ChatGPT might mention your brand frequently while Claude rarely does. Perplexity might characterize you positively while Google's AI Overview emphasizes different attributes. Multi-model AI presence monitoring eliminates these dangerous blind spots. Your target audience isn't using just one AI tool; they're using whichever one is convenient in the moment. Comprehensive visibility requires tracking across the major platforms: ChatGPT, Claude, Perplexity, Gemini, and Copilot, plus emerging alternatives as they gain users.

The monitoring cadence matters too. What AI models say about a brand can shift as platforms with web retrieval index new content and as training data refreshes. A weekly snapshot might miss important shifts in how you're being represented. Real-time AI model monitoring captures trends as they emerge, allowing faster strategic responses when sentiment shifts or mention frequency changes.

From Monitoring to Action: Turning AI Insights Into Content Strategy

Data without action is just expensive noise. The real value of AI model monitoring emerges when you translate insights into strategic content decisions that improve your visibility.

Start by identifying content gaps—the queries where competitors get mentioned but you don't. These gaps represent clear opportunities. If monitoring reveals that AI models recommend three competitors when users ask about "marketing automation for e-commerce," but never mention you, that's your signal. You need comprehensive, authoritative content that positions you as a solution for that specific use case.
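The gap-finding step described above can be sketched as a filter over collected responses: keep the prompts where at least one competitor is named and your brand is not. The data layout (a dict of prompt to response texts), the brand names, and the `content_gaps` helper are assumptions for illustration.

```python
def content_gaps(responses_by_prompt, brand, competitors):
    """Return prompts where competitors are mentioned but the brand never is."""
    gaps = {}
    for prompt, responses in responses_by_prompt.items():
        joined = " ".join(responses).lower()
        if brand.lower() in joined:
            continue  # The brand appears at least once, so this is not a gap.
        present = [c for c in competitors if c.lower() in joined]
        if present:
            gaps[prompt] = present
    return gaps

# Hypothetical responses collected for two prompts.
responses_by_prompt = {
    "marketing automation for e-commerce": ["Consider Globex, Initech, or Umbrella."],
    "best email marketing platform": ["Acme and Globex are popular."],
}
gaps = content_gaps(responses_by_prompt, "Acme", ["Globex", "Initech", "Umbrella"])
print(gaps)
```

Each key in the result is a candidate content brief, and the listed competitors show who currently holds that query.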

This is where the feedback loop begins. Monitoring data shows you where you're absent. You create content specifically designed to fill those gaps—detailed guides, case studies, comparison articles, and implementation resources. Then you track whether your AI visibility improves in those areas. This isn't traditional content marketing where you publish and hope. It's strategic, data-driven optimization with measurable outcomes.

The content you create for AI visibility differs from traditional SEO content in important ways. AI models prioritize comprehensiveness, accuracy, and direct usefulness. They favor content that thoroughly answers user questions without marketing fluff. Think less about keyword density and more about information density. A 3,000-word guide that definitively answers "how to choose marketing automation software" will outperform ten 500-word blog posts dancing around the topic.

This approach is called Generative Engine Optimization, or GEO. While SEO optimizes for search engine rankings, GEO optimizes for being cited and recommended by AI models. The tactics overlap but the priorities differ. GEO emphasizes structured data, clear hierarchies of information, authoritative sourcing, and comprehensive coverage of topics. Understanding how AI models choose information sources is essential for crafting content that gets cited.

Practical GEO tactics include creating comparison content that directly addresses "X vs. Y" queries, developing detailed implementation guides for common use cases, publishing data-driven resources that AI models can cite as sources, and maintaining regularly updated content that reflects current best practices. Learning how to optimize content for AI models gives you a systematic framework for these efforts.

The feedback loop accelerates over time. As you publish optimized content, your AI visibility improves in targeted areas. As visibility improves, you gain data on which content formats and topics drive the most mentions. You double down on what works, refine what doesn't, and continuously expand your footprint across relevant queries. Brands that execute this cycle effectively see compounding returns—each piece of optimized content improves visibility, which provides better data for the next piece.

Setting Up Your AI Monitoring System: A Practical Framework

Implementation starts with defining what you're actually tracking. Begin by creating a prompt library—a collection of queries that represent how your target audience researches solutions in your category. These should include direct product queries like "best CRM for small businesses," comparison queries like "HubSpot vs. Salesforce," problem-focused queries like "how to improve email deliverability," and recommendation queries like "what tools do marketing agencies use."

Your initial prompt library should contain 30-50 queries that span the customer journey from early research to final decision-making. Don't just focus on queries where you expect to appear. Include the searches where you want to appear but currently don't—those represent your growth opportunities.
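A prompt library can be kept as simple structured data with a few sanity checks. The sketch below is one hypothetical layout: the `Prompt` fields, the `QUERY_TYPES` labels, and the `library_issues` checks are assumptions that mirror the guidance above (four query types, 30-50 prompts, and some prompts where you do not yet appear).

```python
from collections import Counter
from dataclasses import dataclass

QUERY_TYPES = ("product", "comparison", "problem", "recommendation")

@dataclass(frozen=True)
class Prompt:
    text: str
    query_type: str           # one of QUERY_TYPES
    expected_to_appear: bool  # False marks a growth-opportunity prompt

def library_issues(prompts, min_size=30, max_size=50):
    """Return a list of human-readable problems with the prompt library."""
    issues = []
    if not min_size <= len(prompts) <= max_size:
        issues.append(f"library has {len(prompts)} prompts; target is {min_size}-{max_size}")
    counts = Counter(p.query_type for p in prompts)
    for query_type in QUERY_TYPES:
        if counts[query_type] == 0:
            issues.append(f"no {query_type} queries")
    if all(p.expected_to_appear for p in prompts):
        issues.append("no prompts where the brand is currently absent")
    return issues

library = [
    Prompt("best CRM for small businesses", "product", True),
    Prompt("HubSpot vs. Salesforce", "comparison", False),
    Prompt("how to improve email deliverability", "problem", True),
    Prompt("what tools do marketing agencies use", "recommendation", False),
]
issues = library_issues(library)
print(issues)
```

With only four prompts, the check flags the library as too small while confirming that every query type is covered.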

Next, establish competitor benchmarks. Identify 3-5 direct competitors and track their AI visibility across the same prompt library. This creates the comparative context you need to understand your relative position. You're not just measuring whether you're visible—you're measuring whether you're more or less visible than the brands competing for the same customers.

Platform prioritization depends on where your audience actually engages with AI. For B2B marketers, ChatGPT and Claude dominate professional workflows. For consumer brands, Perplexity and Google's AI Overview capture more search-intent traffic. For technical audiences, Claude and newer specialized models matter more. An AI visibility monitoring platform can help you track across the 3-4 platforms most relevant to your target users, then expand coverage as your monitoring practice matures.

Alert thresholds prevent data overload. Configure your monitoring system to flag significant changes: sudden drops in mention frequency, shifts from positive to negative sentiment, competitors gaining visibility in your core territory, or new opportunities where you're starting to appear. These alerts let you respond quickly to changes without manually reviewing every data point daily.
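Alerting of this kind amounts to comparing two snapshots against thresholds. The following is a minimal sketch under assumed values: the `Snapshot` fields and the default thresholds (10 and 15 percentage points) are illustrative choices, not recommended settings.

```python
from dataclasses import dataclass

@dataclass
class Snapshot:
    mention_rate: float         # share of prompts that mention the brand, 0-1
    positive_share: float       # share of mentions with positive sentiment, 0-1
    top_competitor_rate: float  # mention rate of the strongest competitor, 0-1

def alerts(previous, current, drop=0.10, sentiment_drop=0.15, competitor_gain=0.10):
    """Return alert messages for changes that cross the given thresholds."""
    flagged = []
    if previous.mention_rate - current.mention_rate >= drop:
        flagged.append("mention frequency dropped")
    if previous.positive_share - current.positive_share >= sentiment_drop:
        flagged.append("sentiment shifted negative")
    if current.top_competitor_rate - previous.top_competitor_rate >= competitor_gain:
        flagged.append("competitor gained visibility")
    return flagged

# Hypothetical week-over-week comparison.
last_week = Snapshot(mention_rate=0.40, positive_share=0.70, top_competitor_rate=0.50)
this_week = Snapshot(mention_rate=0.25, positive_share=0.65, top_competitor_rate=0.55)
triggered = alerts(last_week, this_week)
print(triggered)
```

Tuning the thresholds is a trade-off: tight values catch changes early but produce more noise to review.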

Integration with existing marketing workflows is where monitoring becomes operational rather than theoretical. Feed AI visibility data into your content planning meetings. Use sentiment insights to inform messaging strategy. Let prompt-response mapping guide your SEO priorities. When your content team sees that competitors dominate AI responses for "affordable project management tools," that becomes a content brief with clear strategic justification.

Establish a reporting cadence that matches your decision-making rhythm. Weekly snapshots work for tactical content decisions. Monthly reports suit strategic planning and budget allocation. Quarterly reviews provide the perspective needed to measure long-term trends and evaluate whether your GEO investments are paying off. LLM monitoring tools for marketers can streamline this entire reporting process.

Common Monitoring Pitfalls and How to Avoid Them

The most expensive mistake is monitoring without acting. Some brands implement comprehensive tracking, generate detailed reports, and then do nothing with the insights. They're collecting data instead of driving decisions. AI visibility monitoring only creates value when insights translate into content strategy, messaging adjustments, and competitive responses. If your monitoring system isn't directly influencing what content you create and how you position your brand, you're wasting resources.

Single-platform monitoring creates dangerous blind spots. Brands that only track ChatGPT miss what Claude users see. Those focused solely on Perplexity remain ignorant of Google AI Overview recommendations. Your customers don't use just one AI platform—they use whichever is convenient in the moment. Comprehensive visibility requires cross-platform coverage. The good news is that once you've built your prompt library and established your monitoring methodology, expanding to additional platforms is mostly operational execution.

Focusing exclusively on mention frequency while ignoring sentiment is another common trap. Being mentioned negatively is often worse than not being mentioned at all. If AI models consistently characterize your brand as "expensive," "complicated," or "outdated," those mentions are actively damaging your positioning. Sentiment analysis for brand monitoring reveals whether your visibility is an asset or a liability—and whether you need reputation management content to shift AI model characterizations.

Many brands also make the mistake of tracking only branded queries—searches that explicitly include their company name. This provides an incomplete picture. The valuable visibility happens in non-branded category queries where users are forming their consideration sets. "What's the best email marketing platform" matters far more than "what do people think about [Your Brand]." The first query represents discovery opportunities. The second only measures awareness you've already built.

Finally, expecting immediate results leads to premature abandonment. AI model knowledge bases update gradually as new content gets indexed and training data refreshes. Publishing optimized content today doesn't guarantee visibility tomorrow. The feedback loop operates on weeks and months, not days. Brands that treat GEO like paid advertising—expecting instant results—get frustrated and quit before the compounding benefits materialize. Understanding how AI models verify information accuracy helps set realistic expectations for this timeline.

Putting AI Visibility Into Practice

AI model monitoring for marketing represents a fundamental shift in how brands approach organic discovery. The monitoring-to-optimization cycle creates a systematic path to visibility: track where you appear and where you don't, identify content gaps and sentiment issues, create optimized content that addresses those gaps, and measure whether your visibility improves. This cycle compounds over time, with each iteration providing better data for the next.

The impact extends beyond just being mentioned more often. Brands that master AI visibility gain strategic advantages across multiple marketing objectives. You build brand authority by becoming the source AI models cite when users ask questions in your domain. You generate qualified leads by appearing in the consideration set during active research phases. You strengthen competitive positioning by owning territory where rivals currently dominate AI responses.

Start with an audit of your current AI visibility. Take your 10 most important category queries and manually check how often your brand appears across ChatGPT, Claude, and Perplexity. This baseline reveals where you stand today and provides context for measuring improvement. Most brands discover they're far less visible than they assumed—which is both sobering and clarifying.

Establish baseline metrics across your prompt library. Track mention frequency, sentiment distribution, and competitive benchmarks. These become your starting point for measuring progress. Without clear baselines, you can't demonstrate ROI or justify continued investment in GEO initiatives.

Implement systematic monitoring that feeds directly into content planning. The brands winning in AI visibility aren't just tracking data—they're building feedback loops where monitoring insights drive content strategy, which improves visibility, which generates better insights. This operational integration is what separates monitoring that creates value from monitoring that generates reports nobody acts on.

The opportunity is clear: AI-powered discovery is growing while traditional search behaviors are declining. Users increasingly want recommendations delivered conversationally rather than lists of links to evaluate themselves. The brands that establish strong AI visibility now will own organic discovery in this new paradigm. Those that wait will spend years playing catch-up while competitors build compounding advantages.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.