A misconfigured robots.txt file can silently tank your SEO. One wrong directive blocks Googlebot from your most important pages; another opens a staging environment to every crawler on the web. The consequences are rarely immediate, which makes them even more dangerous.

What's changed in 2026 is the scope of the problem. It's no longer just Googlebot you need to think about. GPTBot, ClaudeBot, PerplexityBot, and a growing roster of AI crawlers are now hitting your site regularly, scraping content for training data and retrieval-augmented generation. Getting your robots.txt right means thinking about all of them.
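Here is one way to express an AI-crawler policy and check it locally. The policy is illustrative, not a recommendation: it blocks GPTBot and ClaudeBot site-wide and lets PerplexityBot and everyone else crawl everything except /staging/. The example.com domain and /staging/ path are placeholders. The check uses Python's standard-library urllib.robotparser, which only does simple prefix matching and ignores the * and $ wildcards. Treat it as a quick sanity check, not a replica of any crawler's parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy for example.com: paths and agents are placeholders.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
Disallow: /

# A named group does not inherit the "*" rules below,
# so anything PerplexityBot should skip is repeated here.
User-agent: PerplexityBot
Disallow: /staging/

User-agent: *
Disallow: /staging/

Sitemap: https://example.com/sitemap.xml
"""


def build_parser(text):
    """Parse robots.txt text directly, without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


def access_matrix(parser, agents, urls):
    """Return {agent: {url: allowed}} for every agent/URL pair."""
    return {agent: {url: parser.can_fetch(agent, url) for url in urls} for agent in agents}


if __name__ == "__main__":
    parser = build_parser(ROBOTS_TXT)
    agents = ["Googlebot", "GPTBot", "ClaudeBot", "PerplexityBot"]
    urls = ["https://example.com/blog/post", "https://example.com/staging/new-home"]
    for agent, results in access_matrix(parser, agents, urls).items():
        for url, allowed in results.items():
            print(f"{agent:<14} {'allow' if allowed else 'block':<6} {url}")
    print("Sitemaps:", parser.site_maps())
```

Note the PerplexityBot group. A crawler obeys only the most specific group that names it, so once a bot has its own group, the rules under User-agent: * no longer apply to it.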

Whether you need a simple free generator or a full technical SEO platform with validation and indexing tools built in, here are the best robots.txt generators available right now.

1. Sight AI

Best for: Teams who want to control crawl access and monitor AI brand visibility in one place.

Sight AI is an all-in-one AI visibility and indexing platform that combines website indexing tools with AI brand monitoring across ChatGPT, Claude, Perplexity, and more.

Where This Tool Shines

Most robots.txt tools stop at generation or validation. Sight AI goes further by connecting your crawl control decisions to actual outcomes: are the right pages being indexed, and is your brand showing up when AI models answer questions in your category?

The IndexNow integration notifies participating search engines such as Bing and Yandex the moment you publish or update content, cutting the lag between publishing and indexing. Google does not currently support IndexNow, so for Google you still depend on your sitemap and its regular crawling. Combined with automated sitemap management and CMS auto-publishing, Sight AI handles the full indexing workflow rather than just one piece of it.
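IndexNow itself is an open protocol. You host a key file on your domain and POST the changed URLs to a participating endpoint. The sketch below only builds that request with Python's standard library. It assumes the shared https://api.indexnow.org/indexnow endpoint and a key file at the site root, and the key shown is hypothetical. It is not Sight AI's implementation.

```python
from __future__ import annotations

import json
import urllib.request
from urllib.parse import urlparse

# Assumption: the shared endpoint that forwards submissions to participating engines.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(key: str, urls: list[str]) -> urllib.request.Request:
    """Build (but do not send) a bulk IndexNow submission for a single host."""
    hosts = {urlparse(url).netloc for url in urls}
    if len(hosts) != 1:
        raise ValueError(f"submit one host at a time, got {sorted(hosts)}")
    host = hosts.pop()
    payload = {
        "host": host,
        "key": key,
        # The file at this URL must contain the key, proving you control the host.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_indexnow_request(
        "0123456789abcdef",  # hypothetical key
        ["https://example.com/blog/new-post", "https://example.com/pricing"],
    )
    print(req.get_method(), req.full_url)
    print(req.data.decode("utf-8"))
    # urllib.request.urlopen(req) would actually send it.
```

A 2xx response means the submission was accepted for processing. It does not guarantee that the URLs will be indexed.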

Key Features

IndexNow Integration: Notifies IndexNow-participating search engines as soon as content is published or updated, speeding up discovery without manual submission.

Automated Sitemap Management: Keeps your sitemap current automatically, so it always reflects your live site structure.

AI Visibility Score: Tracks how your brand is mentioned, cited, and described across six or more AI platforms including ChatGPT, Claude, and Perplexity.

CMS Auto-Publishing: Publishes content directly from the platform with built-in indexing triggers, removing manual steps from the workflow.

AI Content Writer: Thirteen-plus specialized AI agents generate SEO and GEO-optimized content including listicles, guides, and explainers designed to earn AI citations.

Best For

Marketers, founders, and agencies who want more than a robots.txt file editor. If you're thinking about indexing speed, AI crawler visibility, and content performance together rather than as separate problems, Sight AI is built for that workflow.

Pricing

Visit trysight.ai for current plans and pricing. Multiple tiers are available depending on site volume and feature needs.

2. Screaming Frog SEO Spider

Best for: Technical SEOs who want to validate robots.txt by simulating how crawlers actually experience the site.

Screaming Frog SEO Spider is the industry-standard desktop crawler that tests robots.txt compliance by crawling your entire site and flagging what's blocked, what's conflicting, and what's misconfigured.

Where This Tool Shines

The distinction here is that Screaming Frog doesn't just read your robots.txt in isolation. It crawls your site the way a search engine would and surfaces the real-world consequences of your directives: which URLs are blocked, which resources are inaccessible, and where your directives conflict with each other.

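The custom user-agent setting is particularly useful for testing how different bots, including AI crawlers, would interpret your current setup. Set the agent in the crawl configuration, re-run the crawl under another agent, and compare the results. The custom robots.txt option goes a step further and lets you test edited directives without touching your live file.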
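A full crawl beats URL-by-URL testing because a blocked page also hides every page that is only linked from it. The toy model below shows that effect. It is not how Screaming Frog works internally, and the link graph, paths and function name are hypothetical.

```python
from collections import deque
from urllib.robotparser import RobotFileParser

# Hypothetical site: each page path maps to the internal links found on it.
LINK_GRAPH = {
    "/": ["/blog/", "/products/", "/search/"],
    "/blog/": ["/blog/robots-guide"],
    "/blog/robots-guide": [],
    "/products/": ["/products/widget"],
    "/products/widget": [],
    "/search/": ["/search/results", "/landing/spring-sale"],
    "/search/results": [],
    "/landing/spring-sale": [],
}

ROBOTS_TXT = "User-agent: *\nDisallow: /search/\n"


def simulate_crawl(robots_txt, user_agent, graph, start="/"):
    """Breadth-first crawl from start that refuses URLs robots.txt disallows.

    Returns (crawled, blocked): pages fetched in crawl order, and linked
    pages that were discovered but refused.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    crawled, blocked = [], set()
    seen, queue = {start}, deque([start])
    while queue:
        path = queue.popleft()
        if not parser.can_fetch(user_agent, path):
            blocked.add(path)
            continue  # a blocked page is never fetched, so its links are never seen
        crawled.append(path)
        for link in graph.get(path, []):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return crawled, blocked


if __name__ == "__main__":
    crawled, blocked = simulate_crawl(ROBOTS_TXT, "Googlebot", LINK_GRAPH)
    never_reached = set(LINK_GRAPH) - set(crawled) - blocked
    print("crawled:", crawled)
    print("blocked:", sorted(blocked))
    print("never reached:", sorted(never_reached))
```

Here /landing/spring-sale is not disallowed anywhere. It still never gets crawled, because its only inbound link sits on a blocked page. Testing that URL on its own would report "allowed", but the crawl shows it is effectively invisible.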

Key Features

Full Site Crawl with Robots.txt Compliance Testing: Crawls the entire site and flags every URL affected by your current directives.

Custom User-Agent Simulation: Test how any specific bot, including AI crawlers, would interpret your robots.txt.

Exportable Crawl Reports: Download blocked URL lists and directive conflict reports for client presentations or internal audits.

Google Analytics and Search Console Integration: Layer traffic and performance data on top of crawl findings for richer analysis.

Free Version: Crawls up to 500 URLs at no cost, sufficient for smaller sites and initial audits.

Best For

Technical SEO consultants, in-house SEO teams, and agencies running site audits who need to see the downstream effects of robots.txt directives across a full crawl rather than testing individual URLs.

Pricing

Free for up to 500 URLs. The paid license is £259 per year and removes the crawl limit entirely.

3. Google Search Console Robots.txt Report

Best for: Verifying exactly how Googlebot reads your current robots.txt file.

Google retired the standalone robots.txt Tester in Search Console in late 2023. Its replacement, the robots.txt report, shows which robots.txt files Google has found for your site, when each was last crawled, and any errors or warnings Google hit while parsing them. Paired with the URL Inspection tool, it shows you precisely how Google interprets what you've written.

Where This Tool Shines

There's no more authoritative source for "how does Google read this?" than Google itself. Inspect any URL on your property and Search Console reports whether Googlebot is allowed to crawl it or is blocked by robots.txt. That verdict is based on the file Google actually fetched, not the one you assume is live.

The report also flags syntax errors and warnings that might cause Googlebot to misinterpret your file. After an urgent fix, you can request a recrawl of robots.txt instead of waiting for Google to refetch it on its own schedule. For anyone who has ever wondered whether a trailing slash or a wildcard character is working as intended, inspecting an affected URL gives you Google's own answer.

Key Features

URL-Level Checks: Inspect any URL on your site to see whether Googlebot is allowed to crawl it or is blocked by robots.txt.

Fetch Status: Lists the robots.txt files Google found for your site, when each was last crawled, and whether the fetch succeeded.

Syntax Error Detection: Flags formatting issues and warnings that could cause Googlebot to misread your file.

No Setup Required: Available to any verified Search Console property owner with no additional tools needed.

Best For

Any site owner or SEO who wants to confirm that Google is reading their robots.txt the way they intended. It is especially useful right after you change the file, to confirm Google has fetched the new version before you rely on it.

Pricing

Completely free with any verified Google Search Console property.
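Whichever tool you check with, it helps to know the precedence Google documents. The most specific (longest) matching rule wins, and an Allow beats a Disallow of equal length. A * matches any run of characters, and a trailing $ anchors the end of the URL. The sketch below approximates those rules for offline experiments. It is not Google's parser (Google publishes its own open-source C++ robots.txt library), and it skips details such as percent-encoding normalization. The helper names are hypothetical.

```python
import re
from urllib.robotparser import RobotFileParser


def pattern_to_regex(pattern):
    """Compile a robots.txt path pattern: '*' matches any run of characters,
    a trailing '$' anchors the end, and matching starts at the path's beginning."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = re.escape(pattern).replace(r"\*", ".*")
    return re.compile(regex + ("$" if anchored else ""))


def google_style_allowed(rules, path):
    """Approximate Google's documented precedence within one user-agent group.

    rules: (directive, pattern) pairs such as ("Disallow", "/wp-admin/").
    The longest matching pattern wins; on a tie, Allow wins; no match means allowed.
    """
    best_length, allowed = -1, True
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty "Disallow:" blocks nothing
        if pattern_to_regex(pattern).match(path):
            is_allow = directive.lower() == "allow"
            if len(pattern) > best_length or (len(pattern) == best_length and is_allow):
                best_length, allowed = len(pattern), is_allow
    return allowed


def stdlib_allowed(rules, path, agent="Googlebot"):
    """Evaluate the same rules with urllib.robotparser (first match in file order)."""
    parser = RobotFileParser()
    parser.parse(["User-agent: *"] + [f"{d}: {p}" for d, p in rules])
    return parser.can_fetch(agent, path)


# The rule pair WordPress serves in its default virtual robots.txt.
WORDPRESS_DEFAULT = [("Disallow", "/wp-admin/"), ("Allow", "/wp-admin/admin-ajax.php")]

if __name__ == "__main__":
    checks = [
        (WORDPRESS_DEFAULT, "/wp-admin/admin-ajax.php"),
        (WORDPRESS_DEFAULT, "/wp-admin/options.php"),
        ([("Disallow", "/private/")], "/private"),  # the trailing slash matters
        ([("Disallow", "/*.pdf$")], "/files/report.pdf"),
        ([("Disallow", "/*.pdf$")], "/files/report.pdf?download=1"),
    ]
    for rules, path in checks:
        print(f"{path:<30} google-style={google_style_allowed(rules, path)!s:<6} "
              f"stdlib={stdlib_allowed(rules, path)}")
```

The WordPress pair shows why the question matters. Google's longest-match rule allows admin-ajax.php. Python's urllib.robotparser applies rules in file order and reports the same URL as blocked. Different crawlers can read the same file differently.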

4. Yoast SEO

Best for: WordPress site owners who want to manage robots.txt without leaving their dashboard.

Yoast SEO is the widely-used WordPress SEO plugin that includes a built-in robots.txt editor, letting you create and modify directives directly from your WordPress admin panel.

Where This Tool Shines

For WordPress users, the appeal is integration. Rather than FTP-ing into your server or using a separate tool, you edit your robots.txt file from the same place you manage your meta titles, sitemaps, and on-page SEO. Changes are applied immediately without touching a file manager.

Yoast also auto-generates a robots.txt file for new WordPress installations with sensible defaults, which reduces the risk of a blank or missing file causing crawl issues from day one.

Key Features

In-Dashboard Robots.txt Editor: Edit your robots.txt file directly from WordPress admin without FTP or server access.

Automatic Robots.txt Creation: Generates a default file for new WordPress sites with appropriate starting directives.

Sitemap Integration: Yoast's XML sitemap is automatically referenced in the robots.txt file, keeping both in sync.

Broader SEO Integration: Robots.txt management sits alongside meta tags, breadcrumbs, and schema markup in one unified plugin.

Best For

WordPress site owners and developers who want robots.txt management folded into their existing SEO workflow without installing a separate tool or accessing server files directly.

Pricing

The core Yoast SEO plugin is free. Yoast SEO Premium starts at $99 per year and adds features like redirect management and internal linking suggestions.

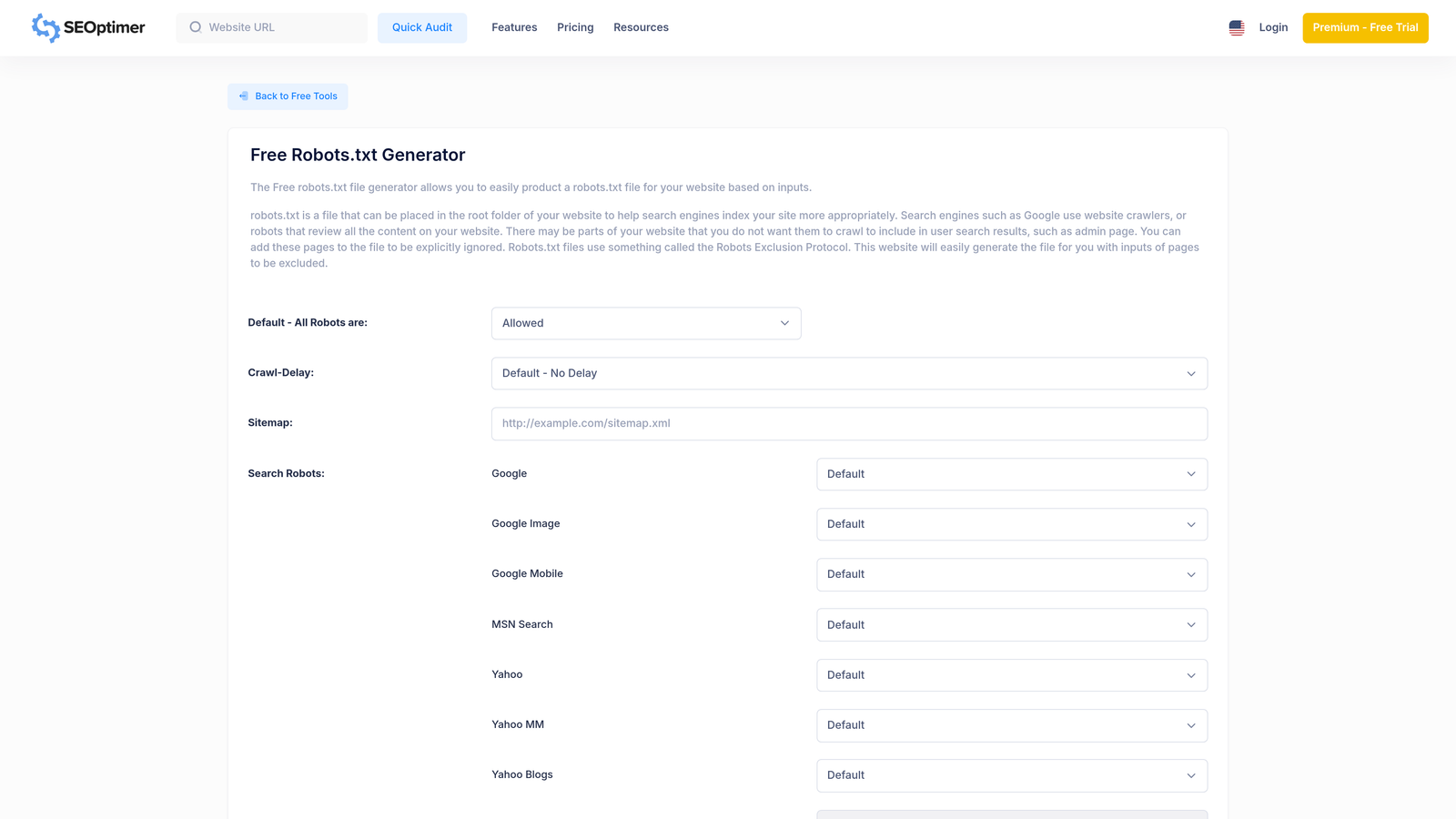

5. SEOptimer Robots.txt Generator

Best for: Quick robots.txt file creation without an account or technical knowledge.

SEOptimer's Robots.txt Generator is a lightweight, free online tool that builds a robots.txt file through a simple form interface with no signup required.

Where This Tool Shines

Sometimes you just need to generate a clean robots.txt file quickly. SEOptimer's generator handles that without friction. You fill in the form, select which user agents to address, add your sitemap URL, and get a ready-to-deploy file in seconds.

It's not a validation tool and it doesn't crawl your site, but for straightforward use cases where you know what you want and just need the correct syntax, it removes the need to remember directive formatting entirely.
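Behind the form, a generator like this mostly assembles strings. Here is a minimal sketch of that logic in Python. The Group and generate_robots_txt names are hypothetical, and this is not SEOptimer's code.

```python
from __future__ import annotations

from collections.abc import Sequence
from dataclasses import dataclass, field


@dataclass
class Group:
    """One robots.txt group: the user agents it targets and their path rules."""
    user_agents: list[str]
    disallow: list[str] = field(default_factory=list)
    allow: list[str] = field(default_factory=list)


def generate_robots_txt(groups: Sequence[Group], sitemap_urls: Sequence[str] = ()) -> str:
    """Assemble groups and sitemap references into robots.txt text."""
    lines: list[str] = []
    for group in groups:
        if not group.user_agents:
            raise ValueError("every group needs at least one User-agent line")
        lines += [f"User-agent: {agent}" for agent in group.user_agents]
        lines += [f"Disallow: {path}" for path in group.disallow]
        lines += [f"Allow: {path}" for path in group.allow]
        if not group.disallow and not group.allow:
            lines.append("Disallow:")  # an empty Disallow means "crawl everything"
        lines.append("")  # blank line separates groups
    # Sitemap lines are independent of any group, so they go at the end.
    lines += [f"Sitemap: {url}" for url in sitemap_urls]
    return "\n".join(lines).rstrip("\n") + "\n"


if __name__ == "__main__":
    print(generate_robots_txt(
        [Group(["*"], disallow=["/staging/"]), Group(["GPTBot"], disallow=["/"])],
        ["https://example.com/sitemap.xml"],
    ), end="")
```

A Sitemap line applies to the whole file no matter where it appears, so putting it last is purely a readability choice.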

Key Features

Form-Based Interface: Fill in fields rather than writing raw syntax, reducing the chance of formatting errors.

Common User-Agent Presets: Select from a list of well-known crawlers including Googlebot, Bingbot, and others.

Sitemap URL Field: Include your sitemap reference directly in the generated file.

One-Click Copy or Download: Grab the generated file instantly with no account, no email, and no waiting.

Best For

Developers, small business owners, or non-technical users who need a correctly formatted robots.txt file fast and don't require validation or site-wide crawl analysis.

Pricing

Completely free with no account required.

6. Ryte

Best for: Enterprise teams who need robots.txt analysis as part of large-scale site audits.

Ryte is an enterprise technical SEO platform that includes robots.txt analysis as part of comprehensive site audits, showing how your directives affect crawl coverage across complex, large-scale site architectures.

Where This Tool Shines

Where Ryte differentiates itself is in scale and context. For large sites with thousands or millions of URLs, understanding the cumulative effect of robots.txt directives requires more than a URL-by-URL test. Ryte's crawl-based analysis shows you the full picture: what percentage of your site is accessible, which sections are blocked, and how those patterns have changed over time.

The change monitoring feature is particularly valuable for enterprise environments where robots.txt files are modified by multiple team members. Ryte tracks those changes and surfaces unintended consequences before they affect crawl coverage at scale.
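The core of change monitoring is easy to picture. Evaluate a sample of URLs against the old and new versions of the file, then report whatever flipped. This sketch assumes you already have both versions and a URL list (for example, from your sitemap). It is a hypothetical illustration, not Ryte's implementation, and it inherits urllib.robotparser's prefix-only matching.

```python
from urllib.robotparser import RobotFileParser


def _parse(text):
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


def crawl_access_changes(old_txt, new_txt, user_agent, urls):
    """Return (newly_blocked, newly_allowed) URLs between two robots.txt versions."""
    old, new = _parse(old_txt), _parse(new_txt)
    newly_blocked, newly_allowed = [], []
    for url in urls:
        before, after = old.can_fetch(user_agent, url), new.can_fetch(user_agent, url)
        if before and not after:
            newly_blocked.append(url)
        elif after and not before:
            newly_allowed.append(url)
    return newly_blocked, newly_allowed


# Hypothetical edit: a teammate "tidies" the file, dropping the staging rule
# and shortening /preview/ to /p, which also matches /pricing and /products/.
OLD = "User-agent: *\nDisallow: /staging/\nDisallow: /preview/\n"
NEW = "User-agent: *\nDisallow: /p\n"
SAMPLE_URLS = ["/blog/post", "/pricing", "/products/widget", "/preview/draft", "/staging/home"]

if __name__ == "__main__":
    blocked, allowed = crawl_access_changes(OLD, NEW, "Googlebot", SAMPLE_URLS)
    print("newly blocked:", blocked)
    print("newly allowed:", allowed)
```

In a diff of the file itself, the edit looks like a harmless cleanup. The URL-level diff shows /pricing and /products/widget dropping out of crawl coverage and /staging/home opening up.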

Key Features

Crawl-Based Robots.txt Validation: Validates directives in the context of a full site audit rather than as a standalone file check.

Visual Blocked vs. Accessible Reports: Clearly shows which site sections are crawlable and which are off-limits under current directives.

Change Monitoring: Tracks robots.txt modifications over time and alerts teams to unintended changes.

Enterprise Architecture Support: Built to handle large, complex sites with multiple subdomains, international variants, and high URL volumes.

Best For

Enterprise SEO teams, large e-commerce sites, and media publishers managing high-volume sites where robots.txt errors at scale can have significant traffic consequences.

Pricing

Free basic plan available. Paid plans start at approximately €199 per month; enterprise pricing is available on request.

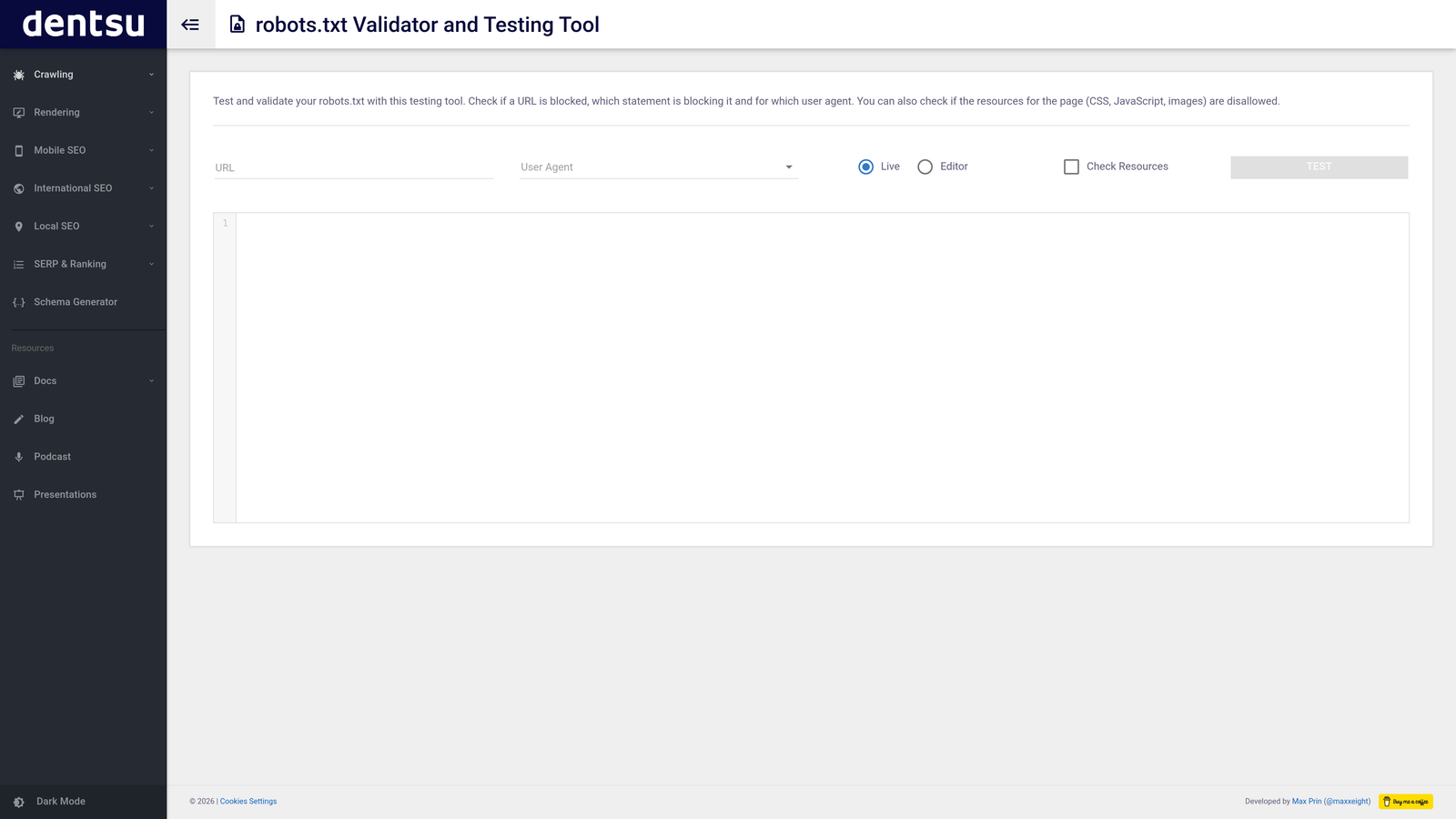

7. Merkle Robots.txt Generator

Best for: Developers and technical SEOs who want precise directive control with a clean interface.

Merkle's Robots.txt Generator is a free, developer-friendly tool from Merkle's technical SEO tools suite that uses structured input fields to produce precise directives.

Where This Tool Shines

Merkle's generator is built with technical accuracy as the primary goal. The structured fields for user-agent, allow, disallow, and sitemap directives make it harder to introduce formatting errors, and the clean interface doesn't add unnecessary complexity.

As part of Merkle's broader free technical SEO tools suite, it fits naturally into a workflow where you might also be using their other utilities for hreflang, structured data, or meta tag generation. No account is needed for any of them.
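Structured fields rule out a specific class of mistakes. To show what those are, here is a small hypothetical linter that catches the same errors in a hand-written file. It flags misspelled or unsupported directives, missing colons, rules that appear before any User-agent line, paths that don't start with /, and relative Sitemap URLs. It is an illustration, not Merkle's validator, and it checks syntax only, not whether your rules do what you intend.

```python
KNOWN_DIRECTIVES = {"user-agent", "allow", "disallow", "sitemap", "crawl-delay"}


def lint_robots_txt(text):
    """Return (line_number, message) pairs for common robots.txt mistakes."""
    problems = []
    seen_user_agent = False
    for number, raw in enumerate(text.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if ":" not in line:
            problems.append((number, "missing ':' separator"))
            continue
        key, value = (part.strip() for part in line.split(":", 1))
        key = key.lower()
        if key not in KNOWN_DIRECTIVES:
            problems.append((number, f"unknown directive '{key}'"))
        elif key == "user-agent":
            seen_user_agent = True
        elif key in ("allow", "disallow") and not seen_user_agent:
            problems.append((number, f"'{key}' appears before any User-agent line"))
        elif key in ("allow", "disallow") and value and not value.startswith(("/", "*")):
            problems.append((number, f"path '{value}' should start with '/'"))
        elif key == "sitemap" and not value.startswith(("http://", "https://")):
            problems.append((number, "Sitemap must be an absolute URL"))
    return problems


if __name__ == "__main__":
    sample = (
        "Disallow: /tmp/\n"
        "User-agent: *\n"
        "Dissallow: /private/\n"
        "Disallow: admin\n"
        "Sitemap: /sitemap.xml\n"
        "Allow /public/\n"
    )
    for number, message in lint_robots_txt(sample):
        print(f"line {number}: {message}")
```

A noindex line also shows up as an unknown directive, which is the right outcome: Google stopped honoring noindex in robots.txt in 2019.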

Key Features

Structured Directive Fields: Separate inputs for user-agent, allow, disallow, and sitemap directives prevent syntax errors.

Technical Accuracy Focus: Interface is designed for precision rather than simplicity, making it well-suited for developers.

Part of a Free Tools Suite: Integrates with Merkle's other technical SEO utilities for a broader workflow.

No Account Required: Fully accessible without registration or login.

Best For

Developers, technical SEOs, and digital marketing consultants who want a reliable, no-frills generator with structured inputs and no account overhead.

Pricing

Completely free with no account or signup required.

8. Ahrefs Webmaster Tools

Best for: Site owners who want robots.txt issue detection alongside broader crawl health and backlink analysis.

Ahrefs Webmaster Tools is a free site audit platform that flags robots.txt errors and misconfigured directives as part of a comprehensive crawl health analysis, integrated with Ahrefs' backlink and keyword data.

Where This Tool Shines

Ahrefs Webmaster Tools doesn't generate robots.txt files, but it's one of the most useful tools for finding problems with your existing one. The Site Audit identifies blocked pages, conflicting directives, and pages that are blocked by robots.txt but still receiving backlinks, which is a particularly common and costly mistake.

The integration with Ahrefs' broader dataset means you can immediately assess the SEO impact of a blocked URL: does it have backlinks? Is it ranking for anything? That context makes prioritization much faster than a crawl report alone.
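The prioritization logic is simple to sketch: find the URLs your robots.txt blocks, then rank them by how much link equity they attract. This assumes a hypothetical export mapping URL paths to referring-domain counts from whatever backlink tool you use. It is not how Ahrefs computes anything internally.

```python
from urllib.robotparser import RobotFileParser


def prioritize_blocked(robots_txt, user_agent, referring_domains):
    """Return (path, count) for blocked URLs, most-linked first.

    referring_domains: {url_path: referring-domain count} from a backlink export.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    blocked = [
        (path, count)
        for path, count in referring_domains.items()
        if not parser.can_fetch(user_agent, path)
    ]
    # Sort by count descending, then by path so ties come out in a stable order.
    return sorted(blocked, key=lambda item: (-item[1], item[0]))


ROBOTS_TXT = "User-agent: *\nDisallow: /old-campaigns/\nDisallow: /tmp/\n"
BACKLINKS = {  # hypothetical export
    "/blog/guide": 120,
    "/old-campaigns/2019-launch": 42,
    "/old-campaigns/promo": 7,
    "/tmp/test-page": 0,
}

if __name__ == "__main__":
    for path, count in prioritize_blocked(ROBOTS_TXT, "Googlebot", BACKLINKS):
        print(f"{count:>4} referring domains  {path}")
```

The blocked page with 42 referring domains rises to the top. That is exactly the "blocked but still earning links" case worth fixing first.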

Key Features

Site Audit Robots.txt Detection: Flags errors, warnings, and misconfigured directives as part of a full site crawl.

Blocked Page Identification: Shows exactly which pages are currently inaccessible to crawlers under your directives.

Backlink and Keyword Context: Cross-references blocked pages with Ahrefs' backlink and ranking data to prioritize fixes.

Free for Verified Owners: Site Audit is available at no cost to verified site owners, within Ahrefs Webmaster Tools' monthly crawl limits.

Best For

SEO professionals and site owners who already use or are considering Ahrefs and want robots.txt issue detection folded into a broader crawl health and performance analysis workflow.

Pricing

Ahrefs Webmaster Tools is free for verified site owners. Full Ahrefs subscription plans start at $129 per month for access to the complete platform.

9. Sitebulb

Best for: Agencies and consultants who need visual crawl analysis with client-ready reporting.

Sitebulb is a desktop-based technical SEO auditing tool that visualizes how robots.txt directives affect your site's crawl paths, with prioritized hints and recommendations for fixing issues.

Where This Tool Shines

Sitebulb's visual crawl maps are what set it apart. Rather than presenting robots.txt issues as a flat list, it shows you how directives interact with your site's architecture: which sections are blocked, how that affects internal link equity flow, and where the highest-priority fixes are.

The client-friendly PDF audit reports make Sitebulb particularly popular with agencies. You can surface robots.txt issues in a format that non-technical stakeholders can understand and act on, without having to rebuild the findings in a separate presentation.

Key Features

Visual Crawl Maps: Graphical representation of how robots.txt directives affect site architecture and crawl paths.

Priority Hints: Automatically categorizes robots.txt issues by severity so you know what to fix first.

Client-Friendly PDF Reports: Generates polished audit reports suitable for presenting to non-technical stakeholders.

Custom User-Agent Testing: Test how different bots, including AI crawlers, would navigate your site under current directives.

Best For

SEO agencies, freelance consultants, and in-house teams who need visual crawl analysis and professional reporting alongside robots.txt validation, rather than a standalone generator.

Pricing

Starts at £13.50 per month for the Lite plan. Higher tiers are available for larger sites and team use.

Which Tool Fits Your Workflow?

The right robots.txt tool depends on what you're actually trying to accomplish. Here's a practical way to think about it.

If you need a file generated quickly with no technical overhead, SEOptimer and Merkle's generator both deliver that for free, with no account required. They're the right choice when you know what directives you want and just need the correct syntax formatted for you.

If you're a WordPress user, Yoast SEO handles robots.txt management natively within your dashboard, which keeps your workflow consolidated without adding another tool to the stack.

For validation and audit work, Screaming Frog, Ahrefs Webmaster Tools, and Sitebulb each bring something distinct. Screaming Frog is the most thorough for technical crawl simulation. Ahrefs adds SEO performance context to blocked page findings. Sitebulb is the strongest choice when client-facing reports are part of the deliverable.

Google Search Console's robots.txt report, used alongside URL Inspection, is the definitive answer to "how does Google read this?" and should be in every SEO's regular workflow regardless of which other tools they use.

For enterprise teams managing complex, high-volume sites, Ryte's change monitoring and crawl-scale analysis fill a gap that lighter tools can't address.

And if you're thinking beyond robots.txt to the broader question of how your content gets indexed and how your brand appears across AI platforms, Sight AI is in a category of its own. Controlling what crawlers can access is only one piece of the puzzle. Knowing whether the right content is being indexed quickly, and whether AI models like ChatGPT and Claude are citing your brand accurately, is what turns crawl management into a competitive advantage.

Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms. Stop guessing how AI models talk about you and start building a content and indexing strategy based on what's actually happening.