Understanding how large language models talk about your brand has become essential for modern marketers. When ChatGPT, Claude, or Perplexity answers questions about your industry, are they mentioning your company—or sending potential customers to competitors?

LLM response analysis tools fall into two broad groups: brand visibility platforms that track how AI models mention and describe your company, and developer observability tools that log, debug, and optimize the LLM responses inside your own applications. This guide covers the top options in both groups, from comprehensive visibility platforms to specialized prompt testing solutions.

Whether you're a marketer tracking brand mentions, an agency managing multiple clients, a founder checking that AI models describe your company accurately, or an engineer running LLM features in production, these tools show you what AI models are actually saying so you can stay competitive in the AI search landscape.

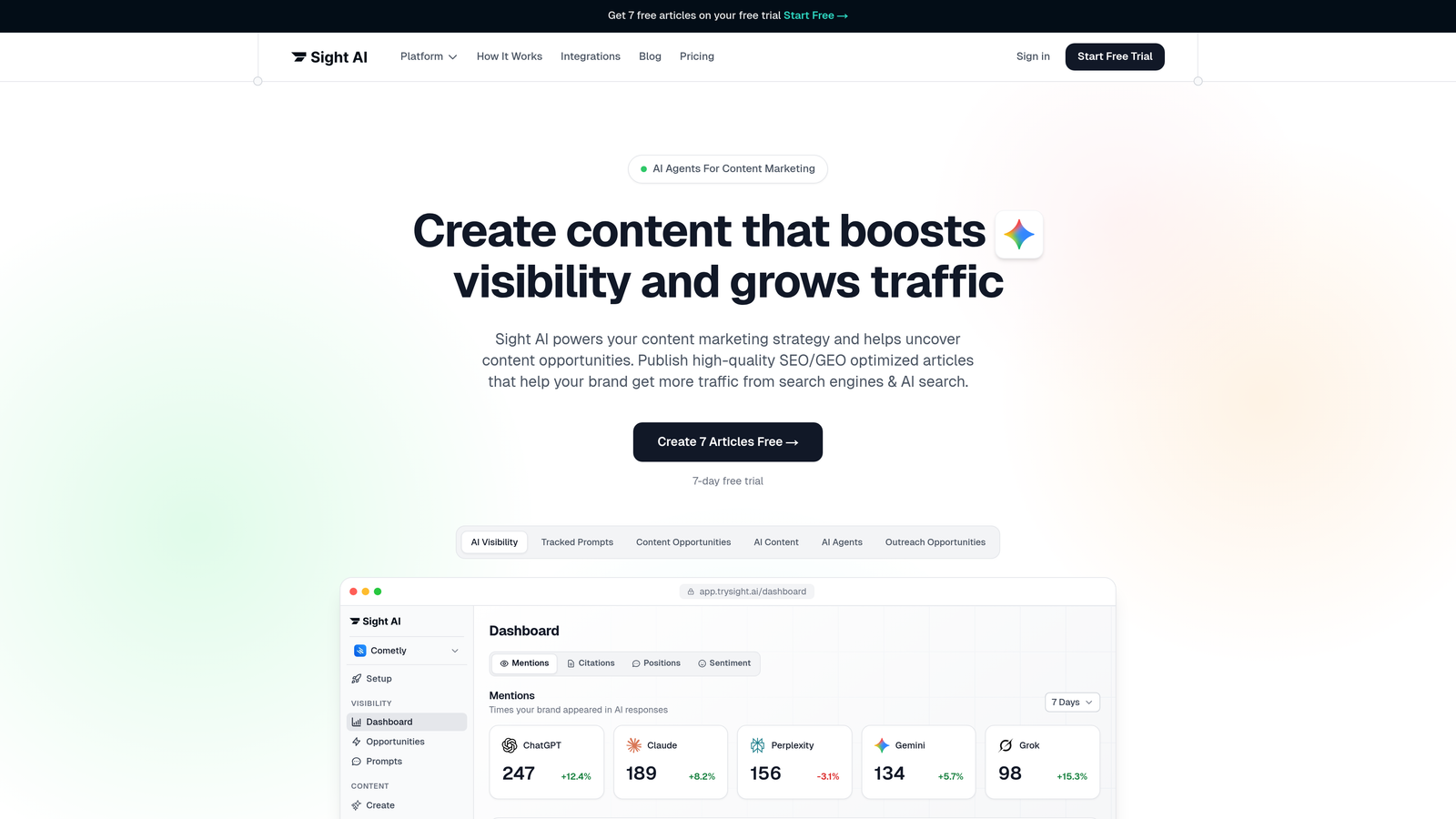

1. Sight AI

Best for: Marketers and agencies tracking brand visibility across multiple AI platforms

Sight AI is an AI visibility tracking platform that monitors how brands are mentioned and represented across major LLMs including ChatGPT, Claude, and Perplexity.

Where This Tool Shines

Sight AI takes a brand-first approach to LLM monitoring, focusing specifically on the questions marketers actually need answered. Instead of technical metrics like latency or token usage, you get visibility into whether AI models are recommending your brand, how they're describing your products, and which competitor names appear alongside yours.

The platform's prompt tracking feature reveals which specific queries trigger your brand mentions, pointing to concrete content gaps and opportunities. You learn not just whether you're being mentioned, but in what context and for which use cases.
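The core of prompt tracking can be sketched in a few lines. Assuming you have already collected AI responses for a set of prompts, the hypothetical `track_mentions` function below records whether your brand appears in each answer and which competitors appear alongside it. Prompts where the brand is missing become content-gap candidates. The brand names and responses are placeholders, and the whole-word regex match is a simplification: names containing punctuation, or misspelled mentions, would need extra handling.

```python
import re
from typing import Dict, List


def mentions(text: str, name: str) -> bool:
    """Case-insensitive whole-word match for a brand or competitor name."""
    return re.search(rf"\b{re.escape(name)}\b", text, flags=re.IGNORECASE) is not None


def track_mentions(
    responses: Dict[str, str], brand: str, competitors: List[str]
) -> Dict[str, dict]:
    """For each prompt, report whether the brand appeared and which competitors co-occurred."""
    report = {}
    for prompt, answer in responses.items():
        report[prompt] = {
            "brand_mentioned": mentions(answer, brand),
            "competitors": [c for c in competitors if mentions(answer, c)],
        }
    return report


# Hypothetical AI answers collected for three prompts.
responses = {
    "best crm for startups": "Popular picks include Acme CRM and Globex.",
    "cheap crm for freelancers": "Consider Globex or Initech for low-cost plans.",
    "crm with email automation": "Acme CRM offers built-in email sequences.",
}
report = track_mentions(responses, brand="Acme CRM", competitors=["Globex", "Initech"])

# Prompts where the brand is absent are candidates for new content.
gaps = [prompt for prompt, row in report.items() if not row["brand_mentioned"]]
print(gaps)
```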

Key Features

Real-time monitoring across 6+ AI platforms: Track mentions simultaneously across ChatGPT, Claude, Perplexity, and other major models from a single dashboard.

AI Visibility Score with sentiment analysis: Quantify your brand's AI presence with a comprehensive score that factors in mention frequency, sentiment, and positioning.

Prompt tracking: See exactly which user queries trigger your brand mentions and identify content opportunities based on what people are asking.

Competitive benchmarking: Compare your AI visibility against industry rivals to understand your relative positioning in AI-generated responses.

Automated alerts: Receive notifications when your brand mention patterns change significantly across AI platforms.
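Sight AI's exact scoring formula isn't described here, so the sketch below is a hypothetical composite score showing one way frequency, sentiment, and positioning could be combined. It assumes each AI response has already been labeled with whether the brand appears, a sentiment value in [-1, 1], and the brand's rank among the brands named. The 0.5/0.25/0.25 weights are arbitrary assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical weights; they sum to 1 so the score stays on a 0-100 scale.
WEIGHTS = {"frequency": 0.5, "sentiment": 0.25, "prominence": 0.25}


@dataclass
class Observation:
    mentioned: bool                 # did the brand appear in this AI response?
    sentiment: float = 0.0          # -1.0 (negative) to 1.0 (positive); used only when mentioned
    position: Optional[int] = None  # 1 = first brand named in the answer


def visibility_score(observations: List[Observation]) -> float:
    """Combine mention frequency, sentiment, and prominence into a 0-100 score."""
    hits = [o for o in observations if o.mentioned]
    if not hits:
        return 0.0
    frequency = len(hits) / len(observations)
    # Rescale the average sentiment from [-1, 1] to [0, 1].
    sentiment = (sum(o.sentiment for o in hits) / len(hits) + 1) / 2
    # Being named first counts fully, second counts half, third a third, and so on.
    prominence = sum(1 / o.position for o in hits if o.position) / len(hits)
    return 100 * (
        WEIGHTS["frequency"] * frequency
        + WEIGHTS["sentiment"] * sentiment
        + WEIGHTS["prominence"] * prominence
    )


score = visibility_score([
    Observation(True, sentiment=0.6, position=1),
    Observation(True, sentiment=0.2, position=2),
    Observation(False),
    Observation(False),
])
print(round(score, 2))
```

Tracking this number per platform over time, rather than reading it once, is what makes it useful for spotting shifts.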

Best For

Marketing teams and agencies who need to understand and optimize their brand's presence in AI-generated content. Particularly valuable for companies in competitive industries where AI recommendations directly influence purchase decisions.

Pricing

Contact for pricing; offers tiered plans designed for individual marketers through enterprise agencies managing multiple client brands.

2. Profound

Best for: Enterprise teams requiring comprehensive AI brand monitoring with robust security

Profound is an enterprise AI monitoring platform focused on tracking brand presence and accuracy across large language models.

Where This Tool Shines

Profound positions itself as the enterprise-grade solution for companies with strict compliance requirements and complex organizational structures. The platform emphasizes data security and audit trails, which suits regulated industries like finance and healthcare that need to monitor AI brand representation under tight data governance.

The multi-model comparison view lets you see how different LLMs respond to identical queries about your brand, revealing inconsistencies in how your company is represented across the AI ecosystem. This side-by-side analysis helps identify which models need targeted content optimization efforts.
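As a rough lexical stand-in for a side-by-side comparison, the sketch below measures word overlap (Jaccard similarity) between how different models describe the same brand and flags pairs that diverge. The model labels, example answers, stopword list, and the 0.3 threshold are illustrative assumptions, not Profound's method; embedding-based similarity would catch paraphrases that plain word overlap misses.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple

# Small illustrative stopword list; a real one would be longer.
STOPWORDS = {"a", "an", "and", "the", "is", "for", "of", "to", "with", "it", "its"}


def terms(text: str) -> Set[str]:
    """Lowercased content words in a response."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def inconsistent_pairs(
    answers: Dict[str, str], threshold: float = 0.3
) -> List[Tuple[str, str, float]]:
    """Flag model pairs whose descriptions of the brand share few content words."""
    term_sets = {model: terms(text) for model, text in answers.items()}
    flagged = []
    for m1, m2 in combinations(sorted(term_sets), 2):
        sim = jaccard(term_sets[m1], term_sets[m2])
        if sim < threshold:
            flagged.append((m1, m2, round(sim, 2)))
    return flagged


# Hypothetical answers to the same question, "What is Acme?"
answers = {
    "model_a": "Acme is a CRM for small businesses with email automation.",
    "model_b": "Acme is a CRM for small businesses, known for email automation.",
    "model_c": "Acme is an enterprise analytics suite.",
}
flagged = inconsistent_pairs(answers)
print(flagged)
```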

Key Features

Multi-model response comparison: View how different LLMs answer the same brand-related queries in a unified comparison interface.

Enterprise-grade security: SOC 2 compliance, role-based access controls, and comprehensive audit logging for regulated industries.

Custom prompt library testing: Build and maintain libraries of brand-relevant queries to test consistently across models and over time.

API access: Integrate monitoring data into existing business intelligence tools and workflows through comprehensive API documentation.

Best For

Large enterprises with significant compliance requirements and dedicated teams managing brand reputation across digital channels. Best suited for organizations already using enterprise marketing technology stacks.

Pricing

Enterprise pricing model; contact for customized quote based on organization size and monitoring needs.

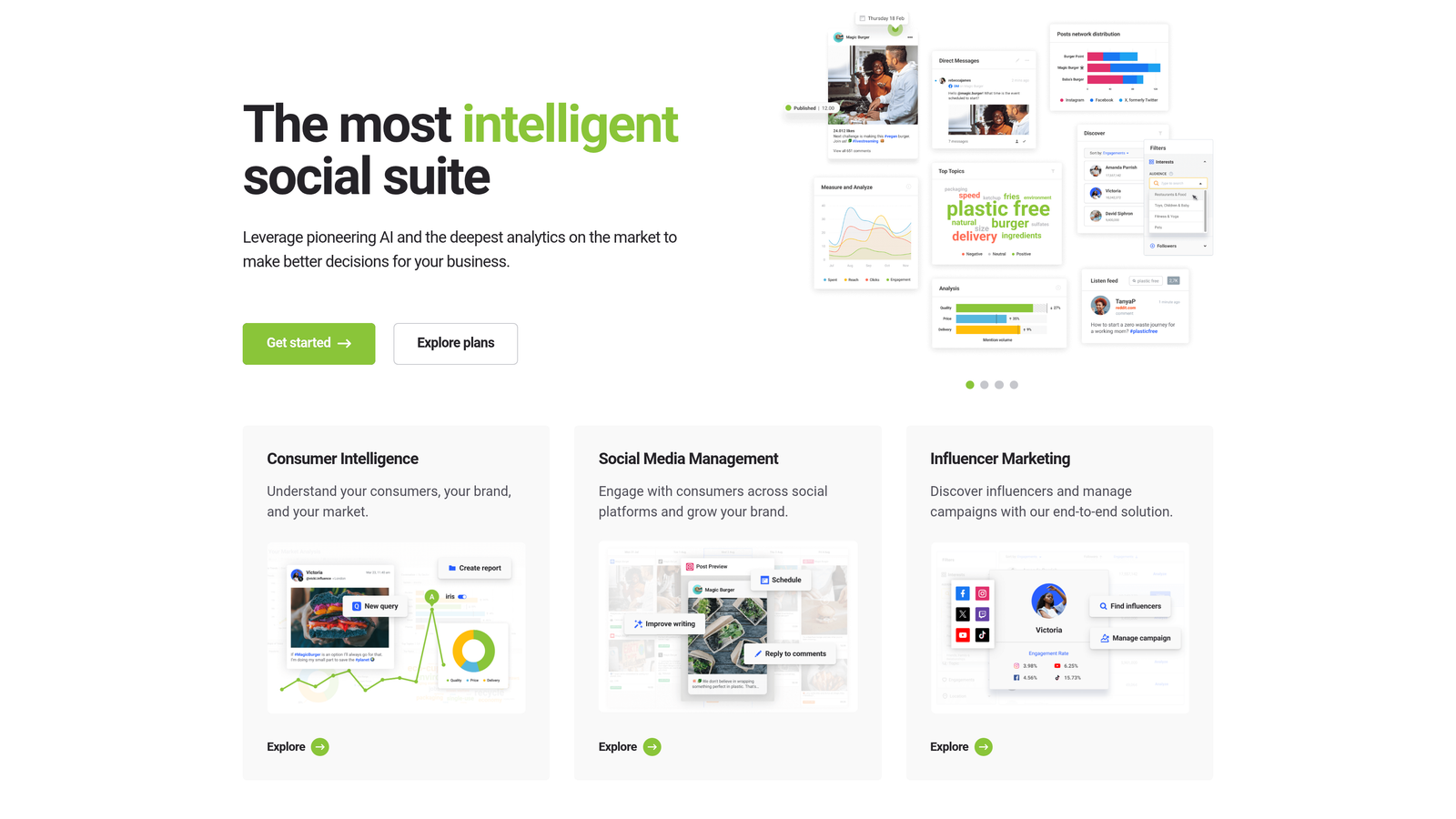

3. Brandwatch AI Monitor

Best for: Teams already using Brandwatch who want unified social and AI monitoring

Brandwatch AI Monitor is an extension of Brandwatch's social listening platform that includes AI response tracking capabilities.

Where This Tool Shines

For organizations already invested in Brandwatch's ecosystem, AI Monitor provides a natural extension of existing social listening workflows. The unified dashboard approach means your team can track brand mentions across social media, news sites, forums, and now AI models without switching between multiple platforms.

The historical trend analysis is most useful when you correlate changes in AI mentions with social media campaigns or PR events. This cross-channel view helps marketing teams check whether shifts in AI responses line up with their broader content efforts.

Key Features

Unified dashboard: Track social media mentions, news coverage, and AI model responses in a single interface with consistent reporting.

Historical trend analysis: View how AI model responses about your brand have evolved over time with detailed historical data.

Sentiment tracking: Apply Brandwatch's proven sentiment analysis capabilities across both social and AI-generated content.

Workflow integration: Leverage existing Brandwatch workflows, alerts, and reporting structures for AI monitoring data.

Best For

Marketing and communications teams already using Brandwatch for social listening who want to expand monitoring to include AI platforms without adopting a separate tool.

Pricing

Custom enterprise pricing; AI monitoring capabilities typically bundled with Brandwatch Consumer Intelligence suite rather than sold standalone.

4. Originality.ai

Best for: Content teams analyzing AI-generated text patterns and authenticity at scale

Originality.ai is an AI content detection platform that can analyze LLM response patterns and content authenticity.

Where This Tool Shines

While primarily known for AI content detection, Originality.ai provides useful insights into how LLMs structure and present information. Content teams use it to understand the patterns and characteristics of AI-generated text, which helps when optimizing content to be more likely to be referenced or cited by AI models.

The bulk analysis capabilities make it practical to process large volumes of AI responses, helping you identify patterns in how different models generate content about your industry or competitors. This pattern recognition can inform your content strategy for improving AI visibility.

Key Features

AI content detection: Identify whether text was generated by AI models with detailed scoring and confidence levels.

Plagiarism checking: Cross-reference content against billions of web pages to identify copied or derivative content.

Bulk content analysis: Process multiple documents simultaneously for efficient large-scale content auditing.

API integration: Automate content checking workflows through API access for development teams.

Best For

Content operations teams, publishers, and agencies who need to analyze large volumes of AI-generated text or verify content authenticity as part of quality assurance processes.

Pricing

Pay-as-you-go model starting at $0.01 per credit; subscription plans available for teams with consistent usage needs.

5. PromptLayer

Best for: Development teams building AI applications who need detailed request logging

PromptLayer is a developer-focused platform for logging, debugging, and analyzing LLM API requests and responses.

Where This Tool Shines

PromptLayer excels at giving technical teams complete visibility into their LLM API usage. Every request and response gets logged with full context, making it straightforward to debug issues, track changes in model behavior, and understand exactly how your application interacts with various LLMs.

The prompt versioning system becomes invaluable as teams iterate on their AI applications. You can track which prompt variations produced better results, roll back to previous versions when needed, and maintain a clear history of how your prompts evolved over time.
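Prompt versioning with rollback can be modeled as an append-only history plus a pointer to the active version. The in-memory `PromptRegistry` below is a hypothetical illustration of that idea, not PromptLayer's SDK; a real system would persist versions and record who changed what and why.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptRegistry:
    """In-memory version history per prompt name (illustrative, not PromptLayer's API)."""
    history: Dict[str, List[str]] = field(default_factory=dict)
    active: Dict[str, int] = field(default_factory=dict)  # name -> active version (1-based)

    def publish(self, name: str, template: str) -> int:
        """Append a new version and make it active; returns its version number."""
        versions = self.history.setdefault(name, [])
        versions.append(template)
        self.active[name] = len(versions)
        return len(versions)

    def get(self, name: str) -> str:
        """Return the currently active template."""
        return self.history[name][self.active[name] - 1]

    def rollback(self, name: str, version: int) -> None:
        """Point the prompt back at an earlier version; history is never deleted."""
        if not 1 <= version <= len(self.history[name]):
            raise ValueError(f"{name} has no version {version}")
        self.active[name] = version


registry = PromptRegistry()
registry.publish("summarize", "Summarize: {text}")
registry.publish("summarize", "Summarize in three bullet points: {text}")
registry.rollback("summarize", 1)  # the v2 output turned out worse
print(registry.get("summarize").format(text="Quarterly report ..."))
```

Because rollback only moves the pointer, version 2 stays in the history and can be restored or compared later.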

Key Features

Request logging and history: Automatically capture every LLM API call with full request and response details for debugging and analysis.

Prompt versioning: Track changes to prompts over time with version control and the ability to compare performance across versions.

Response latency tracking: Monitor API response times to identify performance issues and optimize user experience.

Team collaboration: Share prompts, logs, and insights across development teams with role-based access controls.

Best For

Software development teams building AI-powered applications who need detailed observability into their LLM usage and prompt performance.

Pricing

Free tier available for individual developers; Pro plans start at $29/month for teams with higher usage requirements.

6. Helicone

Best for: Teams focused on LLM cost optimization and performance monitoring

Helicone is an open-source LLM observability platform for monitoring costs, latency, and response quality.

Where This Tool Shines

Helicone's one-line integration makes it remarkably easy to add comprehensive LLM monitoring to existing applications. Development teams appreciate that they can start tracking costs and performance without refactoring code or changing their existing LLM implementation patterns.

Per-request cost tracking becomes critical as AI applications scale. You can identify which features or users generate the highest API costs, enabling data-driven decisions about pricing, rate limiting, and optimization priorities. Response caching can cut costs substantially when identical requests repeat, because a cached answer skips the API call entirely.
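Helicone handles cost tracking and caching at the proxy layer; the sketch below only illustrates the underlying idea in plain Python and is not Helicone's implementation or API. It wraps a hypothetical `call_llm` function, keys a cache on a hash of the model name and prompt, and attributes the cost of each uncached call to a feature label. The per-token prices and the `fake_llm` stand-in are placeholders.

```python
import hashlib
from collections import defaultdict
from typing import Callable, Dict, Tuple

# Placeholder prices in USD per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {"input": 0.0005, "output": 0.0015}

LLMCall = Callable[[str, str], Tuple[str, int, int]]  # (model, prompt) -> (text, tokens_in, tokens_out)


class CostTrackingCache:
    """Caches identical requests and attributes the cost of real calls to features."""

    def __init__(self, call_llm: LLMCall):
        self.call_llm = call_llm
        self.cache: Dict[str, str] = {}
        self.cost_by_feature: Dict[str, float] = defaultdict(float)
        self.cache_hits = 0

    def complete(self, model: str, prompt: str, feature: str) -> str:
        key = hashlib.sha256(f"{model}\n{prompt}".encode()).hexdigest()
        if key in self.cache:
            self.cache_hits += 1  # served locally, so no API cost is recorded
            return self.cache[key]
        text, tokens_in, tokens_out = self.call_llm(model, prompt)
        cost = (tokens_in / 1000) * PRICE_PER_1K["input"] + (tokens_out / 1000) * PRICE_PER_1K["output"]
        self.cost_by_feature[feature] += cost
        self.cache[key] = text
        return text


def fake_llm(model: str, prompt: str) -> Tuple[str, int, int]:
    """Hypothetical stand-in for a real API call."""
    return f"[{model}] reply", 1000, 500


tracker = CostTrackingCache(fake_llm)
tracker.complete("model-x", "Summarize this ticket", feature="support")
tracker.complete("model-x", "Summarize this ticket", feature="support")  # cache hit
tracker.complete("model-x", "Draft a reply", feature="email")
print(dict(tracker.cost_by_feature), tracker.cache_hits)
```

A production cache key would also need to include every parameter that changes the output, such as temperature or the system prompt.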

Key Features

One-line integration: Add comprehensive monitoring to your LLM applications with minimal code changes and setup time.

Cost tracking per request: Monitor API costs at granular levels to identify expensive patterns and optimization opportunities.

Response caching: Cache and reuse LLM responses for repeated requests to reduce API costs and improve response times.

User analytics: Segment usage patterns by user or feature to understand how different parts of your application consume LLM resources.

Best For

Product teams running AI applications in production who need to control costs while maintaining detailed visibility into LLM performance and usage patterns.

Pricing

Free tier includes 100,000 requests per month; Growth plans start at $20/month for higher volume needs.

7. Langfuse

Best for: Engineering teams requiring detailed tracing and self-hosted deployment options

Langfuse is an open-source LLM engineering platform for tracing, evaluating, and monitoring AI application responses.

Where This Tool Shines

Langfuse's trace visualization shows exactly how data flows through complex AI applications. For teams building sophisticated AI systems with multiple LLM calls, retrieval steps, and processing stages, this visibility becomes essential for debugging and optimization.
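Under the hood, a trace is a tree of timed spans. The hypothetical `Tracer` class below records nested spans with Python's `contextlib.contextmanager` and prints them as an indented tree. It illustrates the concept only and is not Langfuse's SDK; the `time.sleep` calls stand in for a retrieval step and an LLM call.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


@dataclass
class Span:
    name: str
    depth: int              # nesting level, 0 = root
    start: float
    end: Optional[float] = None

    @property
    def duration_ms(self) -> float:
        return ((self.end or self.start) - self.start) * 1000


@dataclass
class Tracer:
    """Minimal nested-span recorder (illustrative, not Langfuse's API)."""
    spans: List[Span] = field(default_factory=list)
    _depth: int = 0

    @contextmanager
    def span(self, name: str) -> Iterator[Span]:
        s = Span(name, self._depth, time.perf_counter())
        self.spans.append(s)  # parents are appended before their children
        self._depth += 1
        try:
            yield s
        finally:
            self._depth -= 1
            s.end = time.perf_counter()

    def render(self) -> str:
        return "\n".join(f"{'  ' * s.depth}{s.name}: {s.duration_ms:.1f} ms" for s in self.spans)


tracer = Tracer()
with tracer.span("answer_question"):
    with tracer.span("retrieve_documents"):
        time.sleep(0.01)  # stand-in for a vector search
    with tracer.span("llm_call"):
        time.sleep(0.02)  # stand-in for a model request
print(tracer.render())
```

The `finally` block closes a span even if the wrapped step raises, which is exactly when a trace is most needed.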

The self-hosted deployment option addresses data privacy concerns that prevent many companies from using cloud-based monitoring tools. Organizations in regulated industries or those handling sensitive data can maintain complete control over their monitoring infrastructure while still getting enterprise-grade observability features.

Key Features

Detailed trace visualization: See complete execution paths through your AI application with timing, costs, and data at each step.

Evaluation frameworks: Build custom scoring systems to automatically evaluate LLM response quality against your specific criteria.

Self-hosted deployment: Run Langfuse on your own infrastructure for complete data control and compliance with strict security requirements.

Framework integration: Native support for LangChain, LlamaIndex, and other popular AI development frameworks.

Best For

Engineering teams building complex AI applications who need detailed observability and either prefer open-source tools or require self-hosted deployment for compliance reasons.

Pricing

Free for self-hosted deployments; managed cloud plans start at $59/month for teams preferring hosted infrastructure.

8. Weights & Biases Prompts

Best for: ML teams integrating LLM monitoring into existing experiment tracking workflows

Weights & Biases Prompts is an ML experiment tracking platform with dedicated features for prompt engineering and LLM response analysis.

Where This Tool Shines

For teams already using Weights & Biases for machine learning experiment tracking, the Prompts feature provides a natural extension into LLM observability. You can track prompt experiments with the same rigor you apply to model training runs, comparing response quality across prompt variations side by side.
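Deciding whether one prompt variation really outperforms another is a statistics question. Assuming each response is graded pass/fail and the two groups are independent and reasonably large, a two-proportion z-test is one simple check. The function below is a generic sketch, not a Weights & Biases feature, and the 130/200 versus 100/200 counts are made up.

```python
import math
from typing import Tuple


def two_proportion_z_test(passes_a: int, n_a: int, passes_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two pass rates."""
    p_a, p_b = passes_a / n_a, passes_b / n_b
    pooled = (passes_a + passes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # both groups all-pass or all-fail: no evidence of a difference
    z = (p_a - p_b) / se
    # Two-sided p-value under the normal approximation: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: prompt A passed 130 of 200 graded responses, prompt B passed 100 of 200.
z, p = two_proportion_z_test(130, 200, 100, 200)
print(f"z = {z:.2f}, p = {p:.4f}")
```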

The collaboration features shine in larger ML teams where multiple engineers are iterating on prompts simultaneously. Shared workspaces, commenting, and comparison views help teams learn from each other's experiments and avoid duplicating effort across parallel workstreams.

Key Features

Prompt experiment tracking: Log and compare prompt variations with the same experiment tracking infrastructure used for model training.

Response comparison: View side-by-side comparisons of how different prompts perform across multiple evaluation metrics.

Team collaboration: Share experiments, add comments, and collaborate on prompt optimization within shared team workspaces.

ML workflow integration: Seamlessly combine LLM monitoring with existing model training, evaluation, and deployment workflows.

Best For

Machine learning teams already invested in the Weights & Biases ecosystem who want to apply the same experiment rigor to prompt engineering and LLM applications.

Pricing

Free tier available for individual researchers; Team plans start at $50 per user per month with volume discounts for larger organizations.

9. Pezzo

Best for: Development teams wanting open-source prompt management with version control

Pezzo is a developer-first platform for managing, testing, and monitoring LLM prompts and responses.

Where This Tool Shines

Pezzo treats prompts as first-class code artifacts, bringing software engineering best practices to prompt management. The version control system works like Git for prompts, letting teams track changes, review modifications, and maintain a clear history of how prompts evolved alongside their application code.

The A/B testing capabilities enable data-driven prompt optimization. Instead of guessing which prompt variation performs better, you can run controlled experiments with real user traffic and measure actual performance differences. This scientific approach to prompt engineering helps teams make confident optimization decisions.
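A/B testing prompts with real traffic needs a stable way to assign users to variants. One common approach, sketched below under the assumption that each user has a string ID, hashes the experiment name together with the user ID so the same user always sees the same prompt. The function name, experiment label, and 50/50 weights are hypothetical and not Pezzo's API; the weights are assumed to sum to 1.

```python
import hashlib
from collections import Counter
from typing import List


def assign_variant(user_id: str, experiment: str, variants: List[str], weights: List[float]) -> str:
    """Deterministically map a user to a variant so repeat visits see the same prompt."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # roughly uniform value in [0, 1)
    cumulative = 0.0
    for variant, weight in zip(variants, weights):
        cumulative += weight
        if bucket < cumulative:
            return variant
    return variants[-1]  # guard against floating-point rounding in the weights


variants = ["prompt_v1", "prompt_v2"]
counts = Counter(
    assign_variant(f"user-{i}", "onboarding-copy", variants, [0.5, 0.5]) for i in range(10_000)
)
print(counts)
```

Including the experiment name in the hash means a user's bucket in one experiment does not determine their bucket in the next.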

Key Features

Prompt version control: Track prompt changes over time with full version history, diffs, and the ability to roll back to previous versions.

A/B testing: Run controlled experiments comparing prompt variations with real user traffic to identify optimal approaches.

Response quality monitoring: Track quality metrics over time to detect degradation and ensure consistent LLM performance.

Open-source core: Self-host the complete platform or contribute to the codebase for custom functionality needs.

Best For

Development teams who want to apply software engineering rigor to prompt management and prefer open-source tools with the option for self-hosting.

Pricing

Free open-source tier for self-hosted deployments; managed cloud plans available for teams preferring hosted infrastructure.

Making the Right Choice

The LLM response analysis tool you choose depends on whether you're focused on brand visibility or technical performance. Marketers tracking how AI models represent their brand should prioritize platforms like Sight AI or Brandwatch AI Monitor that emphasize sentiment, competitive positioning, and brand mention tracking.

Development teams building AI applications have different needs. Tools like PromptLayer, Helicone, and Langfuse focus on technical metrics like latency, cost optimization, and debugging capabilities. These platforms help engineering teams maintain performance and control expenses as their AI applications scale.

For teams requiring both perspectives, consider starting with a brand-focused tool for marketing visibility, then adding developer-focused observability as your technical needs grow. The two types of tools can also run side by side: marketers track brand presence while engineers optimize application performance.

The market is evolving rapidly. AI models are becoming more influential in purchase decisions, making brand visibility tracking increasingly critical. At the same time, the technical complexity of AI applications demands sophisticated monitoring and optimization tools.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.