When potential customers ask ChatGPT, Claude, or Perplexity about solutions in your space, does your brand come up? As AI assistants increasingly shape purchasing decisions, understanding how these models discuss your company has become as critical as traditional SEO. Yet most teams have zero visibility into what AI platforms say about them—or worse, whether they're mentioned at all.

This guide covers nine platforms that help you monitor LLM behavior, from tracking brand mentions across AI models to debugging your own LLM deployments. We evaluated each based on monitoring coverage, sentiment analysis capabilities, alert systems, reporting depth, and integration options. Whether you need brand visibility tracking or technical observability for production AI systems, you'll find the right fit here.

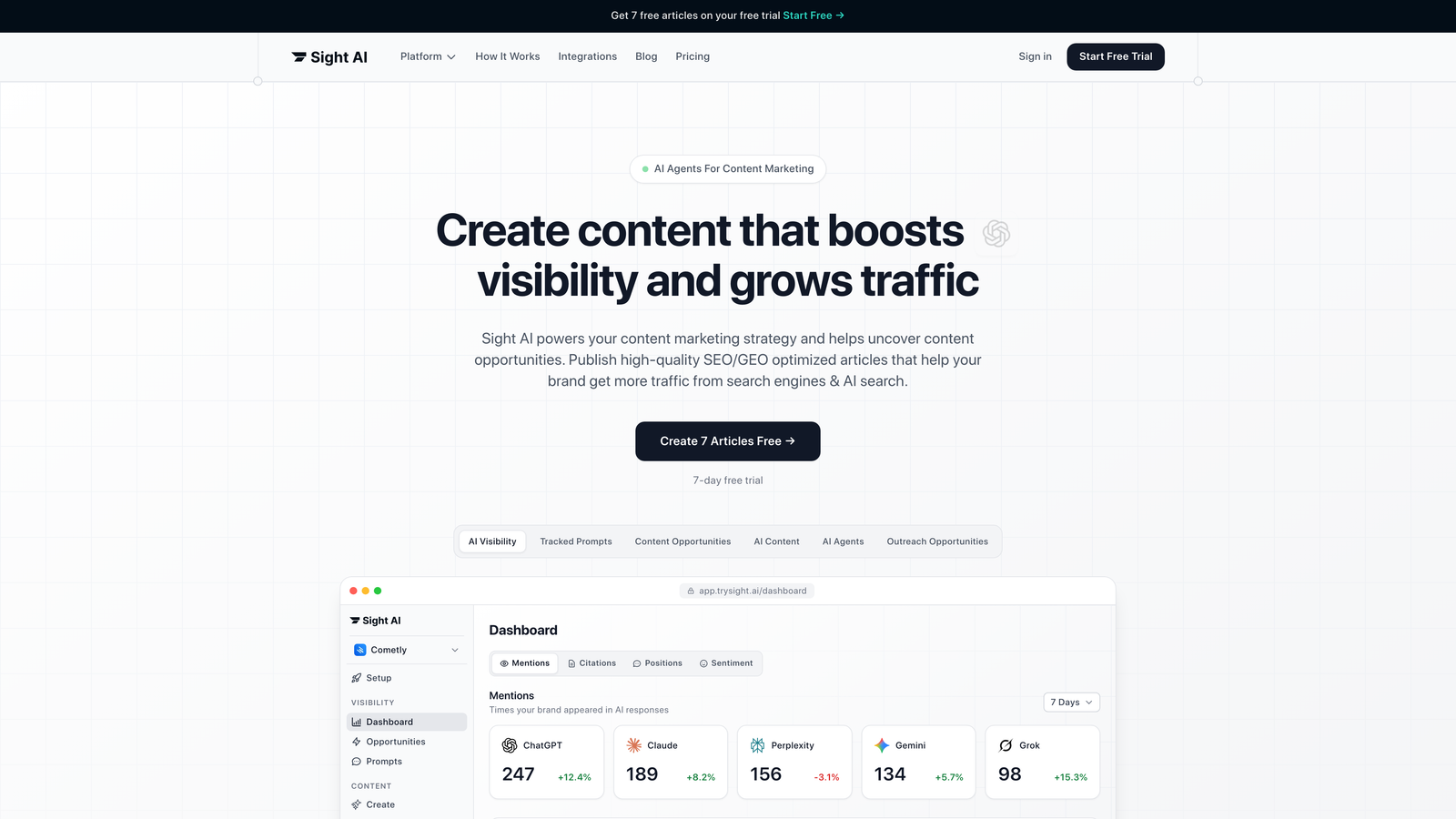

1. Sight AI

Best for: Marketing teams tracking brand mentions and sentiment across major AI platforms

Sight AI monitors how AI models like ChatGPT, Claude, and Perplexity mention and discuss your brand, providing visibility into AI-driven brand perception.

Where This Tool Shines

Sight AI addresses a specific gap that traditional observability tools miss: tracking what public AI models say about your brand. While most monitoring platforms focus on debugging your own LLM deployments, Sight AI monitors the AI platforms your customers actually use.

The platform's AI Visibility Score gives you a single metric to track brand presence trends over time, while prompt tracking reveals exactly which questions trigger your brand mentions. This intelligence helps content teams understand which topics are associated with their brand and where gaps exist.

Key Features

Multi-Platform Monitoring: Tracks brand mentions across ChatGPT, Claude, Perplexity, and three other major AI platforms simultaneously.

AI Visibility Score: Proprietary metric that quantifies your brand's presence across AI models with historical trend analysis.

Sentiment Analysis: Classifies how AI models discuss your brand—positive, neutral, or negative—with context for each mention.

Prompt Tracking: Shows which user queries trigger brand mentions, revealing content opportunities and competitive positioning.

Content Recommendations: Suggests optimization strategies to improve how AI models reference your brand based on mention patterns.
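To make the idea of a visibility metric concrete, here is a minimal sketch of a per-platform mention rate. It is not Sight AI's proprietary AI Visibility Score, whose formula is not described here; the record format, the function name `mention_rate`, the brand name, and the sample responses are all assumptions made up for illustration.

```python
from collections import defaultdict

def mention_rate(records, brand):
    """Return {platform: share of responses that mention `brand`}.

    `records` is a list of (platform, prompt, response) tuples. This shape
    is a hypothetical format, not any vendor's export schema.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    needle = brand.lower()
    for platform, _prompt, response in records:
        totals[platform] += 1
        if needle in response.lower():  # naive case-insensitive substring match
            hits[platform] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}

# Made-up sample data for illustration only.
sample = [
    ("chatgpt", "best crm for startups", "Popular options include Acme CRM and others."),
    ("chatgpt", "crm with email sync", "Several tools support email sync."),
    ("claude", "best crm for startups", "You might consider Acme CRM."),
]
rates = mention_rate(sample, "Acme CRM")
print(rates)
```

A real monitoring tool would likely also handle brand aliases, misspellings, and sentiment, which a plain substring match cannot capture.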

Best For

Marketing teams and agencies focused on organic visibility growth who need to understand and influence how AI assistants present their brand to potential customers. Particularly valuable for companies in competitive spaces where AI recommendations drive purchasing decisions.

Pricing

Contact for pricing. Enterprise plans available with custom monitoring configurations.

2. Langfuse

Best for: Development teams needing open-source LLM observability with self-hosting options

Langfuse provides comprehensive tracing and debugging for LLM applications with full control through self-hosting capabilities.

Where This Tool Shines

Langfuse stands out for teams with strict data governance requirements or budget constraints. The open-source model means you can deploy it internally without sending sensitive LLM interaction data to third-party services—a critical requirement for healthcare, finance, and government applications.

The trace visualization interface makes debugging complex LLM chains intuitive. When a multi-step AI workflow produces unexpected results, you can drill into each component to identify where things went wrong, examining inputs, outputs, and latency at every stage.

Key Features

Self-Hosting Option: Deploy on your own infrastructure for complete data control and compliance with internal security policies.

Detailed Trace Visualization: Interactive timeline view showing every step in LLM request chains with expandable detail levels.

Prompt Management: Version control system for prompts with comparison tools and rollback capabilities.

Cost Tracking: Per-request token usage and cost calculation across different models and providers.

Framework Integration: Native support for LangChain, LlamaIndex, and other popular LLM frameworks with minimal setup.
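Per-request cost tracking comes down to simple arithmetic on token counts. The sketch below shows that calculation in isolation; it does not use the Langfuse SDK, and the model names and per-token prices are hypothetical placeholders rather than real provider rates.

```python
# Hypothetical prices in USD per 1,000 tokens. Real prices vary by provider
# and change over time, so load them from your own configuration.
PRICES_PER_1K = {
    "model-small": {"input": 0.0005, "output": 0.0015},
    "model-large": {"input": 0.0100, "output": 0.0300},
}

def request_cost(model, input_tokens, output_tokens, prices=PRICES_PER_1K):
    """Return the cost in USD of one request, given its token counts."""
    rate = prices[model]
    input_cost = (input_tokens / 1000) * rate["input"]
    output_cost = (output_tokens / 1000) * rate["output"]
    return input_cost + output_cost

cost = request_cost("model-large", input_tokens=2000, output_tokens=500)
print(f"${cost:.4f}")
```

Summing these per-request figures by model, user, or feature is what produces the cost breakdowns an observability dashboard shows.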

Best For

Engineering teams building production LLM applications who need granular debugging capabilities and prefer open-source solutions. Ideal for organizations with data residency requirements or those wanting to avoid vendor lock-in.

Pricing

Free tier includes core features with reasonable usage limits. Paid plans start at $59/month for increased capacity and priority support.

3. Arize AI

Best for: Enterprise ML teams requiring comprehensive model performance monitoring and drift detection

Arize AI delivers enterprise-grade observability for production AI systems with advanced drift detection and embeddings analysis.

Where This Tool Shines

Arize excels at catching subtle model degradation before it impacts users. The automated drift detection continuously compares current model behavior against baseline performance, alerting teams when prediction quality declines or input distributions shift unexpectedly.
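One common way to quantify a shift in input distributions is the Population Stability Index (PSI), which compares binned shares of a baseline sample against a current sample. The sketch below is a generic illustration of that technique, not Arize's implementation; the bin count and the small floor used for empty bins are assumptions.

```python
import math

def psi(baseline, current, bins=10):
    """Population Stability Index between two numeric samples.

    Bin edges are derived from the baseline range; values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))

baseline = [float(v) for v in range(100)]
shifted = [v + 30 for v in baseline]
print(psi(baseline, baseline), psi(baseline, shifted))
```

An alerting rule would compare the PSI against a configured threshold; rule-of-thumb cutoffs somewhere between 0.1 and 0.25 are often cited, but the right sensitivity depends on the feature and the application.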

The embeddings visualization tools help teams understand how their models cluster and categorize data. When a model starts misclassifying certain inputs, you can visualize where those examples fall in embedding space and identify systematic issues rather than isolated errors.

Key Features

Automated Drift Detection: Monitors data distribution changes and model performance degradation with configurable sensitivity thresholds.

Embeddings Analysis: UMAP and t-SNE visualizations for understanding model representations and identifying clustering issues.

Root Cause Analysis: Automated tools that correlate performance drops with specific feature changes or data segments.

Enterprise Security: SOC 2 Type II compliance, SSO integration, and granular access controls for regulated industries.

Multi-Model Dashboards: Compare performance across different model versions or A/B test variants in unified views.

Best For

Enterprise machine learning teams managing multiple production models who need sophisticated monitoring beyond basic metrics. Companies in regulated industries appreciate the compliance certifications and security features.

Pricing

Free tier available for evaluation. Enterprise pricing based on prediction volume and features—contact sales for quotes.

4. Weights & Biases

Best for: ML teams needing experiment tracking and collaborative model development workflows

Weights & Biases combines experiment management, model versioning, and team collaboration for end-to-end ML lifecycle management.

Where This Tool Shines

W&B transforms chaotic experiment tracking into organized knowledge. Teams running dozens of training runs can compare results across any dimension—hyperparameters, datasets, model architectures—and identify winning configurations without maintaining spreadsheets or custom tracking code.

The collaborative workspace features make it easy for distributed teams to share findings. When a researcher discovers a promising approach, they can share interactive reports with visualizations and metrics rather than static screenshots or lengthy documentation.

Key Features

Experiment Tracking: Automatic logging of metrics, hyperparameters, and artifacts with minimal code changes.

Model Registry: Centralized versioning system with promotion workflows from development through production stages.

Team Workspaces: Shared dashboards and reports with commenting and annotation for asynchronous collaboration.

Hyperparameter Optimization: Automated sweep configurations with Bayesian optimization and early stopping.

Framework Integrations: First-class support for PyTorch, TensorFlow, Hugging Face, and 50+ ML frameworks.

Best For

Research teams and ML organizations where experiment reproducibility and knowledge sharing are critical. Particularly valuable for teams transitioning from academic research to production systems.

Pricing

Free for individual researchers and academics. Team plans start at $50 per user per month with volume discounts available.

5. Helicone

Best for: Startups needing lightweight LLM monitoring with minimal implementation overhead

Helicone offers straightforward LLM monitoring through a proxy approach that requires just one line of code to implement.

Where This Tool Shines

Helicone's simplicity is its superpower. You change your OpenAI base URL to point through Helicone's proxy and add an authentication header, and you get request logging, cost tracking, and caching without refactoring your application code or adding complex instrumentation.
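The proxy pattern is easy to see in code: the request stays the same and only the base URL (plus any header the proxy needs) changes. The sketch below builds requests without sending them. The hostnames, the `X-Proxy-Auth` header name, and the helper `build_chat_request` are hypothetical placeholders, so check Helicone's documentation for the actual proxy URL and header.

```python
import json
import urllib.request

def build_chat_request(base_url, api_key, payload, extra_headers=None):
    """Build (but do not send) a chat completion request against `base_url`."""
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    headers.update(extra_headers or {})
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )

payload = {"model": "example-model", "messages": [{"role": "user", "content": "Hi"}]}

# Direct call: the provider's own base URL (placeholder host).
direct = build_chat_request("https://api.provider.example/v1", "PROVIDER_KEY", payload)

# Proxied call: same code path, different base URL plus a proxy auth header.
proxied = build_chat_request(
    "https://proxy.example/v1",
    "PROVIDER_KEY",
    payload,
    extra_headers={"X-Proxy-Auth": "Bearer PROXY_KEY"},
)
print(direct.full_url)
print(proxied.full_url)
```

Because the request body is identical in both cases, the proxy can log, meter, and cache traffic without the application knowing anything about it.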

The request caching feature provides immediate ROI by reducing redundant API calls. When multiple users send the same request, Helicone serves the cached response instead of calling the LLM API again, often cutting costs by 30-50% for applications with repetitive queries.
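The caching idea can be sketched as a small time-to-live (TTL) cache keyed on the request contents. This is a generic illustration, not Helicone's implementation; the class `TTLCache`, the exact-match hashing strategy, and the stand-in `fake_llm` function are assumptions.

```python
import hashlib
import json
import time

class TTLCache:
    """Cache responses for identical requests until `ttl_seconds` elapse."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (stored_at, response)

    @staticmethod
    def key_for(request):
        # Serialize with sorted keys so equal requests hash identically.
        blob = json.dumps(request, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()

    def get_or_call(self, request, call):
        """Return (response, was_cache_hit)."""
        key = self.key_for(request)
        entry = self._store.get(key)
        now = self.clock()
        if entry is not None and now - entry[0] < self.ttl:
            return entry[1], True
        response = call(request)
        self._store[key] = (now, response)
        return response, False

calls = []

def fake_llm(request):
    # Stand-in for a real (expensive) LLM API call.
    calls.append(request)
    return f"answer #{len(calls)}"

cache = TTLCache(ttl_seconds=60)
req = {"model": "example-model", "prompt": "What is observability?"}
first, hit1 = cache.get_or_call(req, fake_llm)
second, hit2 = cache.get_or_call(req, fake_llm)
print(first, hit1, second, hit2)
```

The second call returns the stored answer without invoking `fake_llm` again, which is where the cost saving comes from. A shorter TTL trades savings for fresher responses.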

Key Features

One-Line Implementation: Start monitoring by changing your API base URL and adding an authentication header, with no SDK installation or code refactoring required.

Real-Time Cost Tracking: Dashboard showing token usage and costs broken down by model, user, or custom tags.

Request Caching: Automatic response caching with configurable TTL to reduce redundant API calls and costs.

Rate Limiting: User-level or API-key-level rate limits to prevent abuse and control spending.

Custom Properties: Tag requests with user IDs, session data, or business context for filtered analysis.

Best For

Small teams and startups building LLM features who need immediate visibility without engineering overhead. Perfect for validating product-market fit before investing in complex observability infrastructure.

Pricing

Free tier covers up to 100,000 requests monthly. Growth plans start at $20/month for higher volumes and advanced features.

6. Datadog LLM Observability

Best for: Enterprises with existing Datadog infrastructure wanting unified monitoring across all systems

Datadog LLM Observability integrates LLM monitoring into Datadog's comprehensive observability platform for centralized visibility.

Where This Tool Shines

For organizations already using Datadog for infrastructure and application monitoring, adding LLM observability creates a unified view of the entire stack. You can correlate LLM performance issues with database latency, API errors, or infrastructure problems in the same interface.

The enterprise alerting capabilities leverage Datadog's mature notification system. Complex alert rules can trigger based on LLM-specific metrics combined with traditional APM data, enabling sophisticated incident response workflows that many standalone LLM tools can't match.

Key Features

Unified Monitoring: Single platform for infrastructure, applications, and LLM performance with correlated dashboards.

Enterprise Alerting: Sophisticated alert rules combining LLM metrics with APM data, with PagerDuty and Slack integrations.

Token Analytics: Detailed cost analysis with forecasting and budget alerts based on usage patterns.

Latency Tracking: P50, P95, and P99 latency metrics for LLM requests with historical trending.

Compliance Features: Audit logs, data retention policies, and access controls meeting SOC 2 and HIPAA requirements.
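For readers unfamiliar with P50, P95, and P99, the sketch below computes them from raw latency samples using the nearest-rank method. It is a generic illustration and not how Datadog aggregates metrics internally; the function name and sample data are assumptions.

```python
import math

def percentile(values, p):
    """Nearest-rank percentile: the smallest value with at least p% of
    the samples at or below it. `p` is an integer from 1 to 100."""
    if not values:
        raise ValueError("values must be non-empty")
    ordered = sorted(values)
    # Multiply before dividing to avoid floating-point surprises in ceil().
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]

# Made-up latencies in milliseconds: 1, 2, ..., 100.
latencies_ms = list(range(1, 101))
summary = {f"p{p}": percentile(latencies_ms, p) for p in (50, 95, 99)}
print(summary)
```

P50 describes the typical request, while P95 and P99 expose the slow tail that averages hide, which is why alerting is usually set on the higher percentiles.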

Best For

Large enterprises with existing Datadog deployments who want to extend their observability practice to LLM applications. The consolidated billing and unified interface reduce tooling complexity.

Pricing

Usage-based pricing integrated with existing Datadog contracts. Contact sales for LLM-specific pricing details and volume discounts.

7. LangSmith

Best for: Developers building with LangChain who need native debugging and testing tools

LangSmith provides deep integration with the LangChain ecosystem for debugging, testing, and monitoring LangChain applications.

Where This Tool Shines

LangSmith understands LangChain's abstractions natively, making debugging feel natural rather than forced. When you're troubleshooting a complex chain with multiple retrievers, agents, and tools, LangSmith displays each component's behavior in context rather than as generic request logs.

The prompt playground lets you iterate on prompts without deploying code. You can test variations against saved datasets, compare outputs side-by-side, and measure quality improvements before pushing changes to production—accelerating the prompt engineering cycle significantly.

Key Features

LangChain Integration: Near-zero-configuration monitoring for LangChain applications, with automatic trace capture once tracing is enabled through environment variables.

Prompt Playground: Interactive testing environment for experimenting with prompts against real or synthetic data.

Dataset Management: Store test cases and evaluation datasets with version control for regression testing.

Trace Visualization: Hierarchical view of chain execution showing agent decisions, tool calls, and retrieval results.

Feedback Collection: Built-in tools for capturing user feedback on responses to improve model performance.

Best For

Development teams standardized on LangChain who want purpose-built tooling rather than generic observability platforms. The tight integration reduces setup time and provides LangChain-specific insights.

Pricing

Free tier includes 5,000 traces monthly. Plus plans start at $39/month for increased capacity and team collaboration features.

8. Galileo

Best for: Teams prioritizing response quality and hallucination detection in production LLMs

Galileo specializes in evaluating LLM output quality with hallucination detection and accuracy analysis.

Where This Tool Shines

Galileo tackles the hardest problem in LLM deployment: ensuring responses are factually accurate. The hallucination detection system assigns confidence scores to model outputs, flagging statements that might be fabricated or inconsistent with source material.

The automated evaluation pipelines let teams establish quality benchmarks and catch regressions. When you update a model or change a prompt, Galileo runs your test suite and reports whether response quality improved or degraded across multiple dimensions—factuality, relevance, coherence.

Key Features

Hallucination Detection: Proprietary scoring system that identifies potentially fabricated or unsupported statements in model outputs.

Quality Metrics: Multi-dimensional evaluation covering factuality, relevance, coherence, and task-specific criteria.

Automated Evaluation: CI/CD integration for running quality checks on every model or prompt change.

Data Quality Analysis: Identifies problematic training examples or retrieval results affecting model performance.

Fine-Tuning Guidance: Recommendations for improving model behavior based on error pattern analysis.

Best For

Organizations deploying LLMs in high-stakes domains like healthcare, legal, or financial services where accuracy is non-negotiable, and teams that need to demonstrate quality assurance for regulatory compliance.

Pricing

Contact for pricing. Enterprise plans include custom evaluation criteria and dedicated support.

9. PromptLayer

Best for: Teams needing collaborative prompt management with version control and A/B testing

PromptLayer focuses on prompt lifecycle management with versioning, testing, and team collaboration features.

Where This Tool Shines

PromptLayer treats prompts as first-class code artifacts deserving proper version control. When multiple team members iterate on prompts, you can track who changed what, compare versions, and roll back problematic updates—preventing the chaos of prompts scattered across Slack messages and Google Docs.

The A/B testing framework makes prompt optimization data-driven. You can run controlled experiments comparing prompt variations, check whether the measured differences are statistically significant, and confidently promote winning versions to production.
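As an illustration of what a significance check on a prompt A/B test can look like, here is a minimal two-proportion z-test. It is a generic statistical sketch, not PromptLayer's analysis method; the "success" counts (for example, thumbs-up ratings per prompt variant) are made-up numbers.

```python
import math

def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference in success rates."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / se
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical results: prompt A got 120/1000 positive ratings, prompt B 160/1000.
z, p = two_proportion_z_test(120, 1000, 160, 1000)
print(f"z={z:.2f}, p={p:.4f}")
```

A p-value below a pre-chosen threshold such as 0.05 suggests the difference is unlikely to be noise. The test assumes independent samples and reasonably large counts, so small experiments call for more caution.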

Key Features

Version Control: Git-like versioning for prompts with diff views, branching, and rollback capabilities.

Request Logging: Searchable history of all LLM requests with filtering by prompt version, user, or custom tags.

A/B Testing: Built-in experimentation framework for comparing prompt variations with statistical analysis.

Team Collaboration: Shared workspace where team members can review, comment on, and approve prompt changes.

Simple Integration: Lightweight SDK that wraps existing OpenAI or Anthropic calls with minimal code changes.

Best For

Product teams where non-technical stakeholders need to collaborate on prompt development. The visual interface and approval workflows make prompt management accessible beyond just engineers.

Pricing

Free tier supports individual users with basic features. Pro plans start at $25/month for team collaboration and unlimited history.

Making the Right Choice

Your monitoring needs depend entirely on what you're trying to track. For brand visibility—understanding how AI models like ChatGPT and Claude discuss your company—Sight AI provides the specific intelligence traditional observability tools miss. Marketing teams focused on organic growth through AI channels will find the prompt tracking and sentiment analysis invaluable.

Developer teams building their own LLM applications have different requirements. Langfuse and LangSmith excel at debugging and tracing, with Langfuse offering open-source flexibility and LangSmith providing LangChain-native integration. Helicone and PromptLayer serve budget-conscious startups needing quick wins without complex setup.

Enterprise organizations typically choose based on existing infrastructure. Teams already using Datadog benefit from unified monitoring, while those prioritizing response quality should evaluate Galileo's hallucination detection. Arize and Weights & Biases fit organizations with mature ML operations needing comprehensive experiment tracking and model governance.

The distinction between monitoring what public LLMs say about you versus monitoring your own LLM deployments is fundamental. Most tools in this list address the latter—helping you debug and optimize AI applications you've built. Sight AI uniquely addresses the former, giving visibility into how the AI platforms your customers use present your brand.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.