As large language models become central to business operations—from customer support chatbots to content generation pipelines—understanding how these models perform is no longer optional. LLM analytics platforms help teams monitor response quality, track token usage, measure latency, and identify when AI outputs mention (or ignore) specific brands. Whether you're optimizing costs, debugging hallucinations, or ensuring your brand appears correctly across AI-generated responses, the right analytics platform makes the difference between flying blind and making data-driven decisions. Here are the top tools that stand out for different use cases in 2026.

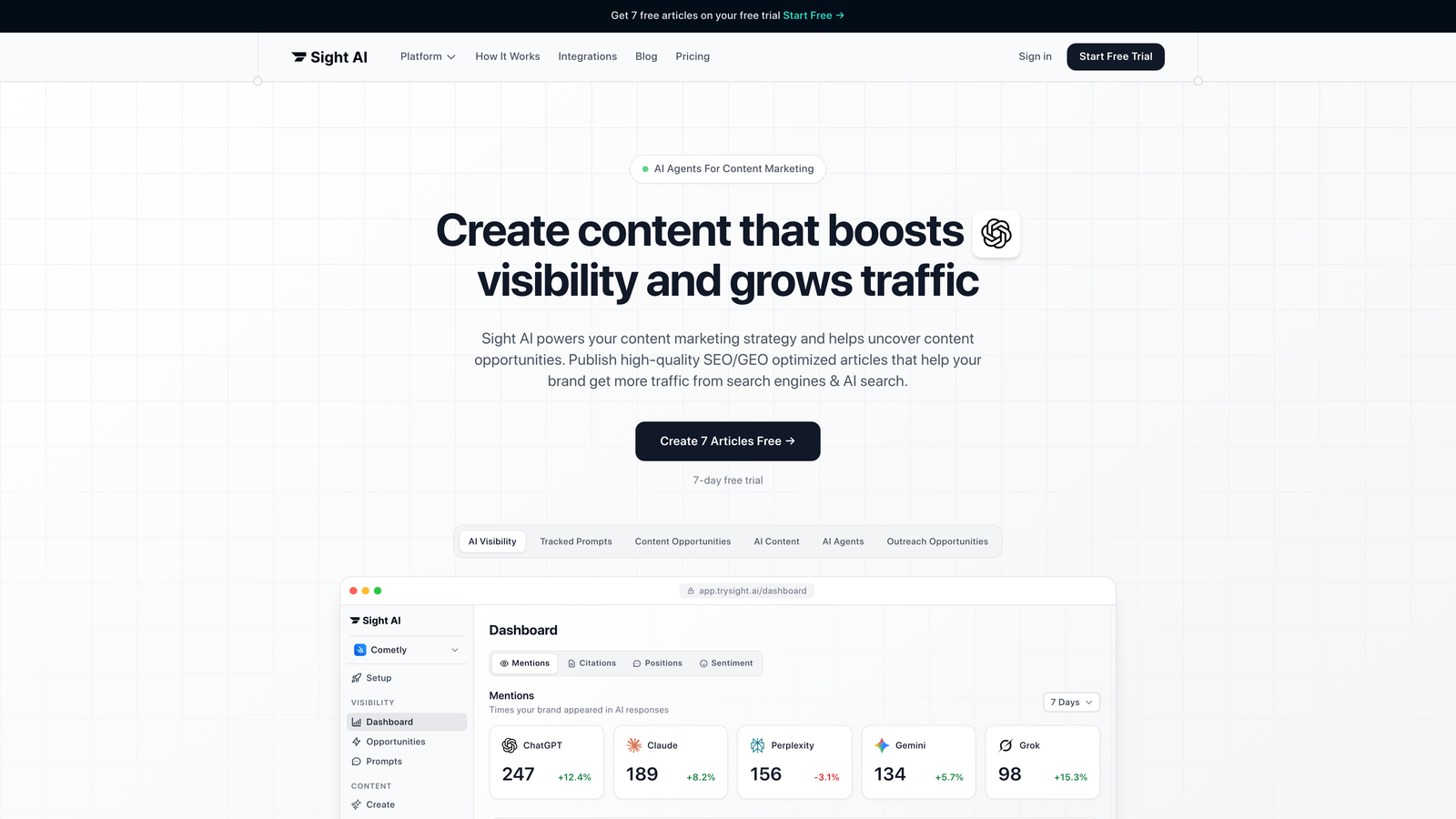

1. Sight AI

Best for: Marketing teams tracking brand visibility across AI platforms

Sight AI is an AI visibility platform that monitors how major language models mention and represent your brand across ChatGPT, Claude, Perplexity, and other AI platforms.

Where This Tool Shines

Sight AI addresses a problem most analytics platforms miss entirely: understanding how AI models talk about your brand. As AI becomes a primary discovery surface alongside traditional search, knowing whether ChatGPT mentions your company when users ask relevant questions matters as much as your Google rankings.

The platform provides an AI Visibility Score that quantifies your brand presence across multiple AI platforms, giving you a single metric to track over time. This makes it particularly valuable for marketing teams who need to report on AI visibility the same way they report on SEO performance.

Key Features

AI Visibility Score: Quantifies brand presence across 6+ AI platforms with historical tracking.

Sentiment Analysis: Identifies whether AI mentions are positive, neutral, or negative.

Prompt Tracking: Shows exactly what user queries trigger mentions of your brand.

Competitor Monitoring: Tracks when competitors appear in responses where your brand should be mentioned.

Content Recommendations: Suggests content strategies to improve AI visibility based on gaps identified.
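To make the idea of a visibility score concrete, here is a minimal sketch. It assumes a hypothetical scoring rule: the share of sampled AI responses on each platform that mention the brand. Sight AI's actual scoring method is not described in this article, and the function names, platform keys, brand names, and sample responses below are all illustrative.

```python
import re


def mentions(brand: str, text: str) -> bool:
    """Return True if `brand` appears in `text` as a whole word (case-insensitive)."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def visibility_score(brand: str, responses: dict[str, list[str]]) -> dict[str, float]:
    """Hypothetical score: per-platform share of responses mentioning the brand (0-100)."""
    scores = {}
    for platform, texts in responses.items():
        if not texts:
            scores[platform] = 0.0
            continue
        hits = sum(mentions(brand, t) for t in texts)
        scores[platform] = round(100 * hits / len(texts), 1)
    return scores


# Illustrative sample of AI responses collected for the same set of user queries.
sampled = {
    "chatgpt": ["Acme and Globex are popular choices.", "Try Globex for this."],
    "claude": ["Acme is a solid option.", "Acme or Initech would work."],
}
scores = visibility_score("Acme", sampled)
print(scores)
```

Running the same calculation for a competitor's name over the same responses gives a rough view of where a rival appears and your brand does not.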

Best For

Marketing teams and agencies focused on organic traffic growth through AI optimization. Particularly useful for SaaS companies, digital agencies, and brands investing in content marketing who need to understand and improve how AI models represent them to users.

Pricing

Contact for pricing. Offers tiered plans designed for different team sizes, from individual marketers to enterprise marketing departments.

2. Langfuse

Best for: Engineering teams needing open-source LLM observability with full control

Langfuse is an open-source LLM engineering platform for tracing, prompt management, and evaluation of language model applications.

Where This Tool Shines

Langfuse stands out for teams that value transparency and control. Being open-source means you can self-host the entire platform, keeping sensitive LLM interaction data within your infrastructure. This matters for companies handling proprietary information or operating in regulated industries.

The trace-level observability goes deeper than most alternatives, showing you exactly what happens at each step of complex LLM chains. When debugging why your chatbot gave a strange answer, you can see every API call, every prompt transformation, and every intermediate result.
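As an illustration of what step-level tracing captures, the sketch below records one span per step of a two-step chain. It is a generic example and does not use the Langfuse SDK; `Trace`, `Span`, and `fake_llm` are hypothetical names, and the model call is a stand-in.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    """One recorded step: its name, input, output, and duration."""
    name: str
    input_text: str
    output: str = ""
    duration_ms: float = 0.0


@dataclass
class Trace:
    """Collects spans in the order the steps finish."""
    spans: list[Span] = field(default_factory=list)

    @contextmanager
    def span(self, name: str, input_text: str):
        record = Span(name=name, input_text=input_text)
        start = time.perf_counter()
        try:
            yield record
        finally:
            # Record the duration even if the step raised an exception.
            record.duration_ms = (time.perf_counter() - start) * 1000
            self.spans.append(record)


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call.
    return f"answer to: {prompt}"


trace = Trace()
question = "What is our refund policy?"
with trace.span("build_prompt", question) as s:
    s.output = f"Answer briefly: {question}"
    prompt = s.output
with trace.span("llm_call", prompt) as s:
    s.output = fake_llm(prompt)

for s in trace.spans:
    print(f"{s.name}: {s.duration_ms:.3f} ms -> {s.output}")
```

With every intermediate input and output stored, a strange final answer can be traced back to the step that produced it.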

Key Features

Detailed Tracing: Complete visibility into every LLM call with input/output logging and latency tracking.

Prompt Versioning: Manage and compare different prompt versions with A/B testing support.

Deployment Flexibility: Choose between self-hosted or managed cloud deployment based on your needs.

Provider Integration: Works with OpenAI, Anthropic, Cohere, and other major LLM providers.

Cost Analytics: Tracks spending per trace, user, or custom dimension.
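Cost tracking by dimension comes down to multiplying token counts by a price and grouping the results. The sketch below shows that arithmetic under stated assumptions: the prices in `PRICE_PER_1K` are made up, `model-a` is a placeholder model name, and the record layout is hypothetical rather than any platform's schema.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider and model.
PRICE_PER_1K = {"model-a": {"input": 0.5, "output": 1.5}}


def call_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost of one call: input and output tokens are priced separately."""
    price = PRICE_PER_1K[model]
    return (input_tokens / 1000) * price["input"] + (output_tokens / 1000) * price["output"]


def cost_by(records: list[dict], dimension: str) -> dict[str, float]:
    """Sum call costs grouped by any field present on the records (user, trace, ...)."""
    totals = defaultdict(float)
    for r in records:
        totals[r[dimension]] += call_cost(r["model"], r["input_tokens"], r["output_tokens"])
    return dict(totals)


records = [
    {"user": "alice", "model": "model-a", "input_tokens": 1000, "output_tokens": 2000},
    {"user": "bob", "model": "model-a", "input_tokens": 2000, "output_tokens": 0},
    {"user": "alice", "model": "model-a", "input_tokens": 0, "output_tokens": 1000},
]
per_user = cost_by(records, "user")
print(per_user)
```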

Best For

Development teams building production LLM applications who want complete control over their observability stack. Ideal for companies with data sovereignty requirements or teams comfortable managing open-source infrastructure.

Pricing

Free tier available for individual developers. Cloud plans start at $59/month for teams. Self-hosted deployment is free with no usage limits.

3. Helicone

Best for: Developers prioritizing simple integration and cost visibility

Helicone is a developer-focused LLM observability platform emphasizing simple integration and cost visibility for OpenAI and other providers.

Where This Tool Shines

Helicone's one-line integration approach means you can start monitoring LLM usage in minutes rather than days. Instead of instrumenting your codebase, you route requests through Helicone's proxy, which captures everything automatically. This makes it perfect for teams that want observability without significant engineering investment.

The request caching feature is particularly clever. By automatically caching identical prompts, Helicone can reduce your API costs by 30-50% for applications with repeated queries. The actual saving depends on how often the same prompt recurs, but for repetitive workloads it can be enough to pay for the platform itself.
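The caching idea can be sketched in a few lines: hash the model name and prompt, and return the stored response when the same pair is seen again. This is a generic in-memory illustration, not Helicone's implementation; `PromptCache` and `fake_model` are hypothetical names.

```python
import hashlib
import json


class PromptCache:
    """Caches responses keyed by a hash of (model, prompt)."""

    def __init__(self, call_model):
        self._call_model = call_model  # function that performs the real (billable) call
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, prompt)
        self._store[key] = response
        return response


billable_calls = []


def fake_model(model: str, prompt: str) -> str:
    # Stand-in for a paid API call; records each invocation.
    billable_calls.append(prompt)
    return f"[{model}] reply to: {prompt}"


cache = PromptCache(fake_model)
first = cache.complete("model-a", "What are your opening hours?")
second = cache.complete("model-a", "What are your opening hours?")  # served from cache
third = cache.complete("model-a", "Where are you located?")
print(cache.hits, cache.misses, len(billable_calls))
```

Only exact repeats hit the cache here, which is why the benefit is largest for applications such as FAQ bots where users send the same questions many times.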

Key Features

Proxy Integration: Add observability with a single URL change in your API configuration.

Cost Dashboards: Real-time tracking of spending across models and users.

Request Caching: Automatic caching of duplicate requests to reduce API costs.

User-Level Tracking: Monitor usage and set rate limits for individual users or API keys.

Prompt Management: Version and template prompts directly within the platform.

Best For

Development teams that want quick setup and immediate value without complex instrumentation. Particularly useful for startups and small teams where engineering time is precious and API costs are a concern.

Pricing

Free up to 100,000 requests per month. Pro plan starts at $20/month with higher limits and additional features.

4. Weights & Biases (W&B Prompts)

Best for: ML teams extending experiment tracking to LLM workflows

Weights & Biases is an ML experiment tracking platform with dedicated LLM features for prompt engineering, evaluation, and production monitoring.

Where This Tool Shines

If your team already uses W&B for model training, adding LLM observability feels natural. The platform treats prompts like hyperparameters and LLM outputs like model predictions, bringing the same systematic approach to prompt engineering that data scientists apply to traditional ML.

The experiment tracking integration is particularly powerful. You can run 50 variations of a prompt, track results across different models, and visualize performance trends—all within the same interface you use for training neural networks.

Key Features

Prompt Versioning: Track every prompt variation with full experiment metadata and results.

LLM Trace Visualization: See complete chains of LLM calls with timing and cost data.

Training Integration: Combine LLM monitoring with traditional model training workflows.

Team Collaboration: Share experiments, prompts, and results across your organization.

Evaluation Pipelines: Automate testing of prompts against benchmark datasets.

Best For

ML engineering teams already invested in the W&B ecosystem. Best suited for organizations treating LLM development as an extension of their existing machine learning workflows rather than a separate discipline.

Pricing

Free for individual researchers and students. Team plans start at $50 per user per month with enterprise options available.

5. Arize AI

Best for: Enterprise teams needing comprehensive ML and LLM observability

Arize AI is an enterprise ML observability platform with comprehensive LLM monitoring, evaluation, and troubleshooting capabilities.

Where This Tool Shines

Arize brings enterprise-grade ML observability to LLM applications. The platform excels at identifying subtle degradation in model performance over time—critical for production systems where small quality drops compound into major issues.

The embedding drift detection is particularly sophisticated. By monitoring how the embeddings of prompts and responses shift over time, Arize can alert you to changes in user behavior or data distribution before they noticeably affect application performance. That early warning lets you act on a problem while it is still small instead of reacting after users have noticed it.
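One simple way to picture embedding drift is to compare the average embedding of a baseline window with that of a recent window. The sketch below uses cosine distance between the two centroids; this is an assumed, simplified measure chosen for illustration, not Arize's algorithm, and the two-dimensional vectors and `THRESHOLD` value are made up.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - cosine similarity; 0 means same direction, larger means more different."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def drift(baseline: list[list[float]], current: list[list[float]]) -> float:
    """Drift score: distance between the baseline and current centroids."""
    return cosine_distance(centroid(baseline), centroid(current))


THRESHOLD = 0.2  # hypothetical alert threshold; tune on your own data

baseline = [[1.0, 0.0], [0.9, 0.1]]
similar_week = [[1.0, 0.05], [0.95, 0.0]]
shifted_week = [[0.0, 1.0], [0.1, 0.9]]

for label, window in [("similar", similar_week), ("shifted", shifted_week)]:
    score = drift(baseline, window)
    print(label, round(score, 3), "ALERT" if score > THRESHOLD else "ok")
```

Real embeddings have hundreds or thousands of dimensions, and production systems typically compare full distributions rather than centroids alone, but the alerting logic follows the same pattern.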

Key Features

LLM Evaluation Metrics: Purpose-built metrics and guardrails for language model outputs.

Embedding Drift Detection: Monitors changes in embedding distributions to catch performance issues early.

Enterprise Scale: Handles billions of predictions with real-time monitoring capabilities.

Root Cause Analysis: Automated tools to identify why model performance degraded.

Framework Integration: Works with major ML frameworks and LLM providers.

Best For

Large enterprises running multiple ML models alongside LLM applications. Ideal for organizations that need unified observability across traditional ML and generative AI with enterprise security and compliance requirements.

Pricing

Free tier available for evaluation. Enterprise pricing varies based on volume and features—contact sales for custom quotes.

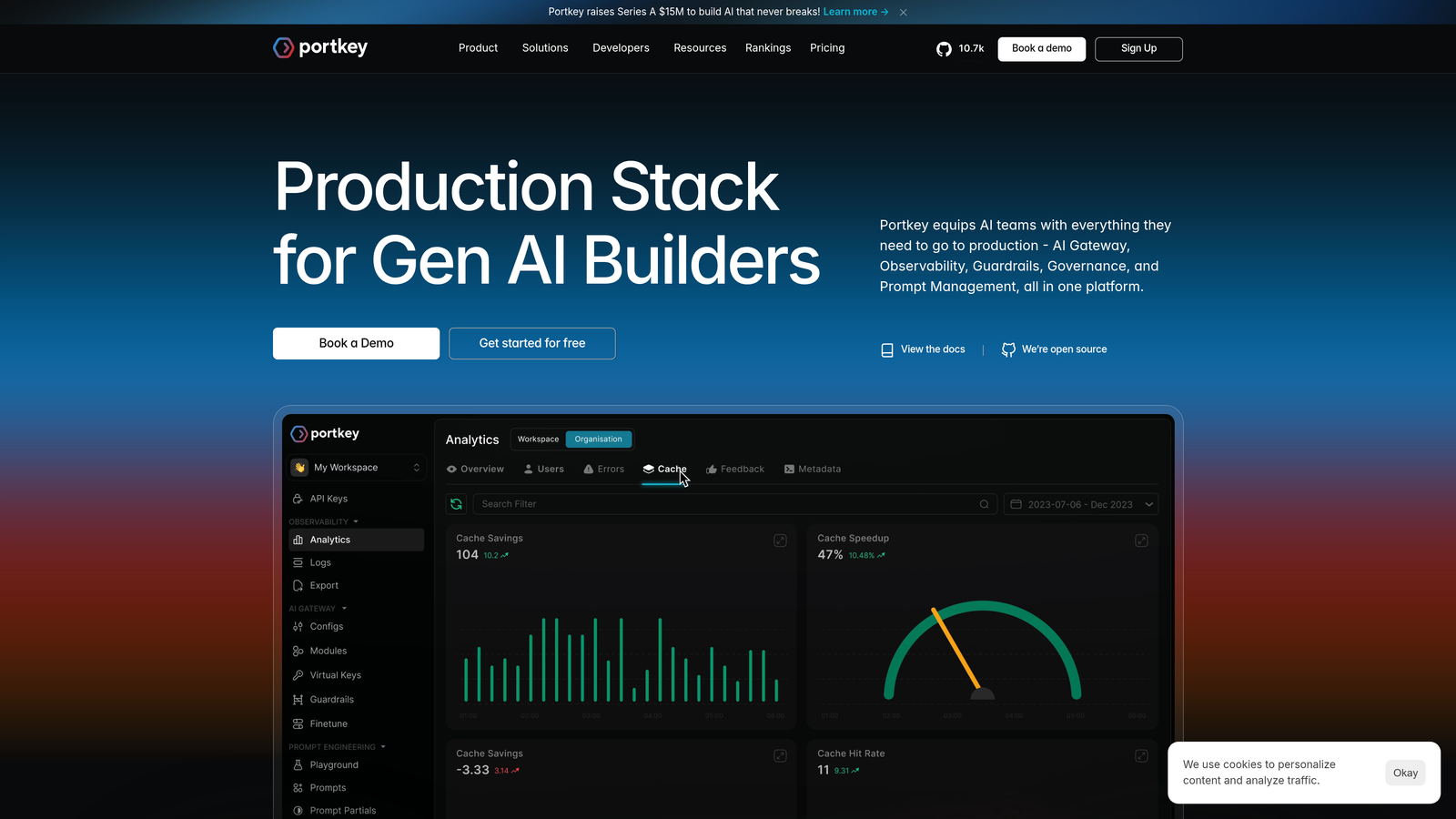

6. Portkey

Best for: Teams managing multiple LLM providers through unified infrastructure

Portkey is an AI gateway and observability platform for managing multiple LLM providers through a unified API with built-in analytics.

Where This Tool Shines

Portkey solves the multi-provider problem elegantly. Instead of writing separate integration code for OpenAI, Anthropic, Cohere, and others, you write against Portkey's unified API. The platform handles routing, fallback, and load balancing automatically.

The automatic fallback feature provides resilience that's difficult to build yourself. If your primary LLM provider experiences downtime, Portkey automatically routes requests to your backup provider without your application code needing to know anything changed.
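The fallback pattern itself is straightforward to sketch: try each provider in priority order and return the first successful response. The example below is a generic illustration, not Portkey's API; `complete_with_fallback`, `AllProvidersFailed`, and the two stand-in provider functions are hypothetical.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the list has failed."""


def complete_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try providers in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary(prompt: str) -> str:
    # Stand-in for a provider that is currently down.
    raise TimeoutError("primary provider timed out")


def backup(prompt: str) -> str:
    # Stand-in for a healthy backup provider.
    return f"backup answer to: {prompt}"


used, answer = complete_with_fallback(
    "Summarize this ticket",
    [("primary", primary), ("backup", backup)],
)
print(used, "->", answer)
```

The hard parts a gateway handles for you sit around this loop: normalizing request and response formats across providers, deciding which errors are retryable, and adding timeouts and rate-limit awareness.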

Key Features

Unified API: Single interface for 100+ LLM providers with consistent request/response formats.

Automatic Fallback: Seamless failover to backup providers during outages or rate limits.

Cost and Latency Tracking: Request-level analytics across all providers.

Prompt Caching: Reduce costs by caching responses for identical prompts.

Content Filtering: Built-in guardrails for safety and compliance.

Best For

Engineering teams building multi-provider LLM applications or those wanting provider flexibility without vendor lock-in. Particularly valuable for companies hedging against provider changes or optimizing costs across multiple APIs.

Pricing

Free tier available for testing and small projects. Pro plan starts at $49/month with higher request limits and advanced features.

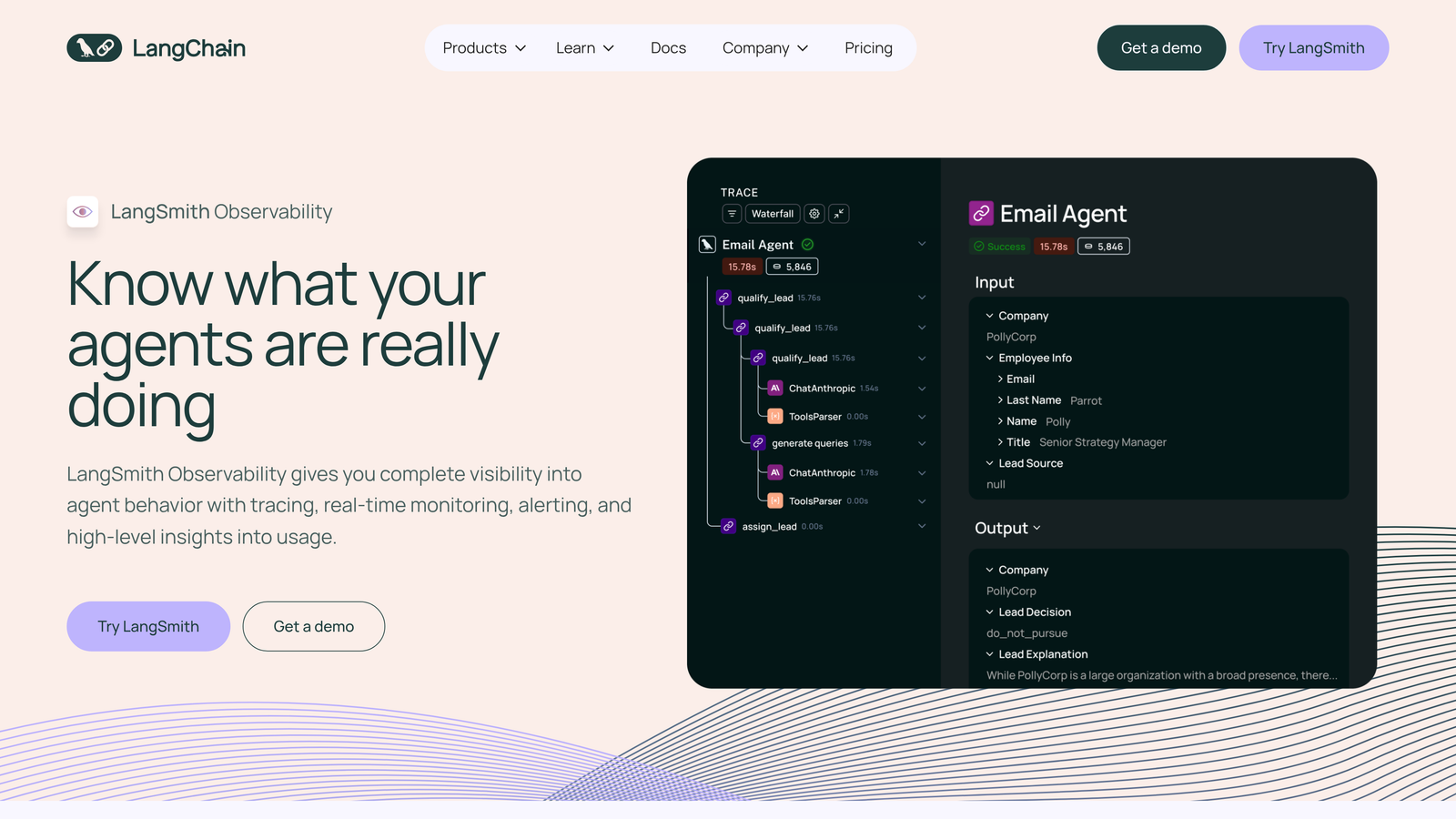

7. LangSmith

Best for: LangChain users needing native debugging and testing tools

LangSmith is a developer platform from LangChain for debugging, testing, and monitoring LLM applications, with its deepest integration reserved for applications built with the LangChain framework.

Where This Tool Shines

LangSmith is purpose-built for LangChain applications, which means it understands chains, agents, and tools at a fundamental level. When debugging a complex agent workflow, LangSmith shows you exactly which tool was called, what it returned, and how the agent decided to proceed.

The dataset management feature transforms evaluation from an afterthought into a systematic process. You can build test datasets of prompts and expected outputs, then run your entire application against them to catch regressions before deployment.
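Dataset-based regression testing can be sketched as a loop over prompt and expectation pairs. The example below is generic and does not use the LangSmith SDK; `Example`, `run_regression`, and `toy_app` are hypothetical, and the substring check is a deliberately simple stand-in for a real evaluator.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Example:
    """One test case: a prompt and a substring the output is expected to contain."""
    prompt: str
    expected_substring: str


def run_regression(app: Callable[[str], str], dataset: list[Example]) -> list[tuple[str, str]]:
    """Run the app on every example; return (prompt, output) for each failure."""
    failures = []
    for ex in dataset:
        output = app(ex.prompt)
        if ex.expected_substring.lower() not in output.lower():
            failures.append((ex.prompt, output))
    return failures


def toy_app(prompt: str) -> str:
    # Stand-in for your LLM application; one answer is deliberately wrong.
    answers = {
        "capital of france?": "The capital is Paris.",
        "2 + 2?": "The result is 5.",
    }
    return answers.get(prompt.lower(), "I don't know.")


dataset = [
    Example("Capital of France?", "Paris"),
    Example("2 + 2?", "4"),
]
failures = run_regression(toy_app, dataset)
for prompt, output in failures:
    print(f"REGRESSION: {prompt!r} -> {output!r}")
```

Running a check like this in CI before each deployment is what turns evaluation into a gate rather than an afterthought.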

Key Features

Native LangChain Integration: Automatic tracing for chains, agents, and tools without manual instrumentation.

Detailed Tracing: Complete visibility into every step of complex LangChain workflows.

Dataset Management: Build and maintain test datasets for systematic evaluation.

Prompt Playground: Test and iterate on prompts with immediate feedback.

Production Monitoring: Real-time dashboards for deployed LangChain applications.

Best For

Development teams building applications with LangChain or LangGraph. The tight integration makes it the obvious choice if you're already invested in the LangChain ecosystem.

Pricing

Free tier with usage limits for individual developers. Plus plan starts at $39 per seat per month with higher limits and team features.

8. Datadog LLM Observability

Best for: Enterprises with existing Datadog infrastructure

Datadog LLM Observability is an enterprise observability platform extending its APM capabilities to include LLM-specific monitoring and tracing.

Where This Tool Shines

Datadog's strength lies in unified observability. You can correlate LLM performance with infrastructure metrics, application traces, and user behavior—all in one platform. When your chatbot slows down, you can immediately see whether it's an LLM API issue, a database bottleneck, or a network problem.

For enterprises already running Datadog for infrastructure monitoring, adding LLM observability means one less vendor to manage and one less dashboard to check. The integration feels seamless because it uses the same agent and data pipeline you're already operating.

Key Features

Unified Monitoring: Combine LLM metrics with APM, infrastructure, and log data.

Provider Integration: Out-of-the-box support for major LLM providers with automatic instrumentation.

Infrastructure Correlation: Link LLM performance to underlying infrastructure metrics.

Enterprise Security: Compliance features and data governance controls for regulated industries.

Custom Dashboards: Build tailored views combining LLM and traditional metrics.

Best For

Large enterprises with existing Datadog deployments who want to extend their observability stack to include LLM applications. Best suited for organizations prioritizing vendor consolidation and unified monitoring.

Pricing

Included with Datadog APM subscriptions. Contact Datadog sales for specific LLM observability pricing and volume-based discounts.

9. Humanloop

Best for: Teams focused on prompt engineering and systematic evaluation

Humanloop is a prompt engineering and evaluation platform focused on helping teams iterate on prompts and deploy with confidence.

Where This Tool Shines

Humanloop treats prompt engineering as a first-class software engineering discipline. The platform provides versioning, testing, and review workflows that bring the same rigor to prompts that you apply to code.

The evaluation pipelines let you define custom metrics specific to your use case. Instead of generic quality scores, you can measure whether outputs match your brand voice, include required legal disclaimers, or follow specific formatting requirements. This specificity makes evaluation actionable rather than theoretical.
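Custom metrics of this kind are often just small functions that each check one requirement. The sketch below is a generic illustration and not Humanloop's API; the check names, the disclaimer text, and the 50-word limit are hypothetical examples of use-case-specific rules.

```python
import re


def has_disclaimer(output: str, disclaimer: str = "This is not legal advice.") -> bool:
    """Check that the required disclaimer text appears in the output."""
    return disclaimer.lower() in output.lower()


def within_length(output: str, max_words: int = 50) -> bool:
    """Check that the output stays under a word budget."""
    return len(output.split()) <= max_words


def uses_bullets(output: str) -> bool:
    """Check that at least one line is formatted as a '-' or '*' bullet."""
    return any(re.match(r"\s*[-*] ", line) for line in output.splitlines())


# Registry of checks; add or remove entries to match your own requirements.
CHECKS = {
    "has_disclaimer": has_disclaimer,
    "within_length": within_length,
    "uses_bullets": uses_bullets,
}


def evaluate(output: str) -> dict[str, bool]:
    """Run every registered check and return a pass/fail result per check."""
    return {name: check(output) for name, check in CHECKS.items()}


good = "- Keep receipts\n- File by April\nThis is not legal advice."
bad = "Just file whenever you like."
print(evaluate(good))
print(evaluate(bad))
```

Subjective criteria such as brand voice usually need a human reviewer or a model-based grader instead of a rule, but the results can be reported in the same per-check format.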

Key Features

Prompt Versioning: Track every change to prompts with full history and rollback capabilities.

A/B Testing: Run controlled experiments comparing different prompt versions in production.

Custom Evaluation Metrics: Define domain-specific metrics that matter for your application.

Team Workflows: Collaboration and review processes for prompt changes.

Production Deployment: Deploy prompts with confidence using gradual rollout and instant rollback.

Best For

Product and engineering teams treating prompts as critical application logic that requires systematic development and testing. Ideal for companies where prompt quality directly impacts user experience or business outcomes.

Pricing

Free tier available for small projects. Team plans start at $99/month with features for collaboration and production deployment.

Finding Your Perfect Analytics Platform

The LLM analytics landscape has matured significantly, with platforms now serving distinct needs—from brand visibility tracking to cost optimization to debugging complex agent workflows.

For marketing teams focused on how AI models represent their brand, Sight AI offers unique visibility scoring across major platforms. Development teams building LLM applications will find Langfuse or LangSmith ideal for debugging, while enterprises already invested in observability stacks should consider Datadog or Arize.

Start by identifying your primary pain point. Is it understanding costs? Debugging outputs? Tracking brand mentions? Your answer will point you toward the right platform.

If you're managing multiple LLM providers, Portkey's unified gateway approach simplifies integration and provides automatic fallback. Teams using LangChain should default to LangSmith for its native integration. For those prioritizing prompt engineering workflows, Humanloop's systematic approach to versioning and evaluation stands out.

The common thread across successful implementations is starting small. Pick one critical workflow—whether that's monitoring production costs, debugging a specific chain, or tracking brand visibility—and prove value there before expanding. Most platforms offer free tiers that let you validate fit before committing to paid plans.
