When someone opens ChatGPT and asks "What's the best project management tool for remote teams?" or turns to Claude for "Which CRM should a startup use?", does your brand show up in the answer? A growing share of purchase research now happens in AI chat interfaces instead of Google search results. These conversations are invisible to traditional analytics, untracked by conventional SEO tools, and largely outside the reach of standard brand monitoring.

This represents a fundamental shift in how people discover products and services. The old model was simple: rank higher on Google, get more clicks. The new reality is far more complex. AI models don't have rankings—they have contextual mentions. They don't show ten blue links—they synthesize recommendations into conversational responses. And most critically, they either mention your brand or they don't.

AI model response analysis is the systematic practice of tracking, measuring, and optimizing how AI systems talk about your brand. It's the discipline of understanding which prompts trigger mentions of your product, how AI platforms describe your offering compared to competitors, and what content strategies actually improve your visibility in these AI-generated responses. For marketers who've spent years mastering SEO, this represents an entirely new frontier—one that most are completely missing while early adopters capture market share in AI-assisted search.

How AI Models Actually Decide What to Recommend

Understanding AI model response analysis starts with grasping the mechanics of how these systems generate their answers. When someone asks ChatGPT or Claude for a recommendation, the model isn't searching a database of ranked results. Instead, it's synthesizing information through a complex process that combines learned knowledge with real-time information retrieval.

Large language models develop their understanding through training on massive datasets that include web content, documentation, reviews, and countless other text sources. During this training phase, they build semantic associations—connections between concepts, brands, and use cases. When your brand appears frequently in high-quality content associated with specific problems or categories, the model learns those associations. Think of it like building a web of connections: "project management" connects to "remote teams" connects to "collaboration tools" connects to specific brand names that appear in those contexts.

But training data is only part of the story. Modern AI platforms increasingly use retrieval-augmented generation, where the model pulls current information from the web in real time to supplement its learned knowledge. Perplexity does this extensively, citing sources for its responses. ChatGPT's web browsing capability works similarly. This means your current web presence, not just historical training data, actively influences AI responses. Understanding how AI models cite sources is therefore essential for optimizing your visibility.

Here's where it gets interesting for marketers: AI models don't have a "ranking algorithm" in the traditional sense. They use entity recognition to identify brands and semantic understanding to determine relevance. When the model encounters a prompt about CRM software, it recognizes entities like "Salesforce," "HubSpot," or "Pipedrive" and evaluates their semantic relevance to the specific context of the question. A prompt about "enterprise CRM" might trigger different brand mentions than "CRM for solopreneurs" because the model has learned different associations for these contexts.

The practical implication is profound: traditional SEO focuses on ranking for specific keywords, but AI visibility depends on building strong semantic associations across contexts. Your brand needs to appear consistently in content that connects your product category to specific use cases, problems, and user needs. Once you understand how AI models choose brands to recommend, it becomes clear why a domain authority score matters less here: what counts is the strength and consistency of the semantic connections the model has learned between your brand and relevant concepts.

The Metrics That Actually Matter for AI Visibility

Tracking AI visibility requires a completely different metrics framework than traditional SEO. You can't measure rankings because there are no rankings. You can't track click-through rates because there are no search results pages. Instead, AI model response analysis centers on three core metrics that define your actual visibility in AI-generated recommendations.

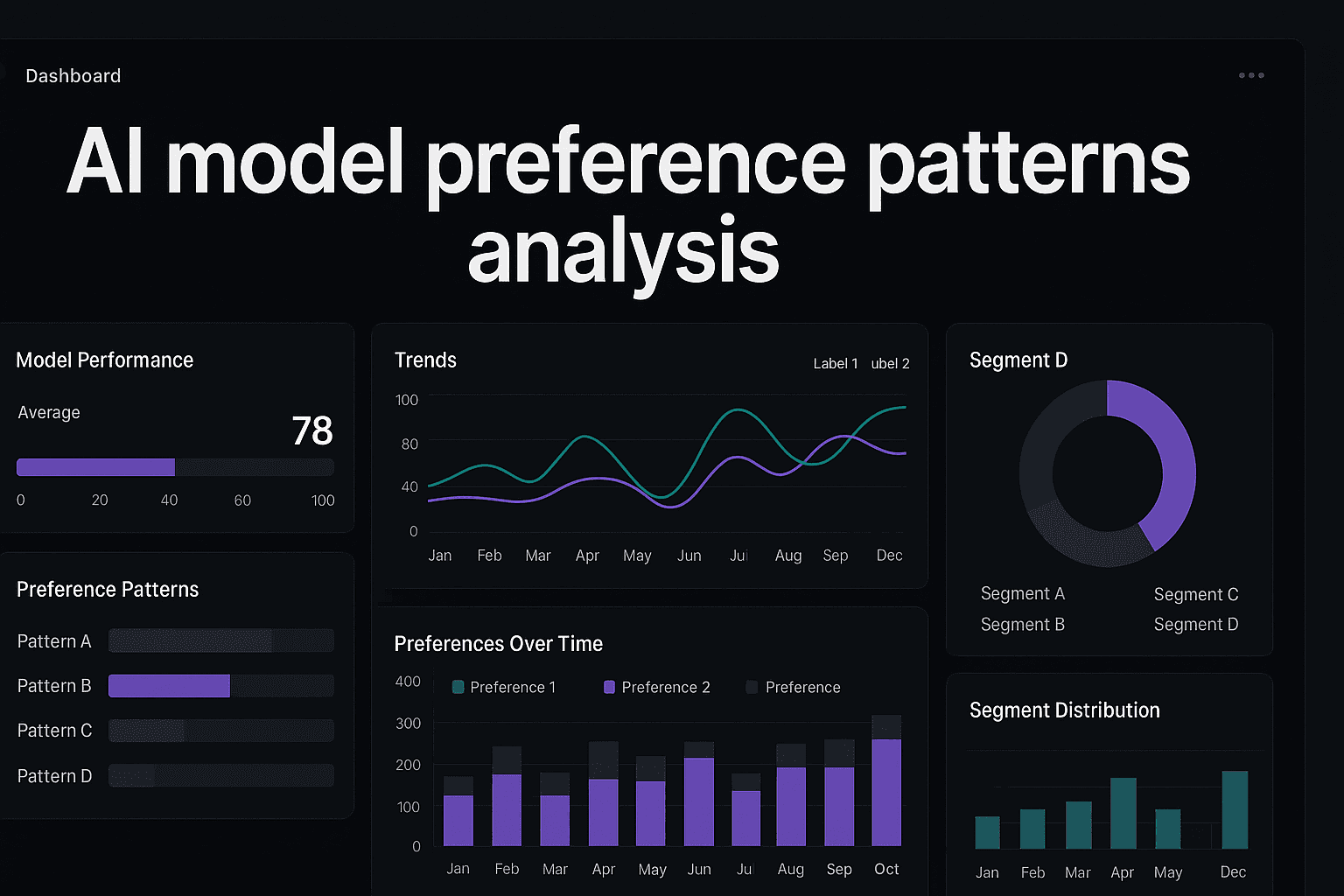

Mention Frequency Across Prompt Categories: This measures how often your brand appears when AI models respond to relevant queries. But raw frequency means nothing without context. The critical insight is tracking mentions across different prompt categories—buying intent queries ("best tool for X"), comparison queries ("X vs Y"), problem-solving queries ("how to solve Z"), and educational queries ("what is X"). A brand might appear frequently in educational contexts but never in buying-intent responses, which reveals a significant visibility gap where it matters most for conversions. Implementing AI model brand mention tracking helps you capture these nuances systematically.
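As a rough illustration of this metric, the sketch below computes a mention rate per prompt category from already-collected responses. The brand "Acme Tasks", the competitor names, and the sample responses are all made up, and the substring match is a deliberate simplification.

```python
from collections import defaultdict

def mention_rate_by_category(samples, brand):
    """Return {category: share of responses that mention `brand`}.

    `samples` is an iterable of (category, response_text) pairs.
    Matching is a case-insensitive substring check, which is crude:
    it will miss misspellings and can match inside longer words.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    needle = brand.lower()
    for category, text in samples:
        totals[category] += 1
        if needle in text.lower():
            hits[category] += 1
    return {category: hits[category] / totals[category] for category in totals}

# Made-up responses for a hypothetical brand, "Acme Tasks".
samples = [
    ("buying", "For remote teams, consider BrandB or BrandC."),
    ("buying", "Popular picks include BrandC and BrandD."),
    ("educational", "Tools such as Acme Tasks help teams plan work."),
    ("educational", "Acme Tasks is one example of a kanban tool."),
]
rates = mention_rate_by_category(samples, "Acme Tasks")
print(rates)
```

In this toy data the brand shows up in every educational response and in no buying-intent response, which is exactly the kind of gap the per-category breakdown is meant to expose.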

Sentiment and Positioning Quality: Getting mentioned isn't enough—how the AI describes your brand determines whether the mention drives consideration or creates doubt. Sentiment analysis for AI responses goes beyond simple positive/negative classification. Does the AI mention your brand with enthusiasm or with caveats? Does it say "X is an excellent choice for teams that need Y" or "X is an option, though some users report Z issues"? These nuances dramatically affect how potential customers perceive your brand. Track whether mentions include qualifiers, whether they position you as a leading solution or an alternative, and whether the AI associates your brand with strengths or limitations.
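One simple way to start tracking qualifiers is a keyword pass over the sentences that name your brand. The sketch below is only a first approximation, not a real sentiment model: the hedge patterns are an assumed starter list, "Acme" is a hypothetical brand, and the sentence splitter is naive.

```python
import re

# Hypothetical hedge markers; extend the list from your own response logs.
HEDGE_PATTERNS = [
    r"\bthough\b",
    r"\bhowever\b",
    r"\bbut\b",
    r"\bsome users report\b",
]

def classify_mention(response, brand):
    """Label a response as 'absent', 'qualified', or 'unqualified' for `brand`."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", response)
    brand_sentences = [s for s in sentences if brand.lower() in s.lower()]
    if not brand_sentences:
        return "absent"
    for sentence in brand_sentences:
        if any(re.search(p, sentence, re.IGNORECASE) for p in HEDGE_PATTERNS):
            return "qualified"
    return "unqualified"

endorsed = classify_mention(
    "Acme is an excellent choice for teams that need reporting.", "Acme")
hedged = classify_mention(
    "Acme is an option, though some users report sync issues.", "Acme")
missing = classify_mention("BrandB is widely used.", "Acme")
print(endorsed, hedged, missing)
```

A keyword list like this will misfire on phrasing it has not seen, so it is best treated as a triage step before a human reads the flagged responses.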

Competitive Context and Share of Voice: Perhaps the most revealing metric is where you appear relative to competitors in recommendation contexts. When AI models list multiple options, position matters—even without formal rankings. Does your brand appear first in the response or buried at the end? How many competitors get mentioned alongside you? This competitive positioning reveals your actual share of voice in AI-generated recommendations. Many companies discover they're mentioned far less frequently than competitors they outrank in traditional search, exposing a critical gap in AI visibility.
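Position and share of voice can both be approximated from raw response text. The following sketch assumes a fixed list of brand names (all hypothetical here) and uses the character offset of the first occurrence as a stand-in for "position in the response".

```python
def mention_order(text, brands):
    """Return the brands found in `text`, ordered by first occurrence."""
    lowered = text.lower()
    found = [(lowered.find(b.lower()), b) for b in brands]
    return [b for pos, b in sorted(found) if pos >= 0]

def share_of_voice(responses, brands):
    """Return each brand's share of all brand mentions across `responses`.

    A brand counts at most once per response.
    """
    counts = {b: 0 for b in brands}
    for text in responses:
        for b in mention_order(text, brands):
            counts[b] += 1
    total = sum(counts.values())
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Made-up responses and brand names for illustration only.
brands = ["Acme", "BrandB", "BrandC"]
responses = [
    "Top options are BrandB, Acme, and BrandC.",
    "BrandB is a common pick.",
    "Consider BrandC or BrandB.",
]
order_first = mention_order(responses[0], brands)
sov = share_of_voice(responses, brands)
print(order_first)
print(sov)
```

Here "Acme" is named second in one response and absent from the other two, so it holds one of six total mentions while "BrandB" holds half.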

The measurement challenge is that AI responses are non-deterministic—the same prompt can yield different responses across sessions, times, and even slight variations in phrasing. This means single-point measurements are worthless. Effective AI visibility tracking requires statistical sampling: running the same prompts multiple times, testing variations, and tracking trends over weeks and months rather than relying on individual responses.
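To see why a handful of runs says so little, it helps to put an interval around an observed mention rate. The sketch below uses the Wilson score interval, a standard choice for proportions from small samples; choosing it here is an assumption on our part, and the run counts are illustrative.

```python
import math

def wilson_interval(mentions, runs, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate.

    `mentions` is how many of `runs` responses named the brand.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z ** 2 / runs
    center = (p + z ** 2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z ** 2 / (4 * runs ** 2)) / denom
    # Clamp to [0, 1] to absorb floating-point drift at the edges.
    return max(0.0, center - half), min(1.0, center + half)

small_low, small_high = wilson_interval(3, 5)     # 3 mentions in 5 runs
large_low, large_high = wilson_interval(30, 50)   # same rate, 50 runs
print(round(small_low, 2), round(small_high, 2))
print(round(large_low, 2), round(large_high, 2))
```

With 3 mentions in 5 runs the plausible range spans roughly 0.23 to 0.88, which is too wide to act on. The same 60% rate over 50 runs gives a much tighter range, which is the practical argument for repeated sampling.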

Designing a Systematic Response Analysis Framework

Moving from ad-hoc checking to systematic AI visibility tracking requires building a structured framework that captures meaningful data over time. This isn't about occasionally asking ChatGPT if it knows your brand—it's about creating repeatable measurement processes that reveal trends, opportunities, and the impact of your optimization efforts.

Start by identifying the prompt categories that actually matter for your business. Generic brand awareness queries ("what is [your company]?") tell you little about competitive positioning. Instead, focus on the prompts your potential customers actually use. For a project management tool, that might include "best project management software for agencies," "how to manage remote team workflows," or "Asana alternatives for small teams." Map out 15-25 core prompts across different intent categories: informational queries, comparison queries, buying-intent queries, and problem-solving queries. These become your baseline measurement set.
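A baseline prompt set is easier to maintain when it lives in a small data structure rather than a document. The sketch below is one possible layout: the class name, the intent labels, and the intent assigned to each example prompt are our own assumptions, and a real set would hold 15 to 25 entries.

```python
from collections import Counter
from dataclasses import dataclass

# Intent labels mirroring the four categories described above.
INTENTS = ("informational", "comparison", "buying", "problem_solving")

@dataclass(frozen=True)
class TrackedPrompt:
    text: str
    intent: str  # expected to be one of INTENTS

PROMPTS = [
    TrackedPrompt("best project management software for agencies", "buying"),
    TrackedPrompt("how to manage remote team workflows", "problem_solving"),
    TrackedPrompt("Asana alternatives for small teams", "comparison"),
]

def coverage_gaps(prompts):
    """Return the intent categories that have no prompt yet."""
    counts = Counter(p.intent for p in prompts)
    return [intent for intent in INTENTS if counts[intent] == 0]

gaps = coverage_gaps(PROMPTS)
print(gaps)
```

Running the gap check on this three-prompt starter set flags that no informational prompt has been added yet.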

Next, establish cross-platform baselines because different AI models have different training data, retrieval mechanisms, and response patterns. ChatGPT might mention your brand frequently while Claude rarely does, or Perplexity might cite your website while Gemini doesn't. Test your core prompt set across at least four major platforms: ChatGPT, Claude, Perplexity, and Gemini. Using multi-model AI tracking software reveals platform-specific gaps and opportunities. Many companies discover they have strong visibility in one AI ecosystem but are virtually invisible in others—a critical insight you can't get from testing a single platform.

Create a systematic tracking schedule rather than sporadic checks. AI models update regularly, web content changes, and your own optimization efforts need time to show impact. Monthly tracking works well for most businesses: frequent enough to catch trends but not so often that you're measuring noise. For each tracking session, run your core prompt set several times per platform (three to five iterations at minimum) to account for response variability. Document not just whether you were mentioned, but how you were described, which competitors appeared, and the overall context of the mention.
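A tracking session like the one described can be sketched as a loop over platforms, prompts, and iterations that stores one record per response. Everything named here is hypothetical: `ask` stands in for whatever client code you write for each platform, and `fake_ask` is a stub so the example runs without network access.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, List, Optional

@dataclass
class Observation:
    run_date: date
    platform: str
    prompt: str
    iteration: int
    response: str
    brand_mentioned: bool
    competitors_mentioned: List[str]

def run_session(ask: Callable[[str, str], str], platforms, prompts, brand,
                competitors, iterations: int = 5,
                run_date: Optional[date] = None) -> List[Observation]:
    """Query every platform/prompt pair `iterations` times and record results."""
    run_date = run_date or date.today()
    records = []
    for platform in platforms:
        for prompt in prompts:
            for i in range(iterations):
                response = ask(platform, prompt)
                lowered = response.lower()
                records.append(Observation(
                    run_date=run_date,
                    platform=platform,
                    prompt=prompt,
                    iteration=i,
                    response=response,  # keep full text for later review
                    brand_mentioned=brand.lower() in lowered,
                    competitors_mentioned=[c for c in competitors
                                           if c.lower() in lowered],
                ))
    return records

def fake_ask(platform, prompt):
    # Stub standing in for a real API call.
    return "Acme and BrandB are both options."

records = run_session(fake_ask, ["platform_a", "platform_b"],
                      ["best CRM for startups"], "Acme",
                      ["BrandB", "BrandC"], iterations=3)
print(len(records), records[0].competitors_mentioned)
```

Storing the full response text alongside the flags matters, because the description and context of a mention can only be reviewed later if the original wording was kept.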

The framework should also include prompt variation testing. AI responses are highly sensitive to phrasing. "Best CRM for startups" might yield different brand mentions than "top CRM tools for new businesses" even though they represent the same intent. Implementing AI model prompt tracking helps you test semantic variations of your core prompts to understand how robust your visibility is across different ways users might ask the same question. Brands with strong AI visibility appear consistently across prompt variations, while those with weak visibility show up for some phrasings but disappear for others.
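One way to quantify robustness is to compare mention rates across phrasings of the same intent and look at the spread. The sketch below assumes you already have per-run mention flags for each phrasing; the flags shown are invented.

```python
def phrasing_rates(results):
    """Map each phrasing to its mention rate.

    `results` is {phrasing: [True/False per run]}; empty run lists are skipped.
    """
    return {phrasing: sum(runs) / len(runs)
            for phrasing, runs in results.items() if runs}

def robustness_gap(results):
    """Return the difference between the best and worst phrasing rates."""
    rates = phrasing_rates(results)
    if not rates:
        raise ValueError("no runs recorded")
    return max(rates.values()) - min(rates.values())

# Invented run outcomes for two phrasings of the same intent.
results = {
    "Best CRM for startups": [True, True, True, False],
    "top CRM tools for new businesses": [False, False, True, False],
}
rates = phrasing_rates(results)
gap = robustness_gap(results)
print(rates, gap)
```

A gap near zero suggests visibility that holds up regardless of wording, while a large gap points to phrasings worth targeting with new content.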

Optimization Strategies That Improve AI Mentions

Once you've established baseline measurements, the next challenge is actually improving your AI visibility. This requires a different approach than traditional SEO because you're optimizing for how AI models learn and retrieve information, not how search algorithms rank pages.

Strengthen Your Semantic Footprint Through Content: AI models learn brand associations from the content ecosystem surrounding your product. The optimization strategy is creating and encouraging content that consistently connects your brand to relevant use cases, problems, and categories. This means publishing comprehensive guides that naturally mention your product in context, creating comparison content that positions you alongside competitors, and developing use-case documentation that helps AI models understand what problems you solve. The goal isn't keyword density—it's building strong, repeated semantic associations between your brand and the concepts that matter to your business.

Optimize for Entity Recognition and Consistency: AI models rely on entity recognition to identify brands, and inconsistent entity information creates confusion. Ensure your brand name, product names, and key offerings appear consistently across your website, documentation, and third-party content. Use structured data markup to explicitly define your organization, products, and relationships. Maintain consistent NAP (Name, Address, Phone) information across platforms. When AI models encounter clear, consistent entity signals, they're more likely to recognize and mention your brand accurately in responses.
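For the structured data step, schema.org's Organization type expressed as JSON-LD is a common choice. The sketch below generates such a snippet from a single source of truth so the same name and profile links are reused everywhere; the company details are placeholders, and whether any given AI platform reads this markup is not something the snippet can guarantee.

```python
import json

def organization_jsonld(name, url, same_as, telephone=None):
    """Build a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # official profiles that identify the same entity
    }
    if telephone:
        data["telephone"] = telephone
    return json.dumps(data, indent=2)

# Placeholder values for a hypothetical company.
markup = organization_jsonld(
    name="Acme Tasks",
    url="https://www.example.com",
    same_as=["https://www.linkedin.com/company/example"],
    telephone="+1-555-0100",
)
print(markup)
```

The resulting string would typically be embedded in a page inside a `<script type="application/ld+json">` element.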

Build Authoritative Backlinks and Citations: For AI platforms that use retrieval-augmented generation, authoritative backlinks and citations significantly impact visibility. When high-quality publications, review sites, and industry resources link to and cite your content, AI models treat those signals as indicators of relevance and authority. Understanding how AI models select content sources helps you focus on earning mentions in the types of sources AI models are likely to retrieve: industry publications, comparison sites, expert roundups, and authoritative blogs. The feedback loop works like this: better web presence leads to stronger retrieval signals, which leads to more frequent AI mentions, which drives more traffic and backlinks, which further strengthens AI visibility.

Monitor and Iterate Based on Response Patterns: AI visibility optimization isn't a one-time project—it's an ongoing process of testing, measuring, and refining. Track which content initiatives correlate with improved mention frequency. Notice which semantic associations strengthen over time. Pay attention to how competitors' mentions change and what might be driving those shifts. The brands that win at AI visibility treat it like a continuous optimization discipline, not a checklist to complete. Learning how to improve AI model visibility provides a roadmap for this iterative process.

Avoiding the Traps That Undermine AI Visibility Analysis

As AI model response analysis becomes more critical, several common pitfalls can waste resources and lead to misguided strategies. Understanding these traps helps you build more effective measurement and optimization processes.

The Snapshot Fallacy: Perhaps the most common mistake is drawing conclusions from single-point measurements. Someone asks ChatGPT about their product category once, sees their brand mentioned, and assumes they have strong AI visibility. Or worse, they don't see a mention and panic without understanding response variability. AI responses change based on session context, the randomness built into text generation, slight prompt variations, and model updates. A single query tells you almost nothing. Effective analysis requires multiple samples over time, so think in terms of statistical significance rather than anecdotal evidence. Track trends across dozens of queries rather than reacting to individual responses.

Platform Blindspots: Many companies focus exclusively on ChatGPT because it's the most popular AI platform, completely ignoring Claude, Perplexity, Gemini, and emerging AI search tools. This creates dangerous blindspots because different platforms have different user bases, training data, and retrieval mechanisms. Your ideal customers might prefer Claude for research or use Perplexity for product discovery. Missing visibility on these platforms means missing potential customers. Comprehensive AI visibility tracking requires cross-platform measurement, even if you prioritize optimization efforts based on where your audience concentrates.

Vanity Metrics Versus Business Impact: Getting mentioned by AI models feels good, but mentions don't pay the bills. The trap is optimizing for mention frequency without connecting AI visibility to actual business outcomes. Are the prompts you're tracking actually relevant to buying intent? Are the contexts where you're mentioned likely to drive consideration? Does improved AI visibility correlate with increased organic traffic or conversions? The most sophisticated AI visibility programs connect response analysis to traffic analytics, conversion tracking, and revenue attribution. They measure not just whether AI mentions the brand, but whether those mentions drive valuable actions. If you're struggling with visibility, understanding why AI models ignore your business can help identify root causes.
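A first check on whether visibility tracks business outcomes is a plain correlation between monthly mention rate and a downstream metric. The sketch below computes a Pearson coefficient by hand; the six months of figures are invented, and with so few points a high value is a prompt for further investigation, not proof of cause.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (spread_x * spread_y)

# Invented monthly figures: buying-intent mention rate and trial signups.
mention_rate = [0.10, 0.15, 0.20, 0.30, 0.35, 0.40]
signups = [40, 42, 50, 58, 61, 70]
r = pearson(mention_rate, signups)
print(round(r, 2))
```

Seasonality, campaigns, and pricing changes can all move both series at once, so it is worth pairing a check like this with the traffic and conversion analytics mentioned above.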

Your Roadmap to Systematic AI Visibility Tracking

Implementing AI model response analysis doesn't require massive resources or specialized tools—it requires systematic thinking and consistent execution. Start with the fundamentals: identify your core prompt categories, establish cross-platform baselines, and create a monthly tracking schedule. Document not just mentions but context, sentiment, and competitive positioning. Use this baseline data to identify your biggest visibility gaps.

Then move to optimization: strengthen semantic associations through targeted content, ensure entity consistency across platforms, and build authoritative citations in sources AI models are likely to retrieve. Track the impact of these efforts through your systematic measurement framework, refining your approach based on what actually improves visibility in the contexts that matter for your business.

The competitive advantage here is timing. Most brands aren't tracking AI visibility at all—they're guessing whether AI models mention them or relying on occasional manual checks. Companies that implement systematic AI model response tracking now are building visibility advantages while the discipline is still emerging. As AI-assisted search becomes increasingly dominant, the gap between brands with strong AI visibility and those without will widen dramatically.

Think of where SEO was in the early 2000s. Companies that invested in systematic search optimization when it was still new captured market share that compounded over years. AI visibility is at that same inflection point today. The measurement frameworks, optimization strategies, and competitive intelligence you build now will compound as AI search grows.

The New Reality of Digital Visibility

AI model response analysis isn't a nice-to-have addition to your marketing stack—it's rapidly becoming as essential as traditional SEO for brands that depend on organic discovery. The shift from search engines to AI assistants is accelerating, and with it comes a fundamental change in how customers discover and evaluate products. Brands that understand and optimize for this new reality will capture market share from competitors who remain focused exclusively on traditional search.

The key insight is that AI visibility operates on different principles than search rankings. It's not about gaming algorithms—it's about building genuine semantic associations between your brand and the problems you solve. It's not about one-time optimization—it's about systematic tracking and continuous refinement. And it's not about vanity metrics—it's about connecting AI mentions to actual business impact.

The brands winning at AI visibility share common traits: they track systematically across platforms, they optimize for semantic associations rather than just keywords, they measure trends rather than snapshots, and they connect AI visibility to business outcomes. They've moved beyond wondering whether AI models mention their brand to understanding exactly how, when, and in what context those mentions occur.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.