AI assistants like ChatGPT, Claude, and Perplexity are reshaping how customers discover brands. When someone asks an AI assistant for product recommendations, your brand either gets mentioned or it doesn't. Being left out of those answers means losing opportunities you may never know you missed.

AI model monitoring tools fall into two categories. Brand visibility tools track what AI assistants say about you: which prompts mention your brand, with what sentiment, and where you can improve your visibility across AI-powered search. ML operations tools track the performance and behavior of your own models in production systems. Many organizations need both.

This guide covers nine tools across both categories, from platforms that monitor how AI models talk about your brand to ML observability and experiment tracking solutions. Whether you're a marketer tracking brand perception, a founder making sure AI assistants recommend your product, or an ML engineer watching production models, these tools provide the insights you need.

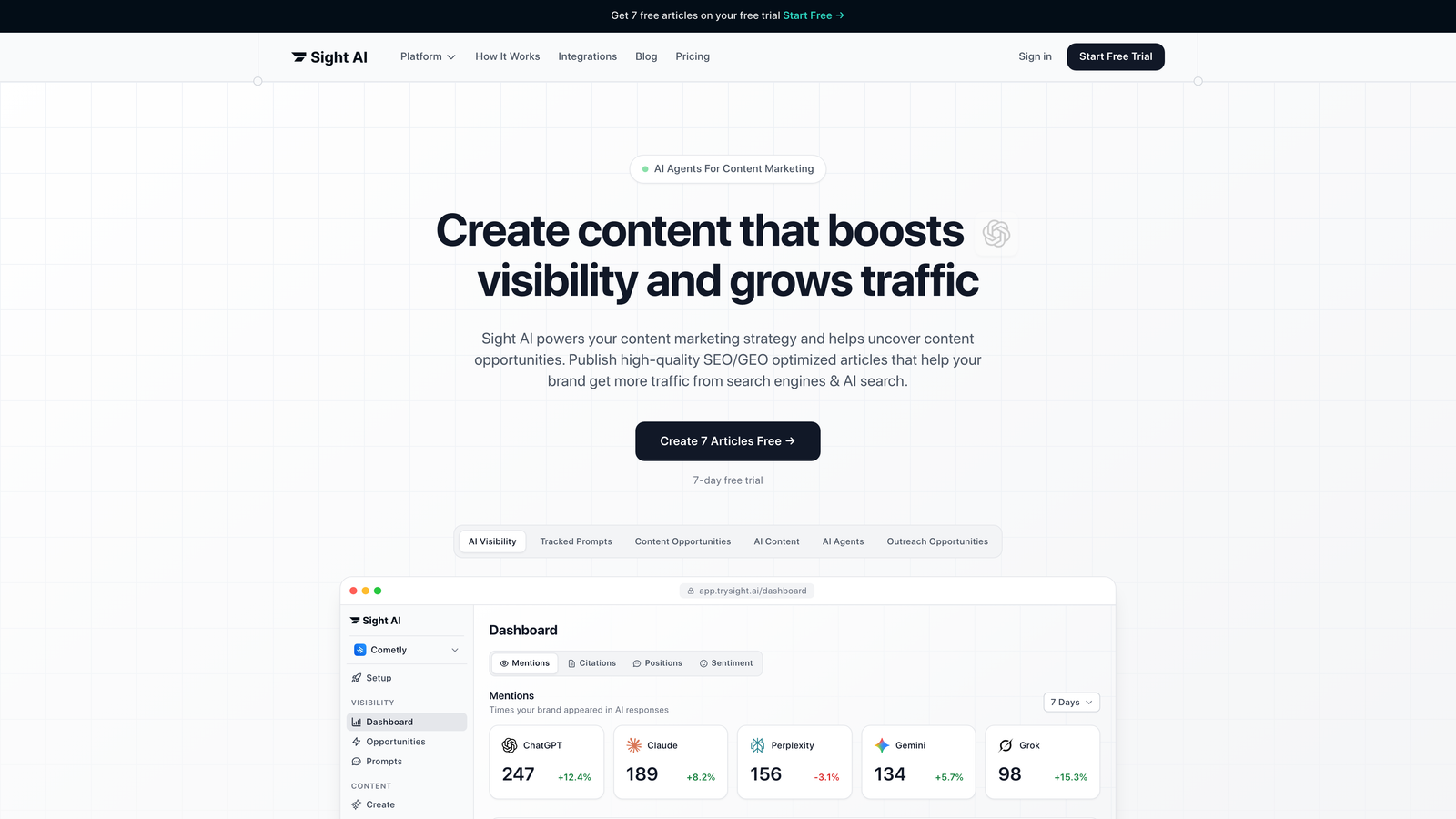

1. Sight AI

Best for: Tracking brand mentions and sentiment across ChatGPT, Claude, Perplexity, and other AI platforms

Sight AI is a specialized AI visibility platform that monitors how AI models talk about your brand across multiple platforms.

Where This Tool Shines

Sight AI addresses a critical gap that traditional monitoring tools miss: what happens when someone asks ChatGPT or Claude about your product category. The platform tracks brand mentions across 6+ AI platforms, giving you a comprehensive view of your AI visibility.

The AI Visibility Score provides a single metric to track your brand's presence over time, while sentiment analysis reveals whether AI models position you favorably or unfavorably. This matters because AI-powered search is fundamentally different from traditional SEO: there is no results page to check rankings on, and answers can vary from one prompt to the next.

Key Features

Multi-Platform Monitoring: Tracks mentions across ChatGPT, Claude, Perplexity, and other major AI models from a single dashboard.

AI Visibility Score: Quantifies your brand's presence with a trackable metric that shows improvement over time.

Prompt Tracking: Shows which queries trigger mentions of your brand, revealing content opportunities and competitive positioning.

Sentiment Analysis: Identifies whether AI models describe your brand positively, negatively, or neutrally in their responses.

Competitor Comparison: Benchmarks your AI visibility against competitors to identify gaps and opportunities.
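
To make the idea of a visibility metric concrete, here is a minimal sketch of how a mention rate could be computed from a set of saved AI responses and compared across competitors. It is not Sight AI's scoring method, which this guide does not describe. The brand names, the sample responses, and the `mention_rate` helper are all hypothetical.

```python
import re

def mention_rate(responses, brand):
    """Fraction of responses that mention `brand` as a whole word (case-insensitive)."""
    if not responses:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)

# Hypothetical responses collected for the prompt "best project management tools"
responses = [
    "Popular options include Acme Boards and TaskNest.",
    "Many teams like TaskNest for its simple interface.",
    "Acme Boards, TaskNest, and PlanPilot are common picks.",
    "It depends on your team size and budget.",
]

# Compare your brand against competitors on the same set of responses
brands = ["Acme Boards", "TaskNest", "PlanPilot"]
mention_rates = {brand: mention_rate(responses, brand) for brand in brands}

for brand, rate in sorted(mention_rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{brand}: mentioned in {rate:.0%} of responses")
```

Because AI answers vary between runs, a rate like this only becomes meaningful when it is computed over many prompts and repeated samples.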

Best For

Marketing teams and founders focused on organic traffic growth through AI-powered search. Particularly valuable for brands in competitive categories where AI recommendations directly influence purchasing decisions.

Pricing

Contact for pricing; free trial available to test the platform before committing.

2. Brandwatch AI Monitor

Best for: Enterprise teams needing AI monitoring alongside comprehensive social listening and brand intelligence

Brandwatch AI Monitor is an enterprise brand intelligence platform expanding its capabilities into AI mention tracking.

Where This Tool Shines

Brandwatch built its reputation on social listening and is now extending that expertise to AI-generated content. For enterprises already using Brandwatch for traditional brand monitoring, adding AI tracking creates a unified view of brand perception across all channels.

The platform's strength lies in its advanced analytics capabilities and customizable reporting. Teams can create dashboards that combine social mentions, news coverage, and AI model references into comprehensive brand health reports.

Key Features

Cross-Platform Brand Monitoring: Combines social, news, review sites, and AI model tracking in one platform.

Advanced Sentiment Analysis: Uses sophisticated NLP to understand context and nuance in brand mentions.

Customizable Dashboards: Build reports tailored to specific stakeholders and business objectives.

API Access: Integrate brand intelligence data into internal systems and workflows.

Historical Data Analysis: Track brand perception trends over extended periods to identify patterns.

Best For

Large enterprises with established brand monitoring programs looking to add AI visibility tracking. Best suited for organizations that need comprehensive brand intelligence across multiple channels.

Pricing

Enterprise pricing model; contact sales for a custom quote based on monitoring volume and features needed.

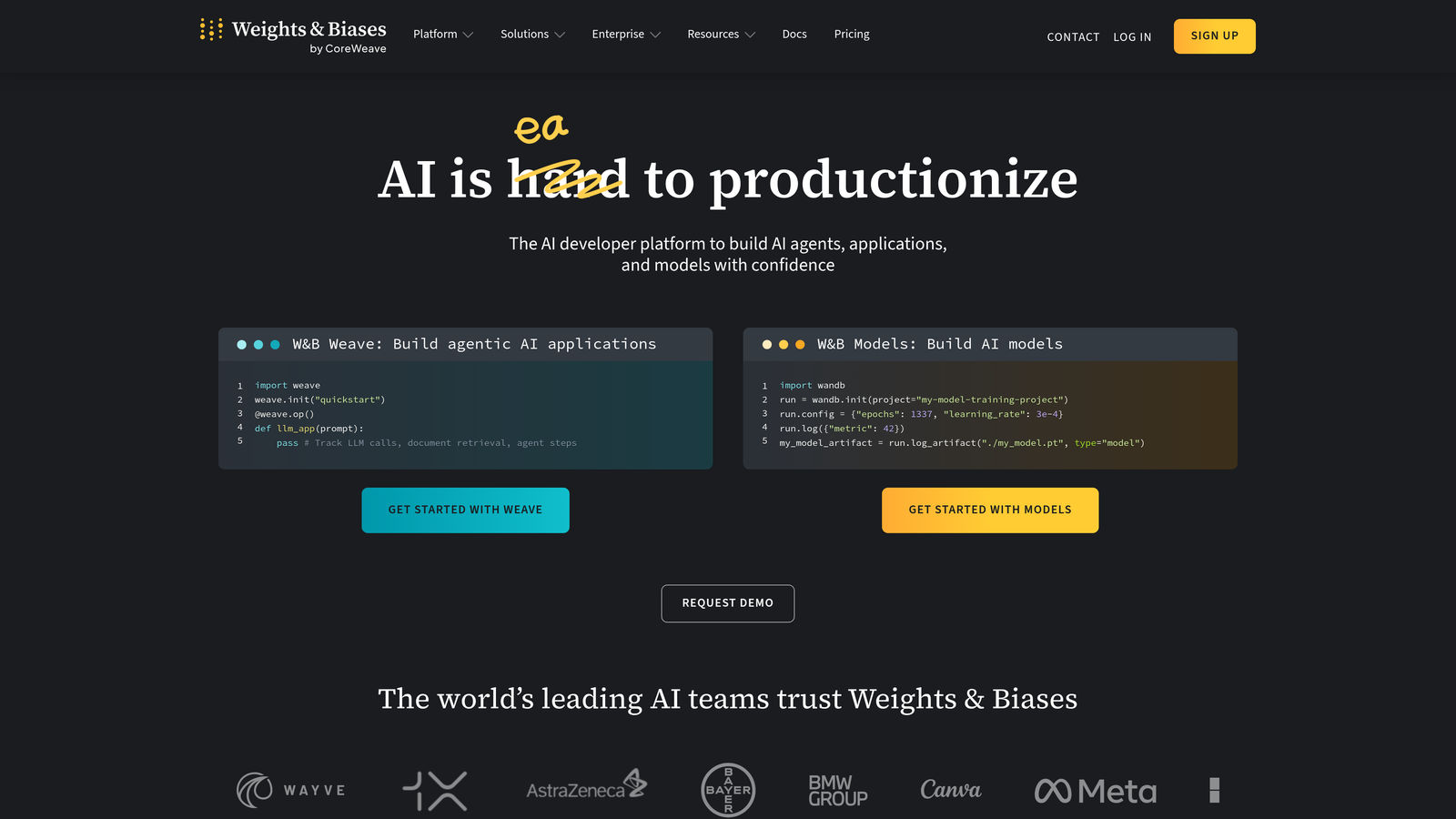

3. Weights & Biases

Best for: ML teams tracking experiment performance and model behavior during development and production

Weights & Biases is an ML experiment tracking platform popular with machine learning teams building and deploying models.

Where This Tool Shines

Weights & Biases excels at helping ML teams understand what's happening inside their models. The platform makes it easy to track hundreds of experiments, compare results, and identify which approaches work best.

For teams building custom AI models, the collaborative features enable data scientists to share findings, reproduce experiments, and maintain a clear record of model development. This becomes critical when debugging production issues or explaining model decisions to stakeholders.

Key Features

Experiment Tracking: Log metrics, hyperparameters, and outputs from every training run automatically.

Model Performance Dashboards: Visualize training progress, compare experiments, and identify optimal configurations.

Collaborative Workflows: Share experiments with team members and maintain institutional knowledge.

Artifact Management: Version and track datasets, models, and other artifacts throughout the ML lifecycle.

Framework Integration: Works seamlessly with PyTorch, TensorFlow, scikit-learn, and other popular ML libraries.
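
The core idea behind experiment tracking (record each run's hyperparameters and metrics, then compare runs) can be sketched in plain Python. This is an illustration only and does not use the Weights & Biases client; the `Run` class, the run names, and the loss values are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Run:
    """One training run: its hyperparameters and the metrics logged per step."""
    name: str
    config: dict
    history: list = field(default_factory=list)

    def log(self, **metrics):
        """Append one step's metrics to the run history."""
        self.history.append(metrics)

    def best(self, key):
        """Lowest logged value of `key` across all steps."""
        return min(step[key] for step in self.history if key in step)

# Hypothetical runs with made-up validation losses per epoch
runs = []
for lr, losses in [(0.1, [0.9, 0.7, 0.8]), (0.01, [0.8, 0.5, 0.4]), (0.001, [0.9, 0.8, 0.7])]:
    run = Run(name=f"lr-{lr}", config={"learning_rate": lr})
    for epoch, loss in enumerate(losses):
        run.log(epoch=epoch, val_loss=loss)
    runs.append(run)

# Pick the run whose best validation loss is lowest
best_run = min(runs, key=lambda r: r.best("val_loss"))
print(best_run.name, best_run.config, best_run.best("val_loss"))
```

A hosted tracker adds what this sketch lacks: persistent storage, dashboards, and shared access for the whole team.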

Best For

Data science teams and ML engineers building custom models who need to track experiments and collaborate effectively. Particularly valuable for organizations running many parallel experiments.

Pricing

Free tier for individuals and small teams; Teams plan starts at $50 per user per month with additional features.

4. Arize AI

Best for: Production ML monitoring with focus on drift detection and performance troubleshooting

Arize AI is an ML observability platform focused on monitoring models in production and identifying issues before they impact business outcomes.

Where This Tool Shines

Arize AI specializes in catching problems that emerge after deployment. Models that performed well in development can degrade over time as real-world data shifts. Arize detects these changes early, allowing teams to retrain or adjust models before accuracy drops significantly.

The platform's root cause analysis tools help teams quickly diagnose why a model's performance changed. Instead of manually investigating data quality issues or feature drift, Arize surfaces the specific factors causing degradation.
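
One common way to quantify the input drift described above is the Population Stability Index (PSI), which compares how a feature is distributed in training data versus production data. The sketch below is a generic illustration, not Arize's implementation; the bin count, the floor for empty bins, and the sample data are assumptions.

```python
import math

def psi(expected, actual, bins=5):
    """Population Stability Index between two numeric samples.

    Bin edges are evenly spaced over the range of `expected`; values of
    `actual` outside that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor keeps log() defined for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

# Hypothetical feature values: training data vs. shifted production data
training = list(range(100))
production = [v + 30 for v in training]

print(f"PSI, same data:    {psi(training, training):.3f}")
print(f"PSI, shifted data: {psi(training, production):.3f}")
```

A common rule of thumb treats PSI values above roughly 0.25 as a significant shift, though the right threshold depends on the feature and the model.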

Key Features

Real-Time Monitoring: Track model predictions, inputs, and performance metrics as they happen in production.

Automated Drift Detection: Identify when input data or model behavior shifts from expected patterns.

Root Cause Analysis: Drill down into performance issues to understand exactly what's causing problems.

Performance Benchmarking: Compare current model performance against historical baselines and business targets.

LLM Application Support: Monitor large language model applications with specialized metrics and tracking.

Best For

ML operations teams managing production models where performance degradation has direct business impact. Especially useful for models making high-stakes predictions in finance, healthcare, or e-commerce.

Pricing

Free tier for small teams; paid plans start at $500 per month with volume-based scaling.

5. Fiddler AI

Best for: Enterprise ML governance with explainability, bias detection, and compliance features

Fiddler AI is an explainable AI platform with comprehensive model monitoring and governance capabilities for regulated industries.

Where This Tool Shines

Fiddler AI addresses a critical challenge for enterprises: understanding and documenting how AI models make decisions. For organizations in regulated industries, being able to explain model outputs isn't optional—it's required for compliance.

The platform's bias and fairness monitoring helps teams identify when models produce discriminatory outcomes across demographic groups. Combined with audit trails and compliance reporting, Fiddler enables organizations to deploy AI responsibly while meeting regulatory requirements.
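
As a rough illustration of what fairness monitoring measures, the sketch below compares positive-outcome rates across groups and computes a disparate impact ratio. It is a generic example rather than Fiddler's method; the group labels, the decision data, and both helper functions are hypothetical.

```python
from collections import defaultdict

def approval_rates(records):
    """Positive-outcome rate per group from (group, approved) pairs."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {g: positives[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest group rate (1.0 means parity).

    Assumes at least one group has a non-zero rate.
    """
    return min(rates.values()) / max(rates.values())

# Hypothetical model decisions: (group label, approved?)
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

group_rates = approval_rates(decisions)
ratio = disparate_impact_ratio(group_rates)
print(group_rates, round(ratio, 3))
```

A ratio below 0.8 is often used as a screening threshold (the "four-fifths rule"), but it is a prompt for investigation rather than proof of discrimination.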

Key Features

Model Explainability: Generate human-readable explanations for individual predictions and overall model behavior.

Bias Detection: Monitor for unfair outcomes across protected classes and demographic segments.

Compliance Trails: Maintain detailed audit logs for regulatory reporting and internal governance.

Custom Alerting: Set up notifications based on model performance, fairness metrics, or other business rules.

Enterprise Security: Deploy on-premises or in private cloud with SOC 2 compliance and role-based access control.

Best For

Large enterprises in regulated industries like finance, healthcare, and insurance that need explainability and governance alongside monitoring. Essential for organizations where model decisions require documentation and audit trails.

Pricing

Enterprise pricing model; contact sales for a custom quote based on deployment requirements and scale.

6. WhyLabs

Best for: Data quality monitoring with open-source integration and LLM application tracking

WhyLabs is a data and ML monitoring platform with strong data quality features and integration with the open-source whylogs library.

Where This Tool Shines

WhyLabs focuses on data quality as the foundation of model performance. The platform catches data issues before they cause model problems, monitoring for schema changes, missing values, and distribution shifts in input data.

The open-source whylogs library enables teams to start monitoring locally before adopting the full platform. This approach reduces vendor lock-in while providing a clear upgrade path as monitoring needs grow. For LLM applications, WhyLabs offers specialized tracking for prompt inputs and model outputs.
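
The data quality checks mentioned above (schema changes and missing values) can be sketched without any library. The example below is a simplified illustration and does not use the whylogs API; the `profile` and `schema_issues` helpers, the column names, and the 10-point threshold are assumptions.

```python
def profile(rows):
    """Per-column missing-value rate and set of observed Python type names.

    Assumes `rows` is a non-empty list of dicts.
    """
    columns = {key for row in rows for key in row}
    report = {}
    for col in sorted(columns):
        values = [row.get(col) for row in rows]
        present = [v for v in values if v is not None]
        report[col] = {
            "missing_rate": 1 - len(present) / len(rows),
            "types": {type(v).__name__ for v in present},
        }
    return report

def schema_issues(baseline, current, max_missing_increase=0.1):
    """List columns that vanished, gained new types, or lost data vs. the baseline."""
    issues = []
    for col, base in baseline.items():
        if col not in current:
            issues.append(f"{col}: column missing")
            continue
        cur = current[col]
        new_types = cur["types"] - base["types"]
        if new_types:
            issues.append(f"{col}: new types {sorted(new_types)}")
        if cur["missing_rate"] - base["missing_rate"] > max_missing_increase:
            issues.append(f"{col}: missing rate rose to {cur['missing_rate']:.0%}")
    return issues

# Hypothetical batches: the second has a string where an int is expected and a null plan
baseline_rows = [{"age": 34, "plan": "pro"}, {"age": 29, "plan": "free"}]
current_rows = [{"age": "41", "plan": "pro"}, {"age": 38, "plan": None}]

issues = schema_issues(profile(baseline_rows), profile(current_rows))
for issue in issues:
    print(issue)
```

Comparing compact profiles instead of raw rows is the design choice that lets this kind of check run on every batch without storing the underlying data.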

Key Features

Data Quality Monitoring: Track data schema, completeness, and distributions to catch issues early.

Model Performance Tracking: Monitor predictions and model behavior in production environments.

Open-Source whylogs: Start with free local monitoring before scaling to the cloud platform.

Anomaly Detection: Automatically identify unusual patterns in data or model outputs.

LLM Monitoring: Track prompt quality, response characteristics, and usage patterns for language models.

Best For

Teams that value open-source tools and need strong data quality monitoring alongside model tracking. Particularly useful for organizations building LLM applications that need to monitor prompt and response quality.

Pricing

Free tier available for small-scale use; Pro plans start at $250 per month with volume-based scaling.

7. Evidently AI

Best for: Open-source ML monitoring with pre-built reports and Python workflow integration

Evidently AI is an open-source ML monitoring platform with comprehensive dashboards and strong community support.

Where This Tool Shines

Evidently AI provides production-ready monitoring without vendor lock-in. The open-source core is fully functional for teams that want to self-host, while the cloud platform adds collaboration and alerting features for growing teams.

The pre-built monitoring reports cover common use cases like data drift, model quality, and target drift. Teams can generate these reports with just a few lines of Python code, making it easy to integrate monitoring into existing ML pipelines and notebooks.

Key Features

Open-Source Core: Full-featured monitoring available free with no usage limits for self-hosted deployment.

Pre-Built Reports: Generate comprehensive monitoring dashboards with minimal code.

Data Drift Detection: Identify when input data distributions shift from training data patterns.

Model Quality Metrics: Track accuracy, precision, recall, and other performance indicators over time.

Python Integration: Works seamlessly with Jupyter notebooks and Python-based ML workflows.
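
The model quality metrics listed above have standard definitions, and computing them per batch shows how a drop in quality becomes visible over time. The sketch below uses plain Python rather than Evidently's API; the labels and predictions are made up for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Guard against division by zero when a class is never predicted or present
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }

# Hypothetical ground truth and predictions for two weekly batches
labels = [1, 0, 1, 1, 0, 0, 1, 0]
week_1 = classification_metrics(labels, [1, 0, 1, 1, 0, 1, 1, 0])
week_2 = classification_metrics(labels, [1, 0, 0, 1, 1, 1, 0, 0])

for name, metrics in [("week 1", week_1), ("week 2", week_2)]:
    print(name, metrics)
```

In this made-up data, recall falls from 1.0 to 0.5 between the two weeks, which is the kind of change a monitoring report is meant to surface.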

Best For

Data science teams comfortable with Python who want open-source monitoring they can customize and self-host. Ideal for organizations that prefer to avoid vendor dependencies or have strict data privacy requirements.

Pricing

Open-source version is completely free; cloud platform available with paid plans for team collaboration and advanced features.

8. Datadog ML Monitoring

Best for: Teams already using Datadog infrastructure monitoring who want unified observability

Datadog ML Monitoring integrates ML model tracking into Datadog's comprehensive observability platform.

Where This Tool Shines

Datadog ML Monitoring eliminates silos between infrastructure and model performance. Teams can correlate model degradation with infrastructure changes, database performance, or API latency—all from the same platform.

For organizations already using Datadog for application performance monitoring, adding ML monitoring creates a unified view of system health. The same alerting, dashboards, and incident management workflows that teams already know extend naturally to ML models.
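
To illustrate what correlating model behavior with infrastructure signals can look like, the sketch below computes a Pearson correlation between API latency and model error rate over the same time windows. It does not use Datadog's API; the `pearson` helper and both metric series are hypothetical.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical metrics sampled over the same six time windows
latency_ms = [120, 125, 130, 210, 340, 360]
error_rate = [0.02, 0.02, 0.03, 0.06, 0.11, 0.12]

r = pearson(latency_ms, error_rate)
print(f"correlation between latency and error rate: {r:.2f}")
```

A high correlation only suggests where to look; it does not show that latency caused the errors, so it works best as a starting point for investigation.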

Key Features

Unified Observability: Monitor infrastructure, applications, and ML models from a single platform.

Custom Metrics: Track any model-specific metric alongside standard performance indicators.

Alerting and Incidents: Use Datadog's mature alerting system for model performance issues.

APM Integration: Correlate model performance with application traces and service dependencies.

Integrations Ecosystem: Connect to hundreds of tools and services Datadog already supports.

Best For

Engineering teams already using Datadog for infrastructure and application monitoring. It makes the most sense when you want to consolidate monitoring tools rather than add a separate ML platform.

Pricing

ML monitoring is included with Datadog plans; specific pricing varies based on usage volume and features enabled.

9. Neptune.ai

Best for: Lightweight experiment tracking with metadata management and team collaboration

Neptune.ai is an experiment tracking and metadata management platform with flexible logging and collaboration features.

Where This Tool Shines

Neptune.ai focuses on making experiment tracking as frictionless as possible. The lightweight integration requires minimal code changes, and the flexible logging API handles any type of metadata—from simple metrics to complex objects.

The platform excels at team collaboration, making it easy to share experiments, compare approaches, and maintain institutional knowledge. Data scientists can work in their preferred environment—notebooks, IDEs, or command line—while Neptune automatically organizes and tracks everything.

Key Features

Experiment Tracking: Log and compare unlimited experiments with flexible metadata storage.

Metadata Management: Store any type of information about experiments, from hyperparameters to model artifacts.

Team Collaboration: Share experiments, create projects, and maintain knowledge across team members.

Flexible Logging API: Track metrics, parameters, files, and custom objects with simple Python calls.

IDE Integration: Works seamlessly with Jupyter, VS Code, PyCharm, and other development environments.

Best For

Data science teams that need experiment tracking without heavy infrastructure overhead. Particularly useful for organizations where collaboration and knowledge sharing are priorities.

Pricing

Free for individual users; Team plans start at $49 per user per month with additional storage and features.

Making the Right Choice

AI model monitoring serves two distinct needs, and understanding which category matters most to your organization determines the right tool.

For marketing teams and founders focused on brand visibility, Sight AI provides specialized tracking of how AI assistants like ChatGPT and Claude mention your brand. This matters when AI-powered search influences purchasing decisions in your category. Brandwatch offers similar capabilities within a broader brand intelligence platform for enterprises needing comprehensive monitoring across all channels.

For ML operations teams managing production models, the choice depends on your specific requirements. Arize AI suits teams that need drift detection and root cause analysis for high-stakes predictions, while Fiddler AI serves enterprises with explainability and compliance needs. WhyLabs and Evidently AI appeal to teams that value open-source flexibility. Weights & Biases and Neptune.ai focus on experiment tracking during development. Datadog ML Monitoring makes sense when you're already using Datadog's infrastructure platform.

Many organizations ultimately need both perspectives. Understanding how external AI models talk about your brand helps drive organic traffic and customer acquisition. Monitoring internal model performance ensures your own AI systems deliver reliable results. The tools in this guide address different parts of that equation.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.