As large language models become central to business operations (powering chatbots, content generation, and customer interactions), understanding how these models perform and how they reference your brand has become critical. LLM monitoring platforms help teams track model behavior, detect anomalies, measure performance metrics, and, increasingly, monitor how AI systems mention and recommend brands.

Whether you're running production LLM applications or tracking your brand's visibility across AI models like ChatGPT and Claude, the right monitoring platform can mean the difference between proactive optimization and costly blind spots. Here are nine platforms that excel at different aspects of LLM monitoring.

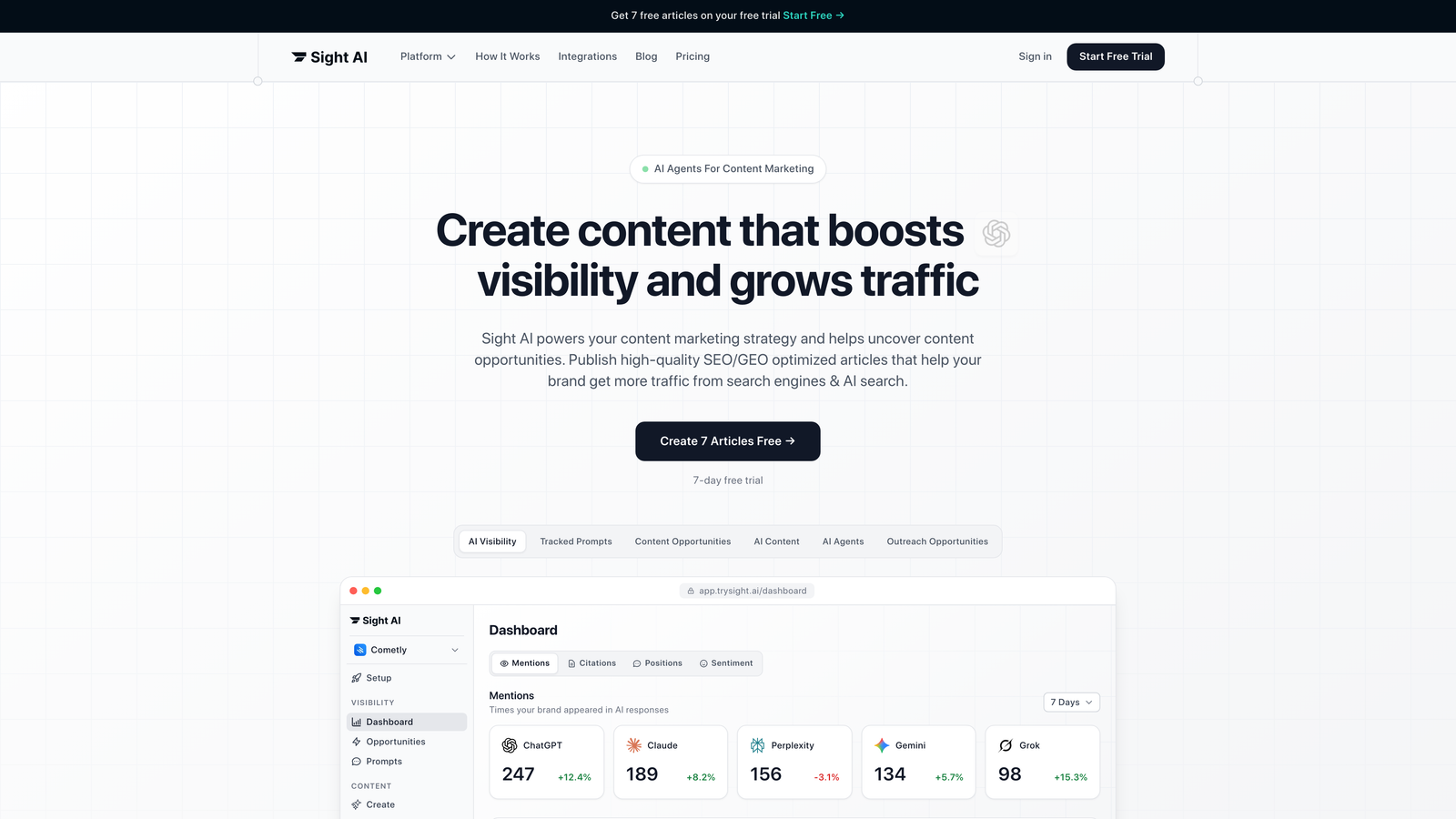

1. Sight AI

Best for: Tracking brand mentions and recommendations across major AI models

Sight AI monitors how large language models like ChatGPT, Claude, and Perplexity mention and recommend your brand in their responses.

Where This Tool Shines

While most LLM monitoring platforms focus on technical performance metrics, Sight AI addresses a critical business question: how are AI models talking about your company? As more users turn to AI for recommendations and research, understanding your brand's visibility in AI responses becomes as important as tracking traditional search rankings.

The platform provides daily monitoring of top AI models, giving you insight into sentiment trends and the specific prompts that trigger brand mentions. This visibility helps marketing teams understand their AI footprint and identify content opportunities to improve how models reference their brand.

Key Features

Brand Mention Tracking: Monitors how major AI models reference your company across different query types and contexts.

Sentiment Analysis: Analyzes whether AI-generated brand references are positive, neutral, or negative to track perception trends.

Prompt Tracking: Identifies which user questions and prompts trigger brand recommendations, revealing content gaps.

Daily Model Monitoring: Tracks ChatGPT, Claude, Perplexity, and other leading models for consistent visibility data.

Competitive Benchmarking: Compares your brand's AI visibility against competitors to identify positioning opportunities.
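
To make mention and prompt tracking more concrete, here is a minimal sketch that records which prompts lead to a response naming a given brand. Everything in it is an assumption for illustration: the sample responses, the brand names, and the `mention_report` helper are hypothetical, and it says nothing about how Sight AI works internally.

```python
import re
from collections import defaultdict

def mention_report(responses, brands):
    """Map each brand to the prompts whose response mentions it.

    `responses` is a dict of prompt -> model response text (hypothetical data).
    """
    report = defaultdict(list)
    for prompt, text in responses.items():
        for brand in brands:
            # Whole-word, case-insensitive match so "Acme" does not match "Acmeology".
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                report[brand].append(prompt)
    return dict(report)

# Hypothetical prompts and responses collected from an AI model.
sample = {
    "best crm for startups": "Popular options include Acme CRM and Globex.",
    "how to export contacts": "Most tools offer a CSV export button.",
    "acme alternatives": "If ACME is too pricey, consider Globex.",
}
report = mention_report(sample, ["Acme", "Globex", "Initech"])
```

Brands that never appear (here, `Initech`) are simply absent from the report, which is the kind of gap a content team would want to investigate.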

Best For

Marketing teams and agencies focused on AI visibility strategy, brands concerned about how AI models represent them, and companies looking to optimize content for AI recommendation engines. Particularly valuable for B2B SaaS companies where AI-driven research influences buying decisions.

Pricing

Pricing is available on request, with tiered plans based on monitoring scope and frequency. Packages vary by the number of brands tracked and AI models monitored.

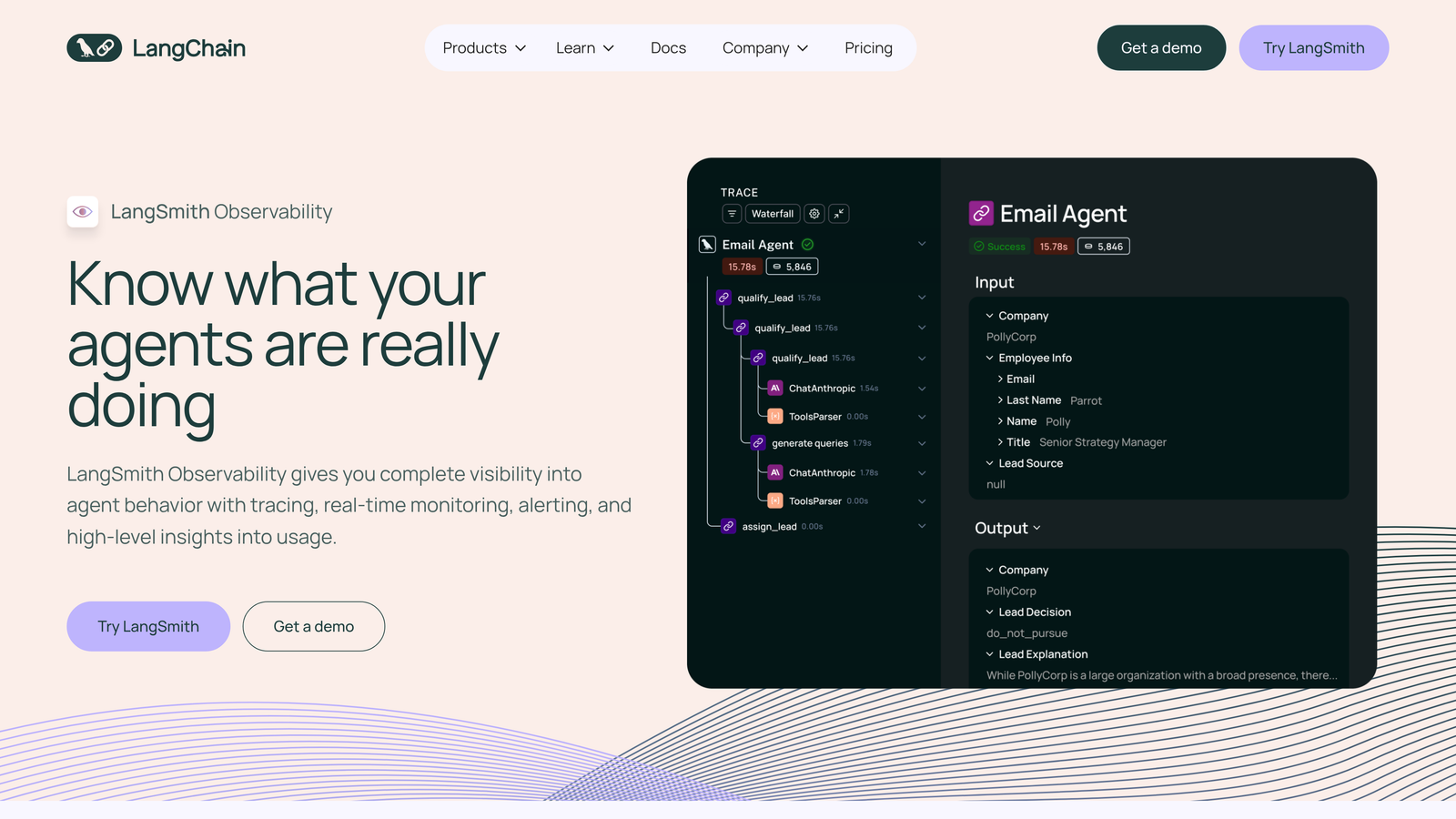

2. LangSmith

Best for: Debugging and tracing LangChain-based LLM applications

LangSmith is a comprehensive debugging platform from the creators of LangChain, designed specifically for tracing and evaluating LLM applications.

Where This Tool Shines

If you're building applications with LangChain, LangSmith provides the deepest native integration available. The platform captures every step of your LLM chain execution, making it easy to identify where things go wrong—whether that's a poorly performing prompt, an unexpected API response, or a logic error in your chain.

The trace visualization is particularly powerful, showing you the exact sequence of LLM calls, tool invocations, and data transformations. This level of detail turns debugging from guesswork into a systematic process.
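
The idea behind a trace can be sketched in a few lines of plain Python. The example below is not the LangSmith SDK; the `Tracer` class and the span names are hypothetical, and the `time.sleep` calls stand in for real retrieval and model requests. It only shows how nested steps can be recorded with their parent and duration.

```python
import time
from contextlib import contextmanager

class Tracer:
    """Hypothetical minimal tracer that records nested spans with timings."""

    def __init__(self):
        self.spans = []    # finished spans, in completion order
        self._stack = []   # names of the spans that are currently open

    @contextmanager
    def span(self, name):
        parent = self._stack[-1] if self._stack else None
        self._stack.append(name)
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            self._stack.pop()
            self.spans.append({"name": name, "parent": parent, "ms": elapsed_ms})

tracer = Tracer()
with tracer.span("chain"):
    with tracer.span("retrieve_docs"):
        time.sleep(0.01)   # stand-in for a vector search
    with tracer.span("llm_call"):
        time.sleep(0.02)   # stand-in for a model request

for s in tracer.spans:
    print(f"{s['name']:<14} parent={s['parent']} {s['ms']:.1f} ms")
```

A hosted tracing platform adds storage, a UI, and token and cost data on top of this basic structure.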

Key Features

Full Trace Visualization: Captures complete execution traces showing every step in your LangChain application with timing and cost data.

Prompt Versioning: Manages different prompt versions and enables A/B testing to optimize model outputs systematically.

Evaluation Datasets: Creates test datasets for automated evaluation, ensuring consistent model performance across updates.

Annotation Queues: Facilitates human review of model outputs with collaborative annotation workflows for quality improvement.

Production Dashboards: Monitors live applications with real-time metrics on latency, cost, and error rates.

Best For

Development teams building production LLM applications with LangChain, organizations needing systematic prompt optimization workflows, and teams requiring collaborative debugging tools for complex AI chains.

Pricing

Free tier available for individual developers and small projects. Paid plans start at $39 per month with increased trace retention and team collaboration features.

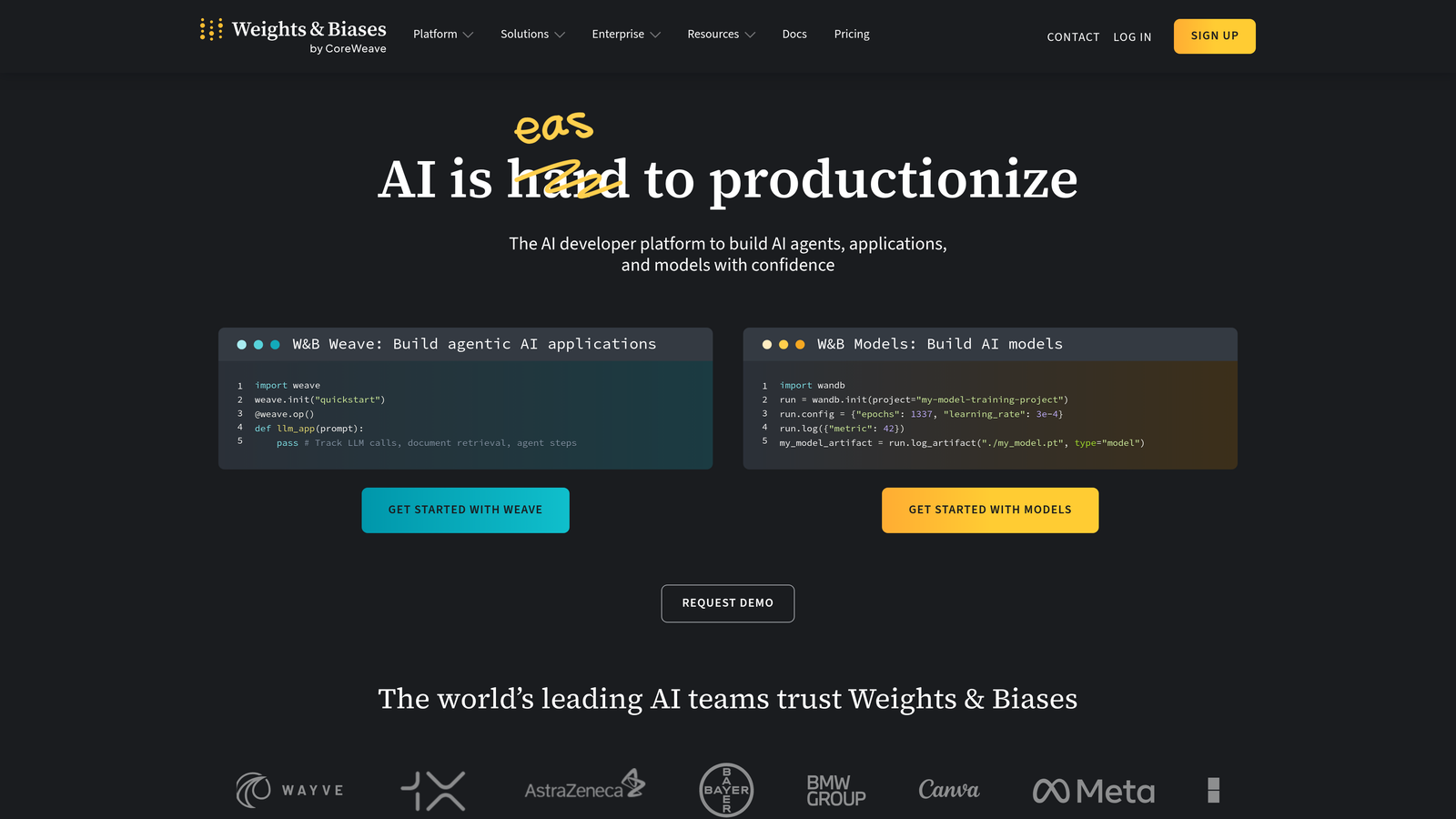

3. Weights & Biases

Best for: Experiment tracking and prompt engineering for ML teams

Weights & Biases is an industry-leading experiment tracking platform with robust support for LLM fine-tuning, prompt engineering, and model evaluation.

Where This Tool Shines

Weights & Biases excels at bringing scientific rigor to LLM development. The platform makes it easy to track hundreds of prompt variations, compare model outputs systematically, and understand which changes actually improve performance. This is crucial when you're iterating rapidly and need to avoid losing track of what worked.

The collaboration features are exceptional for larger teams. Multiple engineers can work on prompt optimization simultaneously, with full visibility into each other's experiments and results. The model registry ensures smooth handoffs from experimentation to production deployment.

Key Features

Experiment Tracking: Logs all experiments with full reproducibility, capturing code versions, hyperparameters, and environmental details.

Prompt Management: Organizes and versions prompts with performance metrics, making it easy to identify top-performing variations.

Model Registry: Manages model versions and deployment workflows with approval processes and rollback capabilities.

Team Collaboration: Enables shared dashboards, commenting, and report generation for cross-functional alignment.

Framework Integration: Works seamlessly with PyTorch, TensorFlow, Hugging Face, and major LLM libraries.

Best For

ML engineering teams running extensive prompt optimization experiments, organizations fine-tuning open-source models, and research teams requiring reproducible LLM development workflows. Particularly strong for teams already using W&B for traditional ML work.

Pricing

Free for individual users with unlimited experiments. Team plans start at $50 per user per month with additional storage and advanced features.

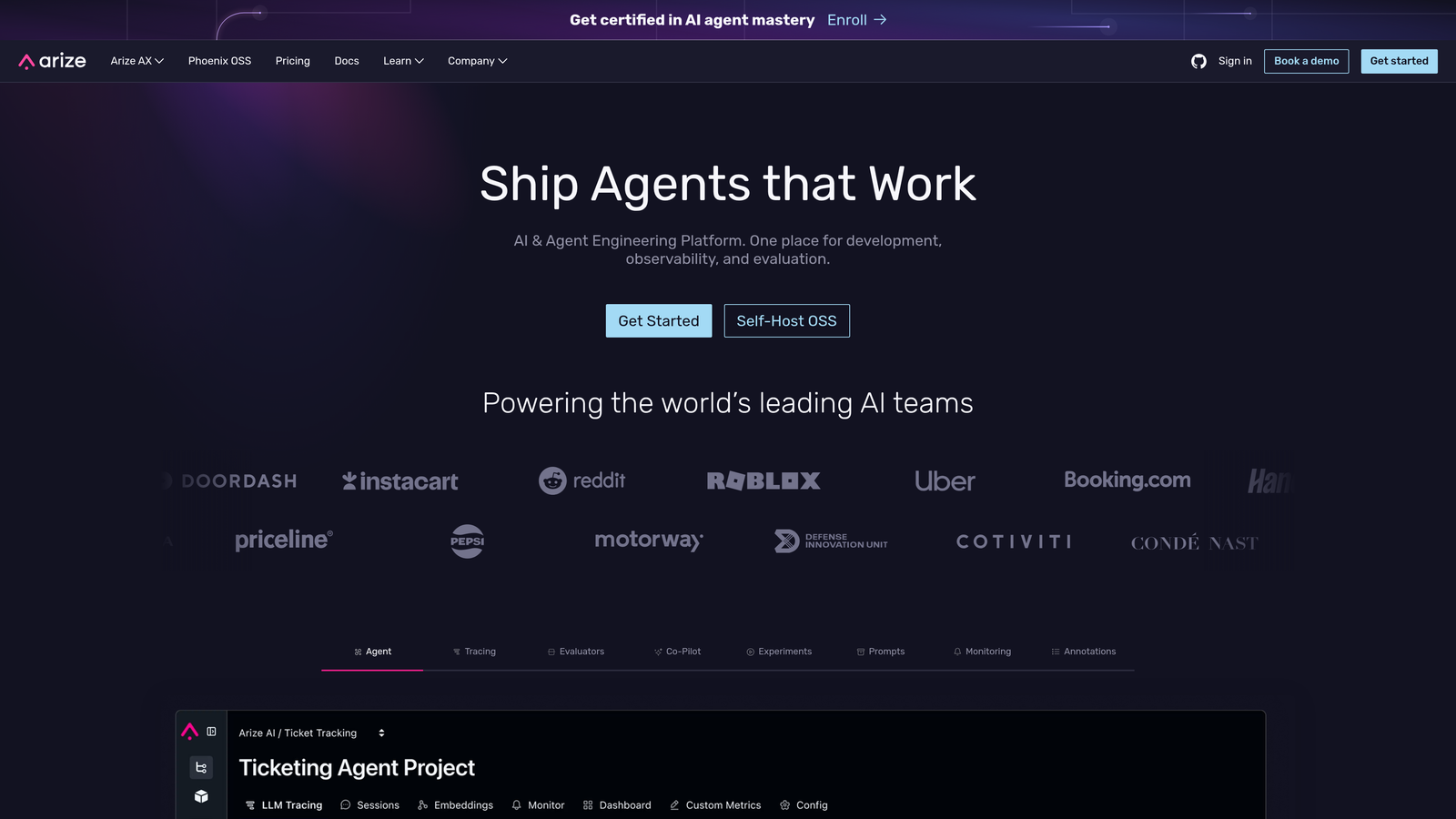

4. Arize AI

Best for: Production monitoring and embedding drift detection

Arize AI specializes in ML observability for production environments, with particular strength in embedding drift detection and real-time performance analysis for LLM applications.

Where This Tool Shines

Arize focuses on what happens after deployment. The platform excels at detecting subtle degradation in model performance—like when your embeddings start drifting because user language patterns have shifted, or when retrieval quality drops because your vector database is returning less relevant results.

The automated alerting system catches issues before they impact users significantly. Instead of waiting for customer complaints, you get notified when performance metrics cross predefined thresholds, with root cause analysis pointing you toward the specific component that's degrading.
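
One simple way to think about embedding drift with threshold alerting is to compare the average (centroid) of a baseline batch of embeddings against a recent batch. The sketch below assumes that approach; the two-dimensional vectors, the `0.1` threshold, and the function names are illustrative only, and production tools such as Arize may rely on different and richer statistics.

```python
import math

def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    dims = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dims)]

def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm

def drift_alert(baseline, current, threshold=0.1):
    """Return (distance, alert) where alert is True if the centroids diverge."""
    distance = cosine_distance(centroid(baseline), centroid(current))
    return distance, distance > threshold

# Toy 2-D "embeddings"; real embeddings have hundreds of dimensions.
baseline = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.1]]
similar = [[0.95, 0.05], [1.0, 0.0]]
shifted = [[0.1, 1.0], [0.0, 0.9]]

print(drift_alert(baseline, similar))
print(drift_alert(baseline, shifted))
```

The threshold is the "predefined threshold" in practice: it has to be tuned per application, since too low a value produces noisy alerts and too high a value misses gradual shifts.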

Key Features

Embedding Drift Detection: Monitors vector embeddings for distribution shifts that indicate changing data patterns or model degradation.

Automated Alerting: Sends notifications when performance metrics deviate from expected ranges, enabling proactive issue resolution.

Root Cause Analysis: Identifies specific factors contributing to performance degradation through automated diagnostic workflows.

LLM-Specific Metrics: Tracks relevance, coherence, toxicity, and other LLM-specific quality dimensions beyond traditional ML metrics.

Vector Database Integration: Connects with Pinecone, Weaviate, and other vector databases to monitor retrieval performance.

Best For

Production ML teams running RAG applications at scale, organizations with customer-facing LLM features requiring high reliability, and teams managing complex embedding-based systems. Ideal when downtime or quality degradation has significant business impact.

Pricing

Free tier available for small-scale deployments. Enterprise pricing is based on prediction volume and the features required; contact Arize for a custom quote.

5. Helicone

Best for: Lightweight cost tracking and request logging for OpenAI APIs

Helicone is a lightweight observability layer that sits between your application and LLM APIs, focused on cost tracking, request logging, and usage optimization.

Where This Tool Shines

Helicone's strength is simplicity. You add a single line of code to proxy your OpenAI requests through Helicone, and immediately gain visibility into costs, latency, and usage patterns. There's no complex instrumentation or SDK to learn—just change your API endpoint and you're monitoring.

The request caching feature can significantly reduce API costs by automatically serving identical requests from cache. For applications with repetitive queries, this alone can justify the platform cost several times over.
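
Exact-match request caching can be sketched as follows. This is a generic illustration rather than Helicone's implementation: `RequestCache`, `fake_model`, and the model name `example-model` are hypothetical, and the in-memory dict stands in for whatever store a real proxy would use.

```python
import hashlib
import json

class RequestCache:
    """Serve identical (model, messages) requests from memory."""

    def __init__(self, call_model):
        self._call_model = call_model   # function(model, messages) -> str
        self._store = {}
        self.hits = 0
        self.misses = 0

    def _key(self, model, messages):
        # Stable hash of the full request so only identical requests collide.
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, model, messages):
        key = self._key(model, messages)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = self._call_model(model, messages)
        self._store[key] = response
        return response

calls = []

def fake_model(model, messages):
    """Stand-in for a paid API call; records each invocation."""
    calls.append(messages)
    return f"echo: {messages[-1]['content']}"

cache = RequestCache(fake_model)
question = [{"role": "user", "content": "What are your opening hours?"}]
first = cache.complete("example-model", question)
second = cache.complete("example-model", question)
print(cache.hits, cache.misses, len(calls))
```

The second call never reaches the model, which is where the cost saving comes from. A real cache would also need an expiry policy so stale answers are not served indefinitely.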

Key Features

One-Line Integration: Requires minimal code changes—just route requests through Helicone's proxy to start monitoring.

Cost Dashboards: Tracks spending across models and users with real-time visibility into token usage and API costs.

Request Caching: Automatically caches and serves repeated requests, reducing API calls and associated costs.

Rate Limiting: Implements user-level rate limits to prevent abuse and control spending on customer-facing applications.

Custom Properties: Tags requests with custom metadata for segmented analysis by feature, user type, or business unit.

Best For

Startups and small teams wanting quick cost visibility without complex setup, developers building OpenAI-powered features who need basic observability, and organizations looking to reduce API costs through intelligent caching.

Pricing

Free up to 100,000 requests per month. Pro plan starts at $20 per month with increased request limits and advanced features like custom retention periods.

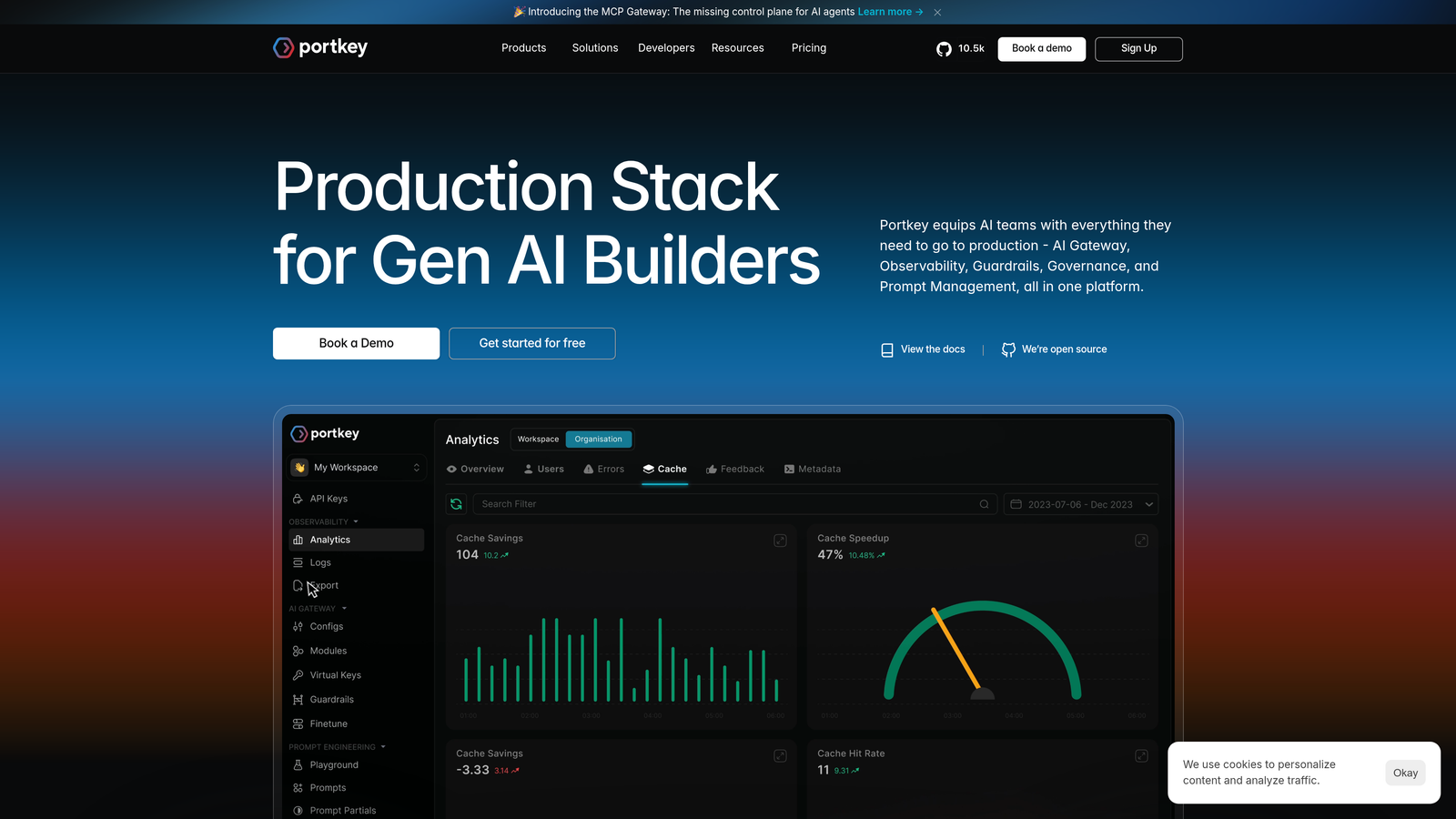

6. Portkey

Best for: Multi-provider LLM routing with automatic fallbacks

Portkey is an AI gateway and observability platform providing unified access to multiple LLM providers with built-in monitoring, fallbacks, and load balancing.

Where This Tool Shines

Portkey solves a critical challenge in production LLM applications: provider reliability. By routing through Portkey, you can automatically fall back to alternative models when your primary provider experiences downtime or rate limits. This means your application stays online even when OpenAI or Anthropic has issues.
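
The fallback pattern itself is straightforward to sketch. The code below is a generic illustration, not Portkey's API: `ProviderError`, `complete_with_fallback`, and the two provider functions are hypothetical stand-ins for real provider clients.

```python
class ProviderError(Exception):
    """Raised by a provider call on downtime or rate limiting (hypothetical)."""

def complete_with_fallback(prompt, providers, retries_per_provider=2):
    """Try each (name, call) pair in order, retrying before moving on."""
    errors = []
    for name, call in providers:
        for attempt in range(1, retries_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as exc:
                errors.append(f"{name} attempt {attempt}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))

def flaky_primary(prompt):
    raise ProviderError("rate limited")

def healthy_secondary(prompt):
    return f"answer to: {prompt}"

used, answer = complete_with_fallback(
    "Summarize this ticket",
    [("primary", flaky_primary), ("secondary", healthy_secondary)],
)
print(used, "->", answer)
```

A production gateway would typically add a backoff delay between retries and distinguish retryable errors from permanent ones; both are omitted here to keep the sketch short.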

The unified logging across providers is equally valuable. Instead of managing separate dashboards for OpenAI, Anthropic, and Cohere, you get a single view of all LLM usage with consistent metrics and cost tracking.

Key Features

Multi-Provider Routing: Connects to 20+ LLM providers through a single API, enabling easy model switching and experimentation.

Automatic Fallbacks: Implements retry logic and provider fallbacks to maintain uptime when primary models fail or hit rate limits.

Unified Logging: Aggregates logs from all providers into a single dashboard with consistent formatting and metrics.

Semantic Caching: Caches responses based on semantic similarity, not just exact matches, for more effective cost reduction.

Budget Controls: Sets spending limits by user, feature, or time period with automatic alerts when approaching thresholds.
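
To show how semantic caching differs from exact-match caching, here is a minimal sketch. It assumes a toy `embed` function based on word counts as a stand-in for a real embedding model, and a similarity threshold of `0.75` chosen only for the example; neither reflects how Portkey implements the feature.

```python
import math
import re
from collections import Counter

def embed(text):
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine_similarity(a, b):
    dot = sum(a[word] * b[word] for word in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

class SemanticCache:
    """Return a cached response when a new prompt is similar enough."""

    def __init__(self, threshold=0.75):
        self.threshold = threshold
        self._entries = []   # list of (embedding, response)

    def get(self, prompt):
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:
            score = cosine_similarity(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt, response):
        self._entries.append((embed(prompt), response))

cache = SemanticCache(threshold=0.75)
cache.put("What is your refund policy?", "Refunds are available within 30 days.")
near_hit = cache.get("What is the refund policy?")    # reworded, still a hit
miss = cache.get("How do I reset my password?")       # unrelated, no hit
```

The reworded question would miss an exact-match cache but hits here. The trade-off is that a threshold set too low can return an answer to a question the user did not actually ask.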

Best For

Applications requiring high availability across multiple LLM providers, teams experimenting with different models to optimize cost and quality, and organizations needing centralized control over LLM spending and usage policies.

Pricing

Free tier available for development and testing. Growth plans start at $49 per month with increased request limits; enterprise features are available on custom plans.

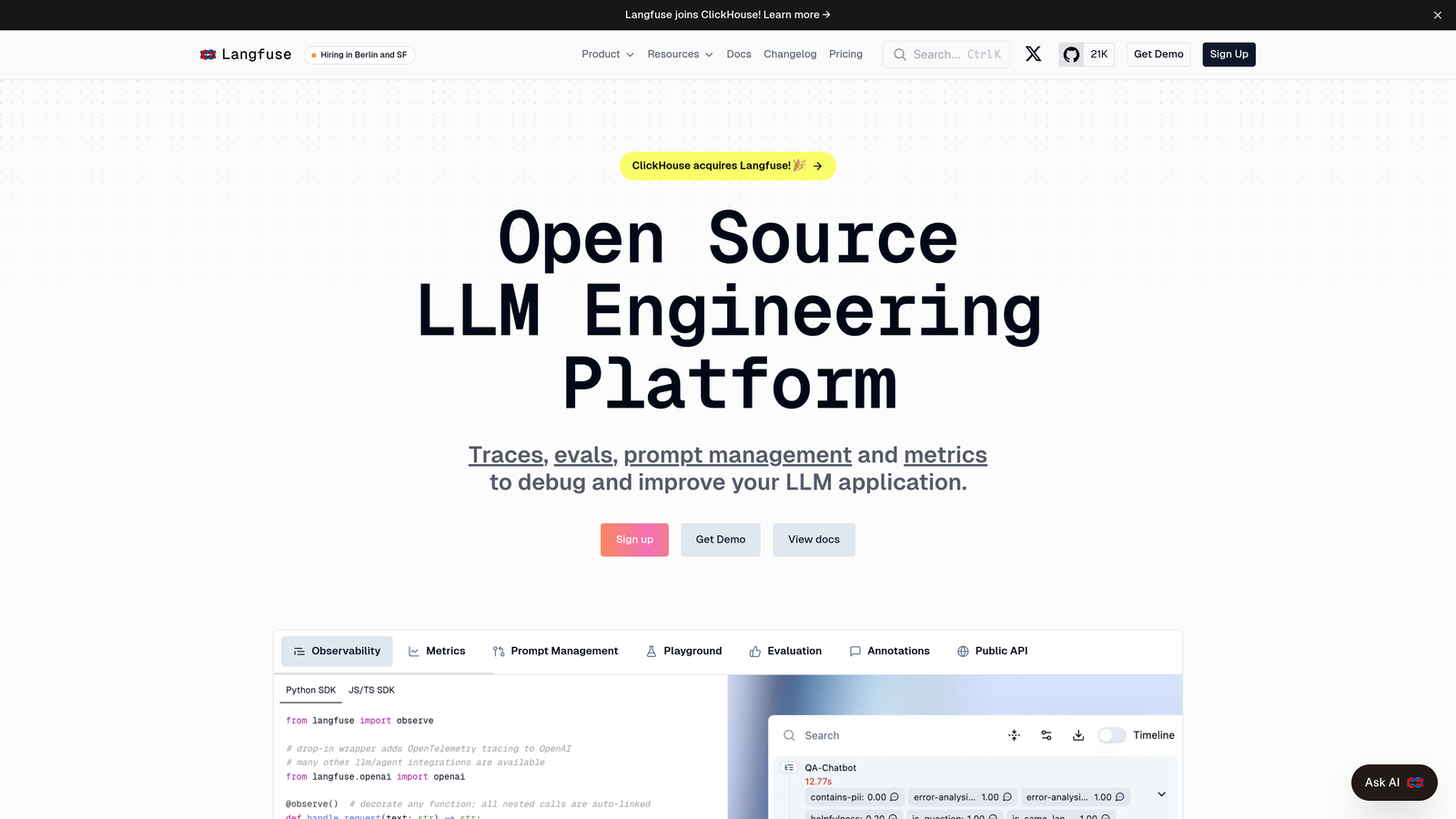

7. Langfuse

Best for: Open-source observability with self-hosting options

Langfuse is an open-source LLM observability platform offering tracing, analytics, and evaluation tools with self-hosting options for privacy-conscious teams.

Where This Tool Shines

Langfuse addresses a critical concern for many organizations: data privacy. Because it's open-source and self-hostable, you can run the entire observability stack within your infrastructure. Your prompts, user queries, and model responses never leave your environment—crucial for healthcare, finance, or any regulated industry.

Despite being open-source, Langfuse doesn't compromise on features. The trace visualization rivals commercial platforms, and the prompt management system is particularly well-designed for teams iterating on complex multi-step chains.

Key Features

Self-Hosting Option: Deploy the entire platform in your infrastructure for complete data control and compliance.

Trace Visualization: Provides detailed span-level tracing showing execution flow, timing, and costs for complex LLM chains.

Prompt Management: Versions and organizes prompts with performance tracking and collaborative editing capabilities.

User Feedback Collection: Captures thumbs up/down and detailed feedback directly within traces for quality improvement.

Cost Tracking: Breaks down spending by user, feature, or prompt version to identify optimization opportunities.
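
A cost breakdown of this kind boils down to multiplying token counts by a price and grouping the result. The sketch below assumes hypothetical request records, a made-up model name, and made-up per-1K-token prices; it is not Langfuse's data model.

```python
from collections import defaultdict

# Hypothetical per-1K-token prices in USD; real prices vary by provider and model.
PRICE_PER_1K = {"small-model": {"input": 0.0005, "output": 0.0015}}

def request_cost(record):
    """Cost of one request from its token counts and the price table."""
    price = PRICE_PER_1K[record["model"]]
    input_cost = (record["input_tokens"] / 1000) * price["input"]
    output_cost = (record["output_tokens"] / 1000) * price["output"]
    return input_cost + output_cost

def cost_by(records, field):
    """Total cost grouped by any record field, e.g. user or prompt version."""
    totals = defaultdict(float)
    for record in records:
        totals[record[field]] += request_cost(record)
    return dict(totals)

records = [
    {"user": "alice", "prompt_version": "v1", "model": "small-model",
     "input_tokens": 2000, "output_tokens": 1000},
    {"user": "bob", "prompt_version": "v2", "model": "small-model",
     "input_tokens": 1000, "output_tokens": 1000},
    {"user": "alice", "prompt_version": "v2", "model": "small-model",
     "input_tokens": 4000, "output_tokens": 2000},
]
by_user = cost_by(records, "user")
by_version = cost_by(records, "prompt_version")
```

Grouping by `prompt_version` is what makes it possible to see whether a longer, more detailed prompt is worth its extra token cost.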

Best For

Organizations with strict data privacy requirements, teams wanting to avoid vendor lock-in with cloud platforms, and companies in regulated industries requiring on-premise deployment. Also ideal for developers who prefer open-source tools with community support.

Pricing

Free self-hosted version with full features. Cloud-hosted option starts at $59 per month for teams wanting managed infrastructure without setup complexity.

8. Datadog LLM Observability

Best for: Enterprise teams with existing Datadog APM infrastructure

Datadog LLM Observability is an enterprise-grade monitoring solution built into the Datadog platform, offering end-to-end tracing for AI applications within existing APM workflows.

Where This Tool Shines

If you're already using Datadog for application monitoring, their LLM observability integrates seamlessly into your existing workflows. You get the same dashboards, alerting system, and correlation capabilities you use for traditional services—but now extended to your LLM calls.

This unified approach is powerful for debugging. When users report slow response times, you can trace the issue from the frontend request through your backend services to the specific LLM call that's causing latency, all within a single platform.

Key Features

Native APM Integration: Connects LLM traces with existing application performance monitoring for end-to-end visibility.

End-to-End Tracing: Follows requests from user interaction through backend services to LLM calls and back.

Token Usage Tracking: Monitors token consumption and associated costs with attribution to specific services or features.

Quality Evaluations: Implements automated checks for output quality, safety, and compliance with organizational standards.

Enterprise Security: Provides role-based access control, audit logging, and compliance features required by large organizations.

Best For

Enterprise organizations with existing Datadog infrastructure, teams requiring unified observability across traditional and AI services, and companies needing enterprise-grade security and compliance features. Best when you want to avoid managing multiple monitoring platforms.

Pricing

Included as part of Datadog APM subscriptions. Pricing varies based on overall Datadog usage and spans ingested—contact Datadog for specific quotes based on your infrastructure scale.

9. WhyLabs

Best for: Privacy-preserving monitoring without storing sensitive data

WhyLabs is a data-centric AI monitoring platform using statistical profiling to detect data quality issues, model drift, and anomalies without storing sensitive data.

Where This Tool Shines

WhyLabs takes a fundamentally different approach to monitoring. Instead of logging every request and response, it generates statistical profiles of your data—capturing distributions, patterns, and anomalies without storing the actual content. This means you can monitor LLM applications handling sensitive data without creating a compliance nightmare.
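
The core idea of profiling without storing content can be sketched with running statistics. The example below tracks only prompt length using Welford's online algorithm; the `LengthProfile` class is hypothetical and far simpler than the profiles WhyLabs produces, but it shows that the raw text can be discarded after each update.

```python
import math

class LengthProfile:
    """Running statistics over text lengths; the text itself is never stored."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0        # running sum of squared deviations (Welford)
        self.minimum = None
        self.maximum = None

    def update(self, text):
        n = len(text)
        self.count += 1
        delta = n - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (n - self.mean)
        self.minimum = n if self.minimum is None else min(self.minimum, n)
        self.maximum = n if self.maximum is None else max(self.maximum, n)

    @property
    def stddev(self):
        """Sample standard deviation, or 0.0 with fewer than two values."""
        return math.sqrt(self._m2 / (self.count - 1)) if self.count > 1 else 0.0

profile = LengthProfile()
for prompt in ["hi", "hello", "tell me more"]:
    profile.update(prompt)   # only the length is folded into the profile

print(profile.count, round(profile.mean, 2), profile.minimum, profile.maximum)
```

Comparing today's profile against last week's is then enough to flag a shift in input patterns, without either period's prompts ever being retained.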

The LangKit integration adds LLM-specific guardrails, detecting issues like prompt injection attempts, toxic outputs, or personally identifiable information in responses—all while maintaining the privacy-preserving architecture.

Key Features

Statistical Profiling: Creates privacy-preserving profiles that capture data characteristics without storing raw inputs or outputs.

Data Quality Monitoring: Detects anomalies in LLM inputs that could indicate data pipeline issues or adversarial attacks.

Output Validation: Implements guardrails to catch toxic content, PII leakage, or off-topic responses before they reach users.

Drift Detection: Identifies distribution shifts in inputs or outputs without requiring access to historical raw data.

LangKit Integration: Adds LLM-specific metrics for prompt injection detection, hallucination monitoring, and safety evaluation.

Best For

Organizations handling sensitive data like healthcare records or financial information, teams requiring compliance with strict data retention policies, and companies wanting monitoring without creating additional data storage liability. Particularly valuable for customer-facing applications where output safety is critical.

Pricing

Free tier available for small-scale monitoring. Paid plans start at $250 per month with pricing scaling based on data volume and retention requirements.

Making the Right Choice

The LLM monitoring landscape has evolved into two distinct categories, and most organizations will eventually need both. Technical observability platforms like LangSmith, Arize, and Helicone track model performance, latency, costs, and application behavior. Brand visibility platforms like Sight AI monitor how external AI models discuss and recommend your company.

For technical monitoring, your choice depends heavily on your existing infrastructure and primary use case. Teams building with LangChain get the most value from LangSmith's native integration. Organizations already using Datadog should start there to avoid platform sprawl. Startups wanting quick cost visibility with minimal setup will appreciate Helicone's simplicity.

If you're running production applications requiring high reliability, Portkey's multi-provider fallbacks and Arize's drift detection become essential. Privacy-conscious teams or those in regulated industries should prioritize Langfuse or WhyLabs for their self-hosting and privacy-preserving capabilities.

For brand visibility monitoring, Sight AI addresses a different but increasingly critical question: how are AI models representing your company to users? As more people turn to ChatGPT and Claude for recommendations and research, understanding your AI footprint becomes as important as tracking search rankings. This visibility helps marketing teams identify content gaps and optimize how AI systems reference their brand.

Consider starting with a lightweight solution like Helicone for basic cost and usage tracking, then adding specialized tools as your needs grow. Most platforms offer free tiers that let you test integration complexity and feature fit before committing. The key is matching the platform's strengths to your specific challenges—whether that's debugging complex chains, controlling costs, ensuring uptime, or tracking brand visibility.

Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth. Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms.