AI systems now influence everything from hiring decisions to loan approvals, and the biases baked into these models can quietly undermine fairness at scale. Whether you're deploying large language models for customer interactions or using ML systems for critical business decisions, detecting and mitigating bias isn't optional anymore. It's increasingly a compliance requirement, a brand protection strategy, and a competitive differentiator.

Here are the top tools that help organizations identify, measure, and address bias across AI systems, from pre-deployment auditing to continuous production monitoring.

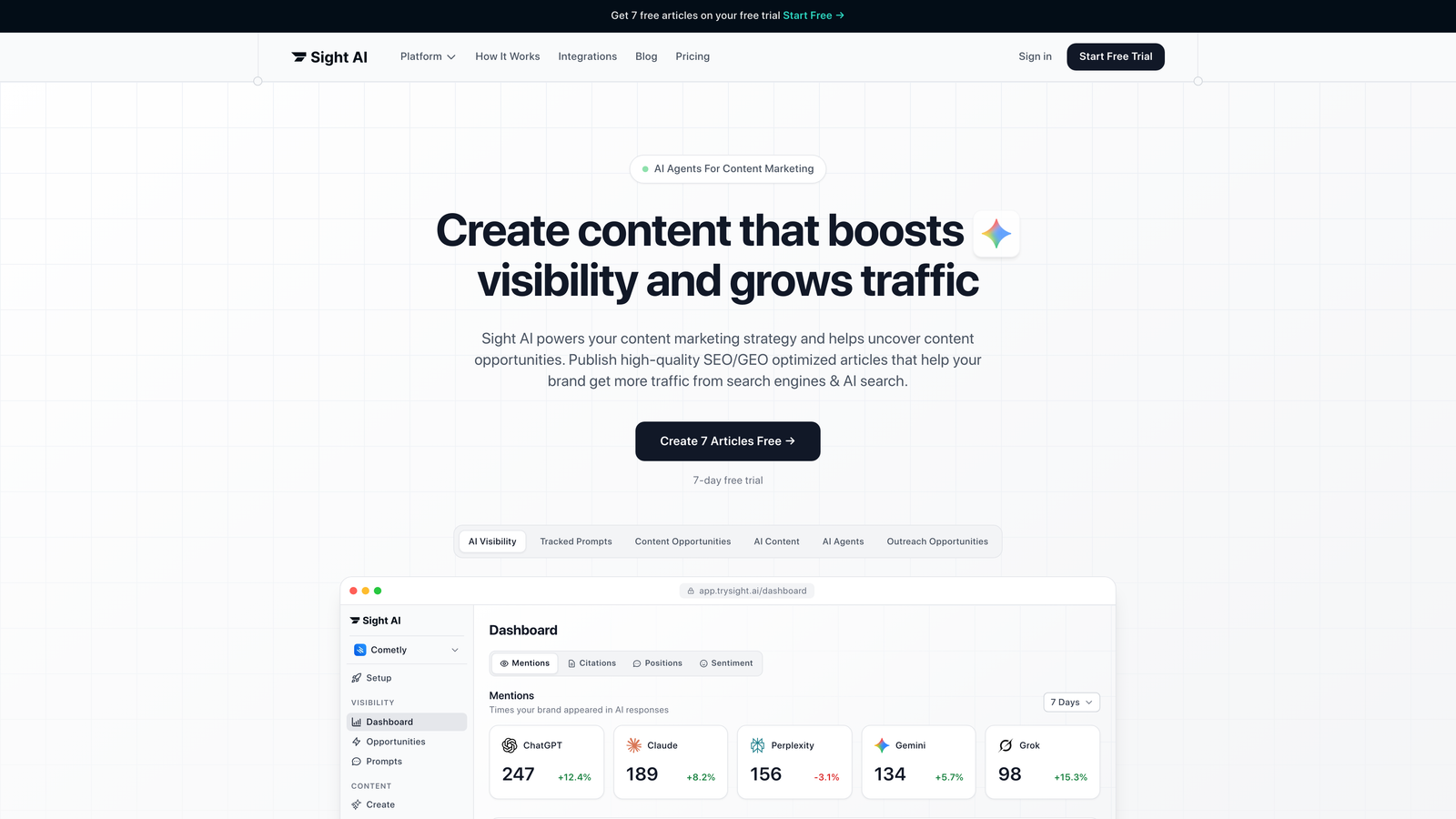

1. Sight AI

Best for: Monitoring how AI models characterize your brand and detecting biased representations

Sight AI tackles a bias problem most detection tools miss: how third-party AI models like ChatGPT, Claude, and Perplexity talk about your brand.

Where This Tool Shines

While traditional bias detection focuses on your internal models, Sight AI monitors external AI systems that millions of users query daily. When someone asks ChatGPT about your industry or competitors, you need to know if your brand is being misrepresented, ignored, or characterized unfairly.

The platform provides real-time tracking of brand mentions across major AI models, with sentiment analysis that flags when AI-generated content skews negative or inaccurate. This addresses a growing concern: biased training data in large language models can perpetuate outdated or false information about companies, products, and entire industries.

Key Features

Brand Mention Tracking: Monitors how frequently and accurately your brand appears in responses from ChatGPT, Claude, Perplexity, and other AI models.

Sentiment Analysis: Analyzes the tone and context of AI-generated content about your brand to identify negative bias or mischaracterization.

Prompt Tracking: Shows which user queries trigger mentions of your brand, helping you understand the context in which bias appears.

Bias Alerts: Automated notifications when AI models generate biased, negative, or factually incorrect content about your organization.

Competitive Benchmarking: Compare how AI models characterize your brand versus competitors to identify representation gaps.

Best For

Marketing teams and brand managers who need visibility into how AI search engines and chatbots represent their company. Particularly valuable for organizations in competitive industries where AI-generated recommendations influence customer decisions.

Pricing

Contact for pricing. Enterprise plans scale based on brand monitoring volume and number of AI platforms tracked.

2. IBM AI Fairness 360

Best for: Technical teams building custom ML pipelines with comprehensive fairness metrics

IBM AI Fairness 360 (AIF360) is the Swiss Army knife of bias detection toolkits, offering more than 70 fairness metrics alongside a set of bias mitigation algorithms.

Where This Tool Shines

This open-source library gives data scientists granular control over every stage of the ML pipeline. You can detect bias in training data before building models, during model training itself, and after deployment when making predictions.

The toolkit supports multiple fairness definitions—demographic parity, equalized odds, disparate impact—because fairness isn't one-size-fits-all. Different use cases require different fairness criteria, and AIF360 lets you choose the metrics that matter for your specific application.
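To make the three definitions concrete, here is a minimal sketch in plain Python. It does not call AIF360; the function names (`group_rates`, `fairness_summary`) and the toy data are assumptions made for illustration. The disparate impact ratio is computed symmetrically as the lowest group selection rate divided by the highest, which is one common convention.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true-positive rate, and false-positive rate."""
    counts = defaultdict(lambda: {"n": 0, "sel": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0})
    for yt, yp, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["sel"] += yp
        if yt == 1:
            c["pos"] += 1
            c["tp"] += yp
        else:
            c["neg"] += 1
            c["fp"] += yp
    return {
        g: {
            "selection_rate": c["sel"] / c["n"],
            "tpr": c["tp"] / c["pos"] if c["pos"] else 0.0,
            "fpr": c["fp"] / c["neg"] if c["neg"] else 0.0,
        }
        for g, c in counts.items()
    }


def fairness_summary(y_true, y_pred, groups):
    """Summarize the gaps between groups under three fairness definitions."""
    rates = group_rates(y_true, y_pred, groups)
    sel = [r["selection_rate"] for r in rates.values()]
    tpr = [r["tpr"] for r in rates.values()]
    fpr = [r["fpr"] for r in rates.values()]
    return {
        # Demographic parity: groups should be selected at similar rates.
        "demographic_parity_difference": max(sel) - min(sel),
        # Disparate impact: ratio of lowest to highest selection rate.
        "disparate_impact_ratio": min(sel) / max(sel) if max(sel) else 1.0,
        # Equalized odds: groups should have similar TPR and similar FPR.
        "equalized_odds_difference": max(max(tpr) - min(tpr), max(fpr) - min(fpr)),
    }


# Toy data (hypothetical): binary labels and predictions for two groups.
groups = ["a"] * 4 + ["b"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
summary = fairness_summary(y_true, y_pred, groups)
print(summary)
```

The same predictions can look acceptable under one definition and poor under another, which is why choosing the metric up front matters.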

Key Features

70+ Fairness Metrics: Comprehensive library covering demographic parity, equalized odds, calibration, and other fairness definitions.

Multi-Stage Mitigation: Pre-processing algorithms clean biased training data, in-processing methods adjust model training, and post-processing techniques modify predictions.

Framework Flexibility: Works with TensorFlow, PyTorch, scikit-learn, and other major ML frameworks.

Extensive Documentation: Tutorials, research papers, and example notebooks help teams implement fairness best practices.

Active Research Integration: IBM continuously updates the toolkit with cutting-edge fairness research from their AI labs.
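As an illustration of what a pre-processing mitigation can look like, the sketch below implements reweighing, a well-known technique that assigns each training example a weight so that group membership and label become statistically independent in the weighted data. This is a from-scratch version written for this article, not AIF360's API; `reweighing_weights` and `weighted_positive_rate` are hypothetical helper names.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each example by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Share of a group's total weight that falls on positive labels."""
    total = sum(w for g, w in zip(groups, weights) if g == group)
    positive = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    return positive / total


# Toy training set (hypothetical): group "a" has mostly positive labels,
# group "b" mostly negative ones.
groups = ["a"] * 4 + ["b"] * 4
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(rate_a, rate_b)
```

The resulting weights can then be passed to any learner that accepts per-sample weights, so the model itself does not need to change.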

Best For

Data science teams building ML systems from scratch who need maximum flexibility in fairness testing. Ideal for organizations with technical resources to implement custom bias mitigation strategies.

Pricing

Free and open-source. No licensing costs, making it accessible for teams of any size.

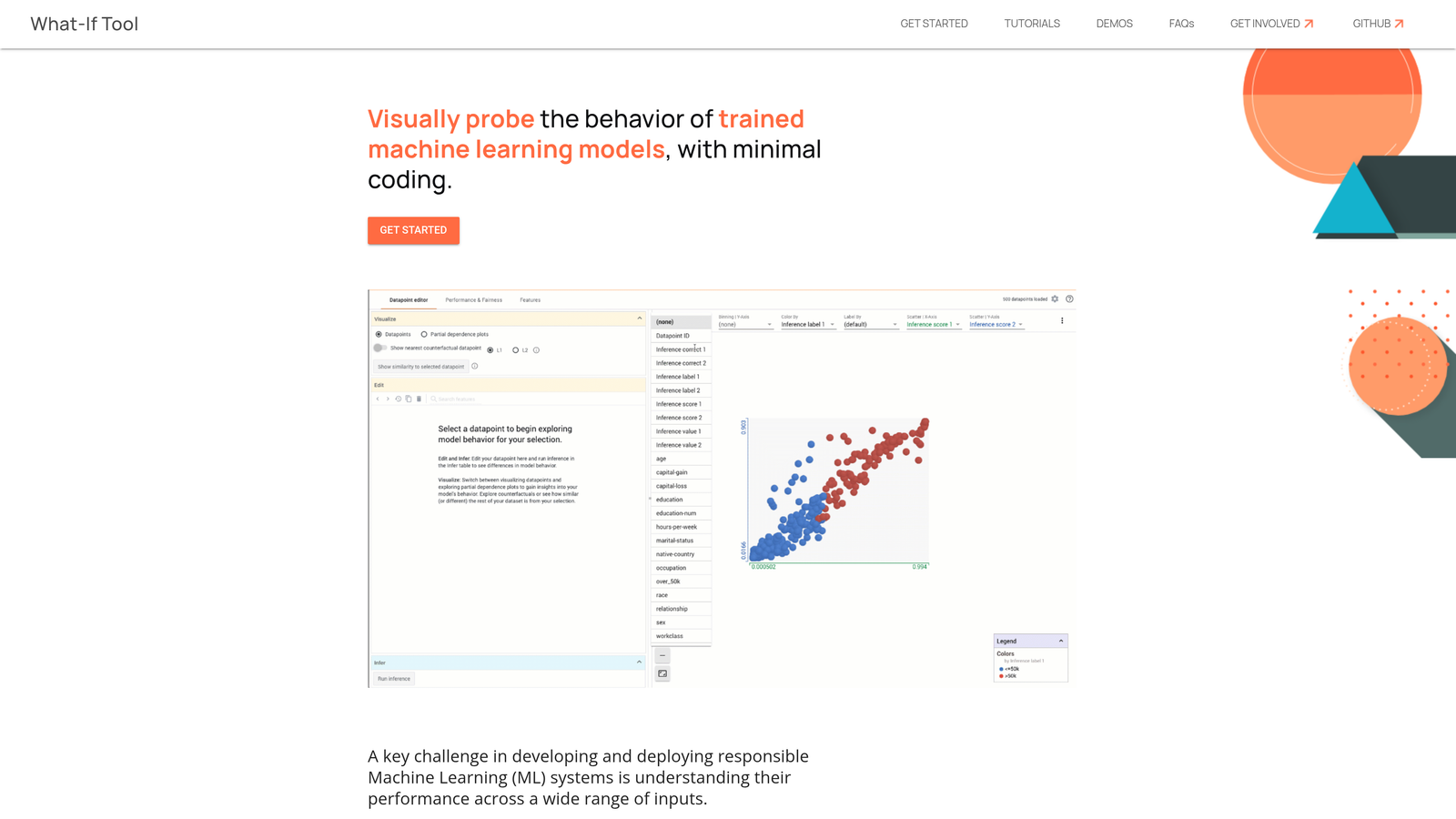

3. Google What-If Tool

Best for: Visual exploration of model behavior without writing code

Google What-If Tool makes bias detection accessible to non-technical stakeholders through an interactive visual interface.

Where This Tool Shines

You don't need to write Python scripts to understand how your model treats different demographic groups. The What-If Tool lets you load a dataset, point it at your model, and immediately start exploring fairness through visual dashboards.

The counterfactual exploration feature is particularly powerful. You can ask questions like "What if this applicant had been a different gender?" and instantly see how the model's prediction changes. This makes bias patterns obvious even to non-technical team members.
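Under the hood, a counterfactual check is simple: copy a record, change one feature, and compare predictions. The sketch below shows the idea in plain Python. It is not the What-If Tool's API; `counterfactual_flip` and the deliberately biased `toy_model` are hypothetical and exist only to demonstrate the pattern.

```python
def counterfactual_flip(predict, record, feature, alternatives):
    """Return the baseline prediction and predictions with `feature` swapped."""
    baseline = predict(record)
    results = {}
    for value in alternatives:
        if value == record[feature]:
            continue  # skip the value the record already has
        changed = {**record, feature: value}
        results[value] = predict(changed)
    return baseline, results


def toy_model(applicant):
    # Hypothetical, deliberately biased scoring rule for demonstration only.
    score = 10 * applicant["years_experience"]
    if applicant["gender"] == "male":
        score += 20
    return 1 if score >= 50 else 0


applicant = {"years_experience": 4, "gender": "female"}
baseline, flipped = counterfactual_flip(toy_model, applicant, "gender", ["female", "male"])
print(baseline, flipped)
```

If the prediction changes when only a protected attribute changes, that record is direct evidence the model is using the attribute, or something tightly coupled to it.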

Key Features

No-Code Interface: Point-and-click exploration of model behavior, with no programming knowledge required.

Fairness Slicing: Automatically compare model performance across demographic subgroups to identify disparities in outcomes and error rates.

Counterfactual Analysis: Test how changing specific features affects predictions to understand bias mechanisms.

TensorFlow Integration: Works seamlessly with TensorFlow models and integrates into Jupyter notebooks.

Custom Thresholds: Adjust decision thresholds for different groups to optimize for fairness constraints.

Best For

Cross-functional teams where product managers, compliance officers, and data scientists need to collaborate on fairness assessments. Perfect for organizations prioritizing accessibility over deep customization.

Pricing

Free and open-source. Part of Google's PAIR (People + AI Research) initiative.

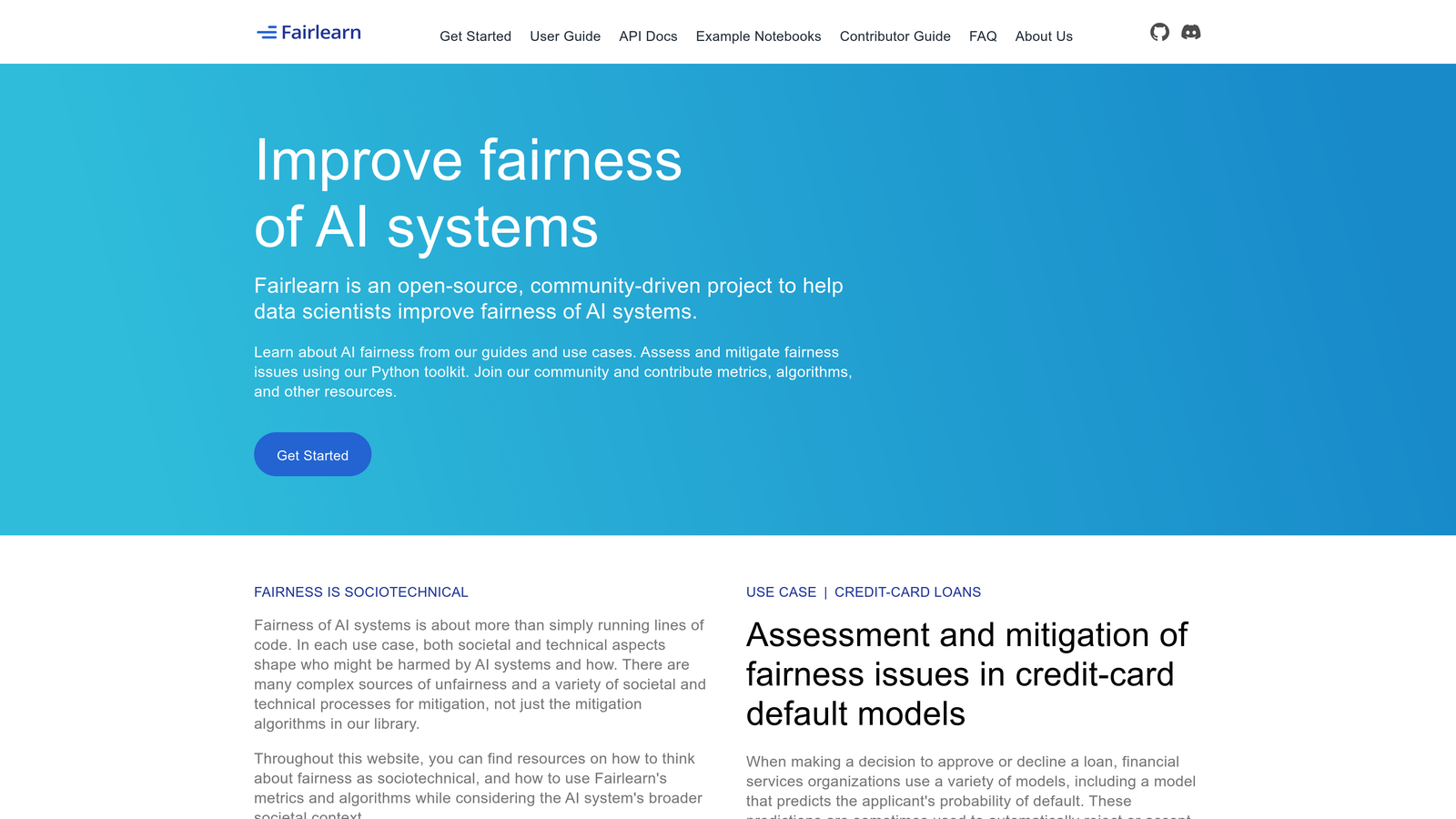

4. Microsoft Fairlearn

Best for: Python developers integrating fairness into scikit-learn workflows

Microsoft Fairlearn provides a Python-native approach to bias detection and mitigation that integrates smoothly with existing ML pipelines.

Where This Tool Shines

Fairlearn follows scikit-learn conventions, making it immediately familiar to Python data scientists. The library focuses on two core capabilities: assessing fairness through standardized metrics, and mitigating unfairness through algorithmic interventions.

The threshold optimization approach is particularly practical. Instead of retraining a model, you apply group-specific decision thresholds to its existing scores, which lets you meet a fairness goal while giving up as little overall accuracy as possible. This makes it easier to retrofit fairness into existing systems.
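A simplified version of group-specific thresholding can be sketched in a few lines. This is an illustration only: Fairlearn's own post-processing (`ThresholdOptimizer`) solves a more general optimization, and the helper names and toy scores below are assumptions. Here each group gets the threshold that selects the same target fraction of its members.

```python
def threshold_for_rate(scores, target_rate):
    """Threshold such that about target_rate of scores satisfy score >= threshold."""
    ranked = sorted(scores, reverse=True)
    k = round(target_rate * len(ranked))
    if k <= 0:
        return float("inf")  # select nobody
    return ranked[k - 1]


def per_group_thresholds(scores, groups, target_rate):
    """Compute one threshold per group so selection rates line up."""
    by_group = {}
    for score, group in zip(scores, groups):
        by_group.setdefault(group, []).append(score)
    return {g: threshold_for_rate(v, target_rate) for g, v in by_group.items()}


def predict_with_thresholds(scores, groups, thresholds):
    """Apply each record's group threshold to its model score."""
    return [1 if s >= thresholds[g] else 0 for s, g in zip(scores, groups)]


# Toy model scores (hypothetical). A single cutoff of 0.65 would select
# three members of group "a" and nobody from group "b".
groups = ["a"] * 4 + ["b"] * 4
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.5, 0.3, 0.2]

thresholds = per_group_thresholds(scores, groups, target_rate=0.5)
preds = predict_with_thresholds(scores, groups, thresholds)
print(thresholds, preds)
```

One design consequence worth noting: this kind of post-processing needs the sensitive attribute at prediction time, which may not be available or permitted in every deployment.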

Key Features

Scikit-Learn Integration: Follows familiar API patterns for seamless integration into existing Python ML workflows.

Assessment Metrics: Built-in fairness metrics for classification and regression tasks across demographic groups.

Mitigation Algorithms: Reductions approach and threshold optimization for addressing identified bias.

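Interactive Dashboards: Visual tools for exploring fairness-accuracy tradeoffs across different mitigation strategies. In recent releases the interactive dashboard is provided through the companion Responsible AI Toolbox (`raiwidgets`) rather than the core library.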

Community Development: Active open-source community contributing new algorithms and use case examples.

Best For

Python-first data science teams already using scikit-learn who want to add fairness capabilities without changing their development workflow.

Pricing

Free and open-source under MIT license. No commercial restrictions on usage.

5. Fiddler AI

Best for: Enterprise-scale monitoring of production ML systems with real-time bias detection

Fiddler AI shifts bias detection from a pre-deployment checkpoint to continuous production monitoring.

Where This Tool Shines

Models that pass fairness audits during development can develop bias drift in production as data distributions change. Fiddler monitors your live models for degrading fairness metrics, alerting you before biased predictions cause compliance issues or customer harm.

The platform combines bias detection with explainability features, so when fairness metrics degrade, you can quickly diagnose which features or data segments are driving the problem. This speeds up remediation significantly compared to treating bias and explainability as separate concerns.
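The monitoring idea can be sketched as a rolling window over recent predictions that recomputes a fairness gap and checks it against a limit. This is a generic illustration, not Fiddler's API; the `FairnessDriftMonitor` class, its window size, and the 0.2 limit are assumptions chosen for the example.

```python
from collections import deque


class FairnessDriftMonitor:
    """Track the selection-rate gap between groups over recent predictions."""

    def __init__(self, window_size, max_gap):
        self.window = deque(maxlen=window_size)  # oldest entries drop off
        self.max_gap = max_gap

    def observe(self, group, prediction):
        self.window.append((group, prediction))

    def selection_rate_gap(self):
        totals, selected = {}, {}
        for group, pred in self.window:
            totals[group] = totals.get(group, 0) + 1
            selected[group] = selected.get(group, 0) + pred
        if len(totals) < 2:
            return 0.0  # a gap needs at least two groups
        rates = [selected[g] / totals[g] for g in totals]
        return max(rates) - min(rates)

    def breached(self):
        return self.selection_rate_gap() > self.max_gap


monitor = FairnessDriftMonitor(window_size=8, max_gap=0.2)

# Launch-time traffic (hypothetical): both groups approved at the same rate.
for group, pred in [("a", 1), ("b", 1), ("a", 0), ("b", 0)] * 2:
    monitor.observe(group, pred)
breached_before = monitor.breached()

# Later traffic (hypothetical): approvals drift toward group "a" only.
for group, pred in [("a", 1), ("b", 0)] * 4:
    monitor.observe(group, pred)
breached_after = monitor.breached()

print(breached_before, breached_after, monitor.selection_rate_gap())
```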

Key Features

Real-Time Bias Drift Detection: Continuous monitoring of fairness metrics in production with automated alerts when thresholds are breached.

Explainability Integration: Understand which features contribute to biased predictions through SHAP values and other explanation methods.

Compliance Reporting: Generate audit-ready documentation for regulatory requirements like the EU AI Act.

LLM Monitoring: Extends bias detection to large language models, tracking toxic outputs and fairness across demographic mentions.

Multi-Model Dashboards: Monitor fairness across entire model portfolios from a single interface.

Best For

Large enterprises running dozens or hundreds of ML models in production who need centralized monitoring and compliance documentation capabilities.

Pricing

Enterprise pricing based on number of models monitored and prediction volume. Contact for custom quotes.

6. Arthur AI

Best for: Automated bias alerts and root cause analysis for production ML systems

Arthur AI focuses on making production monitoring actionable through intelligent alerting and automated diagnostics.

Where This Tool Shines

Arthur's strength is reducing alert fatigue. Instead of flooding teams with every minor fairness fluctuation, the platform uses anomaly detection to surface statistically significant bias changes that require investigation.

When an alert fires, Arthur automatically performs root cause analysis, showing you which data segments, features, or time periods are driving the fairness degradation. This turns a generic "bias detected" alert into specific, actionable insights about what changed and why.
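The two steps described here, filtering out normal variation and then narrowing down the cause, can be approximated with very little code. The sketch below is a generic illustration and makes no claim about how Arthur implements either step; the z-score rule, the function names, and the sample numbers are all assumptions.

```python
from statistics import mean, stdev


def is_significant_shift(history, latest, z_threshold=3.0):
    """Flag the latest fairness gap only if it is far outside past variation."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


def rank_segments(baseline_gaps, current_gaps):
    """Order data segments by how much their fairness gap has grown."""
    changes = {
        seg: current_gaps[seg] - baseline_gaps.get(seg, 0.0) for seg in current_gaps
    }
    return sorted(changes.items(), key=lambda item: item[1], reverse=True)


# Daily selection-rate gaps from past weeks (hypothetical values).
history = [0.04, 0.05, 0.06, 0.05, 0.04, 0.06, 0.05]
minor_wobble = is_significant_shift(history, 0.055)
real_change = is_significant_shift(history, 0.12)

# Per-segment gaps before and after the alert (hypothetical values).
baseline = {"region=north": 0.04, "region=south": 0.05, "region=west": 0.05}
current = {"region=north": 0.05, "region=south": 0.21, "region=west": 0.06}
ranked = rank_segments(baseline, current)

print(minor_wobble, real_change, ranked[0][0])
```

A small fluctuation stays silent, while a large jump raises an alert and points at the segment where the gap grew most.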

Key Features

Intelligent Alerting: Anomaly detection identifies statistically significant fairness changes while filtering out normal variation.

Automated Root Cause Analysis: When bias is detected, the platform automatically identifies contributing factors and affected data segments.

Structured ML and LLM Support: Monitor both traditional ML models and large language models for bias patterns.

Custom Fairness Thresholds: Set organization-specific fairness requirements based on your risk tolerance and regulatory obligations.

Drift Detection: Track how input data distributions change over time and correlate those shifts with fairness metric degradation.

Best For

Organizations that need production monitoring with minimal manual oversight, where automated diagnostics reduce the time from detection to remediation.

Pricing

Enterprise pricing based on model count and monitoring volume. Annual contracts typical for production deployments.

7. Weights & Biases

Best for: Experiment tracking with custom fairness metric dashboards

Weights & Biases extends its popular MLOps platform to include fairness evaluation alongside traditional performance metrics.

Where This Tool Shines

If you're already using Weights & Biases for experiment tracking, adding fairness metrics to your evaluation workflow requires minimal additional setup. You can log custom fairness metrics alongside accuracy and F1 scores, creating a complete picture of model performance.
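In practice this means building one flat dictionary that holds both performance and fairness numbers, then handing it to your tracker. The sketch below computes such a dictionary in plain Python. The `log_fn` parameter is a stand-in so the example runs without an account; with Weights & Biases you would typically pass `wandb.log` after calling `wandb.init(...)`. The metric names are assumptions, not a W&B convention.

```python
def evaluation_metrics(y_true, y_pred, groups):
    """Accuracy plus per-group selection rates in one flat, loggable dict."""
    totals, selected = {}, {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + pred
    rates = {g: selected[g] / totals[g] for g in totals}

    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    metrics = {"accuracy": correct / len(y_true)}
    for g in sorted(rates):
        metrics[f"selection_rate/{g}"] = rates[g]
    metrics["demographic_parity_difference"] = max(rates.values()) - min(rates.values())
    return metrics


def log_metrics(metrics, log_fn=print):
    # log_fn is a placeholder; swap in your experiment tracker's logging call.
    log_fn(metrics)


# Toy evaluation set (hypothetical).
groups = ["a"] * 4 + ["b"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

metrics = evaluation_metrics(y_true, y_pred, groups)
log_metrics(metrics)
```

Logging fairness and accuracy in the same call keeps them on the same run and the same charts, so a model variant cannot look better on one without the other being visible.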

The collaborative features are valuable for teams where data scientists, ML engineers, and compliance officers need shared visibility into fairness testing. Everyone can access the same dashboards and comment on experiments, streamlining the approval process for model deployments.

Key Features

Custom Fairness Metrics: Log any fairness metric as part of your experiment tracking, from standard metrics to organization-specific definitions.

Collaborative Dashboards: Share fairness evaluation results with stakeholders who can comment and approve without needing code access.

Experiment Comparison: Compare fairness metrics across model variants to understand fairness-accuracy tradeoffs.

Framework Integration: Works with PyTorch, TensorFlow, scikit-learn, and other major ML frameworks.

Report Generation: Create shareable reports documenting fairness testing for compliance and stakeholder communication.

Best For

Teams already invested in the Weights & Biases ecosystem who want to add fairness tracking without adopting a separate tool.

Pricing

Free tier available for individuals and small teams. Team plans start at $50 per user per month with volume discounts.

8. Holistic AI

Best for: Regulatory compliance and third-party AI auditing

Holistic AI positions bias detection within the broader context of AI governance and regulatory compliance.

Where This Tool Shines

As regulations like the EU AI Act introduce bias-related obligations for high-risk AI systems, Holistic AI provides frameworks aligned with regulatory standards. The platform maps your bias assessments to specific compliance requirements, making it easier to demonstrate regulatory adherence.

The third-party audit capabilities are particularly valuable for high-risk AI systems. External auditors can access standardized bias reports without needing direct access to your models or data, streamlining the audit process while maintaining security.

Key Features

EU AI Act Compliance: Pre-built frameworks and assessment templates aligned with European regulatory requirements.

Third-Party Audit Support: Generate standardized reports for external auditors without exposing proprietary model details.

Risk Scoring: Quantify AI system risk levels based on bias severity, use case sensitivity, and regulatory classification.

Mitigation Recommendations: Receive specific guidance on addressing identified bias based on industry best practices.

Policy Templates: Pre-built governance policies for common AI use cases and industries.

Best For

Organizations in regulated industries or European markets where demonstrating compliance with AI regulations is a primary driver for bias detection.

Pricing

Enterprise pricing based on number of AI systems assessed and regulatory complexity. Contact for industry-specific quotes.

9. Credo AI

Best for: Embedding AI governance and fairness checks into development workflows

Credo AI treats AI governance as code, integrating fairness requirements directly into CI/CD pipelines.

Where This Tool Shines

Instead of treating bias detection as a separate audit step, Credo AI embeds fairness checks into your existing development workflow. When a data scientist commits model changes, automated tests verify that fairness requirements are met before the model can be deployed.

The policy-as-code approach means your organization's fairness standards are enforced programmatically rather than relying on manual reviews. This reduces the risk of biased models reaching production because someone forgot to run fairness tests.
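The general pattern looks something like the sketch below: fairness limits live in version control as data, and a check script fails the pipeline when a model's metrics fall outside them. This is a generic illustration, not Credo AI's policy format or API; `FAIRNESS_POLICY`, `check_policy`, and `enforce` are hypothetical names, and the limits are example values (0.80 echoes the widely used four-fifths rule of thumb).

```python
# Policy as data: reviewed and versioned like any other code change.
FAIRNESS_POLICY = {
    "demographic_parity_difference": {"max": 0.10},
    "disparate_impact_ratio": {"min": 0.80},
}


def check_policy(metrics, policy):
    """Return a list of human-readable violations (empty means pass)."""
    violations = []
    for name, bounds in policy.items():
        if name not in metrics:
            violations.append(f"{name}: metric missing from evaluation output")
            continue
        value = metrics[name]
        if "max" in bounds and value > bounds["max"]:
            violations.append(f"{name}: {value} exceeds max {bounds['max']}")
        if "min" in bounds and value < bounds["min"]:
            violations.append(f"{name}: {value} is below min {bounds['min']}")
    return violations


def enforce(metrics, policy):
    """Exit with a non-zero status so a CI job fails when the policy is violated."""
    violations = check_policy(metrics, policy)
    if violations:
        raise SystemExit("Fairness policy failed:\n" + "\n".join(violations))


# Metrics for two model versions (hypothetical values).
passing = {"demographic_parity_difference": 0.04, "disparate_impact_ratio": 0.91}
failing = {"demographic_parity_difference": 0.18, "disparate_impact_ratio": 0.72}

passing_violations = check_policy(passing, FAIRNESS_POLICY)
failing_violations = check_policy(failing, FAIRNESS_POLICY)
print(passing_violations)
print(failing_violations)
```

In a pipeline, `enforce` would run as a required step after evaluation, so a missing metric blocks deployment just like an out-of-range one.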

Key Features

Policy-as-Code: Define fairness requirements as code that automatically tests every model version against organizational standards.

CI/CD Integration: Embed fairness checks into GitHub, GitLab, or other version control workflows as automated tests.

Automated Documentation: Generate compliance documentation automatically as models pass fairness tests, reducing manual reporting burden.

Stakeholder Collaboration: Allow legal, compliance, and business teams to review and approve fairness policies without technical expertise.

Audit Trail: Maintain a complete history of fairness test results and policy changes for regulatory investigations.

Best For

Engineering-first organizations with mature DevOps practices who want to scale AI governance without creating bottlenecks in the development process.

Pricing

Enterprise pricing based on team size and number of AI systems under governance. Annual contracts standard.

Making the Right Choice

The right bias detection tool depends on where you are in the AI lifecycle and what type of bias concerns you most.

If you need to monitor how external AI models like ChatGPT and Claude characterize your brand, Sight AI addresses a bias problem that traditional tools miss entirely. When AI systems perpetuate inaccurate or unfair representations of your organization, you need visibility before those characterizations spread to millions of users.

For technical teams building custom ML pipelines, IBM AI Fairness 360 and Microsoft Fairlearn provide the flexibility and depth required for sophisticated bias mitigation. These open-source libraries give you maximum control but require data science expertise to implement effectively.

Organizations running models in production should prioritize continuous monitoring platforms like Fiddler AI or Arthur AI. Models that pass pre-deployment fairness tests can still develop bias drift as data distributions change, making real-time monitoring essential for high-stakes applications.

When regulatory compliance drives your bias detection requirements, Holistic AI and Credo AI provide frameworks aligned with regulations like the EU AI Act. These platforms help you demonstrate compliance through standardized documentation and third-party audit capabilities.

The emerging category of AI visibility tools addresses a different dimension of bias entirely. As large language models increasingly influence customer decisions, how these models talk about your brand becomes a critical fairness concern. Traditional bias detection focuses on your internal models, but external AI systems can perpetuate biased characterizations that affect your business.

Start tracking your AI visibility today and see exactly where your brand appears across top AI platforms. Stop guessing how AI models like ChatGPT and Claude talk about your brand—get visibility into every mention, track content opportunities, and automate your path to organic traffic growth.